High-Score (Bugfree Users) Interview Experience: Walmart Senior Software Engineer — Why the Hiring Manager Round Can Make or Break It

bugfree.ai is an advanced AI-powered platform designed to help software engineers master system design and behavioral interviews. Whether you’re preparing for your first interview or aiming to elevate your skills, bugfree.ai provides a robust toolkit tailored to your needs. Key Features:

150+ system design questions: Master challenges across all difficulty levels and problem types, including 30+ object-oriented design and 20+ machine learning design problems. Targeted practice: Sharpen your skills with focused exercises tailored to real-world interview scenarios. In-depth feedback: Get instant, detailed evaluations to refine your approach and level up your solutions. Expert guidance: Dive deep into walkthroughs of all system design solutions like design Twitter, TinyURL, and task schedulers. Learning materials: Access comprehensive guides, cheat sheets, and tutorials to deepen your understanding of system design concepts, from beginner to advanced. AI-powered mock interview: Practice in a realistic interview setting with AI-driven feedback to identify your strengths and areas for improvement.

bugfree.ai goes beyond traditional interview prep tools by combining a vast question library, detailed feedback, and interactive AI simulations. It’s the perfect platform to build confidence, hone your skills, and stand out in today’s competitive job market. Suitable for:

New graduates looking to crack their first system design interview. Experienced engineers seeking advanced practice and fine-tuning of skills. Career changers transitioning into technical roles with a need for structured learning and preparation.

High-score Interview Recap: Walmart Senior Software Engineer — Why the Hiring Manager Round Matters

A candidate who cleared DSA, LLD, and System Design described their Hiring Manager (HM) round for Walmart’s Senior Software Engineer role as a decisive stage. Even after technical rounds, the HM focused on both concrete coding and high-level architectural trade-offs — proving that the HM interview can make or break the final outcome.

Below is a rephrased and expanded walkthrough of that experience, with actionable prep tips.

Interview flow (what the candidate cleared first)

- Data Structures & Algorithms (DSA)

- Low-Level Design (LLD)

- System Design

- Hiring Manager round (final, and surprisingly broad)

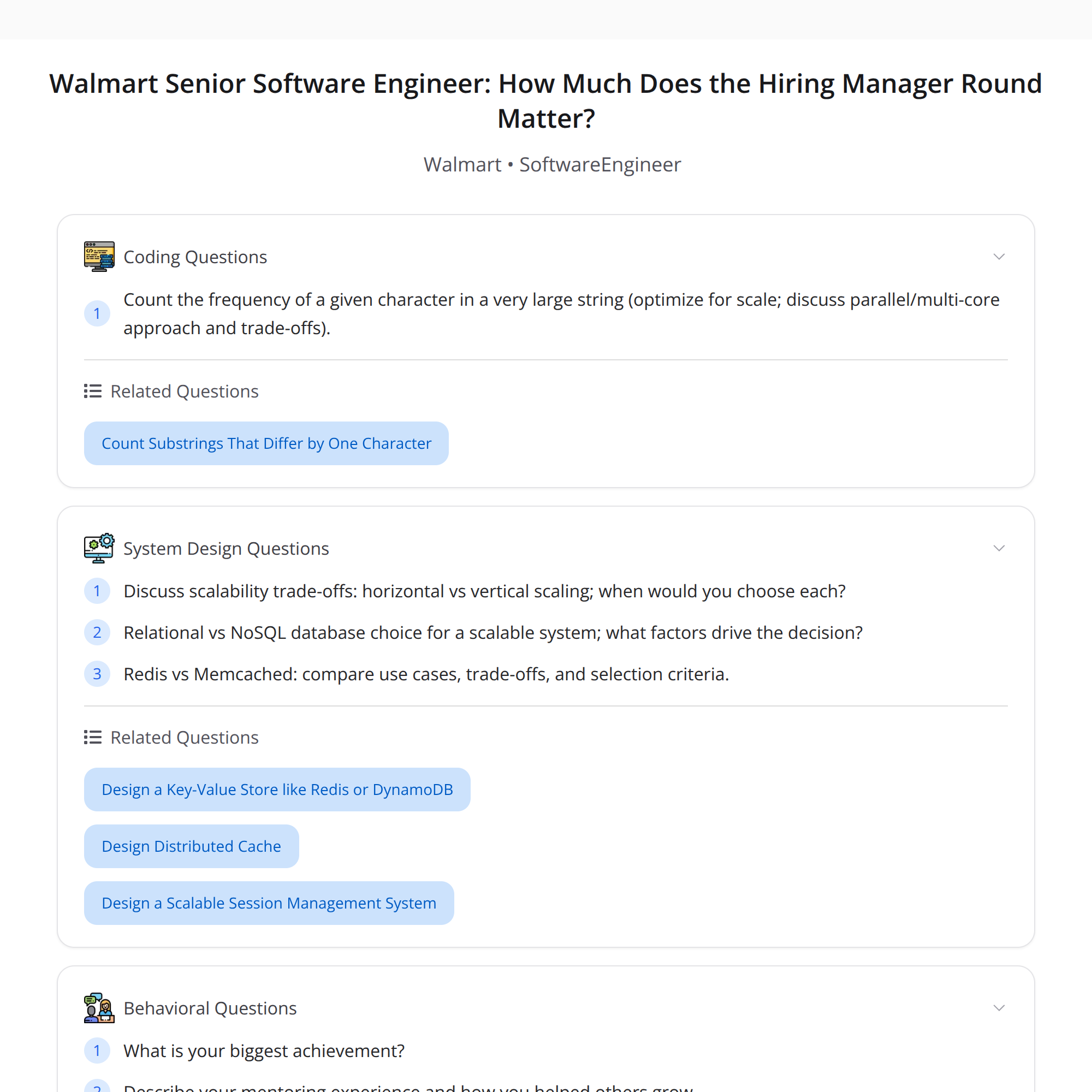

What the Hiring Manager focused on

The HM round combined live coding, systems thinking about parallelism and scaling, and behavioral questions. Key areas covered:

- Coding: Counting character frequency in a very large string

- Parallelism / multi-core trade-offs: partitioning, synchronization, overhead

- Real-world architecture: horizontal vs vertical scaling, relational vs NoSQL decisions, caching choices (Redis vs Memcached)

- Behavioral: biggest achievement, mentoring & influencing, owning releases

Deep dive: the coding problem — character frequency in a very large string

The problem sounds simple but the "very large" qualifier changes the approach.

Possible solutions and when to use them:

- In-memory hashmap (single-threaded): best when input fits comfortably in memory. Use streaming read and update a hashmap. For ASCII-only input, a fixed-size int[256] is fastest.

- Streaming / chunked counting: read the input in chunks, process each chunk to avoid loading the entire string at once.

- External sort or invert-count via disk: when input exceeds memory, use external counting techniques (split, sort, merge) or an external key-value store.

- MapReduce-style / distributed counting: useful for extremely large datasets across machines — map partial counts, then reduce/merge.

Parallel approach specifics:

- Partition the input into disjoint ranges that threads can process independently.

- Use thread-local counters and a final merge to minimize contention.

- Be aware of false sharing (put thread-local arrays on separate cache lines).

- Sync/lock costs: frequent locking kills parallel gains; prefer lock-free or batched merges.

Edge considerations to discuss with the interviewer:

- Memory vs time trade-offs

- I/O bottlenecks vs CPU

- Character encoding (Unicode vs ASCII)

Parallelism and multi-core trade-offs

Interviewers often probe whether you understand when parallelism helps and when it doesn't.

Key concepts to mention:

- Amdahl’s Law: diminishing returns as the serial portion dominates

- Overhead sources: thread startup, context switching, synchronization, merging results

- Partitioning strategy: balancing work while minimizing cross-thread communication

- Contention and locking: prefer per-thread aggregation and cooperative merging

- When parallelism is a bad idea: small inputs, high coordination costs, or I/O-bound workloads

Concrete example: splitting a 100GB file into N chunks works if the merge step is cheap and I/O is parallelizable; otherwise, gains are limited.

System architecture: horizontal vs vertical scaling

The HM probed real-world trade-offs:

- Vertical scaling (bigger machine): simpler, but limited ceiling and single point of failure.

- Horizontal scaling (more machines): better for fault tolerance and elasticity but increases complexity (sharding, consistency, coordination).

Decision factors:

- Predictable vs bursty load

- Statefulness of the service

- Cost and operational overhead

Relational vs NoSQL choices

Discuss the access patterns and consistency needs:

- RDBMS: strong consistency, complex transactions, joins. Pick when ACID and complex queries matter.

- NoSQL: horizontal scaling, flexible schemas, eventual consistency. Pick when you need large scale and simple access patterns.

Caching: Redis vs Memcached

High-level trade-offs:

- Memcached: simple, in-memory cache for key-value blobs, easy to scale horizontally, no persistence or advanced data types.

- Redis: feature-rich (sorted sets, lists, pub/sub), persistence options (RDB/AOF), stronger single-node capabilities, clustering for scale.

When to choose which:

- Use Memcached for simple, transient caching with minimal operational complexity.

- Use Redis when you need richer data structures, persistence, pub/sub, or advanced eviction semantics.

Behavioral questions (what they asked and how to prepare)

Typical HM behavioral topics:

- Biggest achievement: quantify impact (latency reduced by X%, revenue improvement, users reached)

- Mentoring & influence: examples of mentoring, cross-team influence, technical leadership

- Release ownership: describe a release you led, risks you managed, rollback/monitoring strategies

Preparation tips:

- Use STAR (Situation, Task, Action, Result). Keep actions concrete and results measurable.

- Have 3–4 stories ready that show leadership, conflict resolution, and technical depth.

Key takeaways and prep checklist

- The HM round can be decisive — prepare both technical depth and people/leadership stories.

- Brush up on coding for large inputs (streaming, external counting) and concurrent programming patterns.

- Be ready to reason about architecture trade-offs (scaling, data stores, caching) with clear justifications.

- Practice concise, quantified behavioral stories using STAR.

Quick checklist:

- Review streaming and external-memory algorithms

- Revisit Amdahl’s Law and thread-local aggregation patterns

- Refresh Redis vs Memcached features and RDBMS vs NoSQL trade-offs

- Prepare 3 STAR stories: ownership, mentoring, biggest technical achievement

Final note: clearing earlier technical rounds is excellent, but the HM round often probes end-to-end judgment — coding, architecture, and leadership together. Treat it as a holistic assessment.

#SoftwareEngineering #SystemDesign #InterviewPrep