Meta SWE Manager Interview — What Really Gets Tested (High-Score Bugfree Users)

Inside the Meta SWE Manager Loop: What Interviewers Actually Test

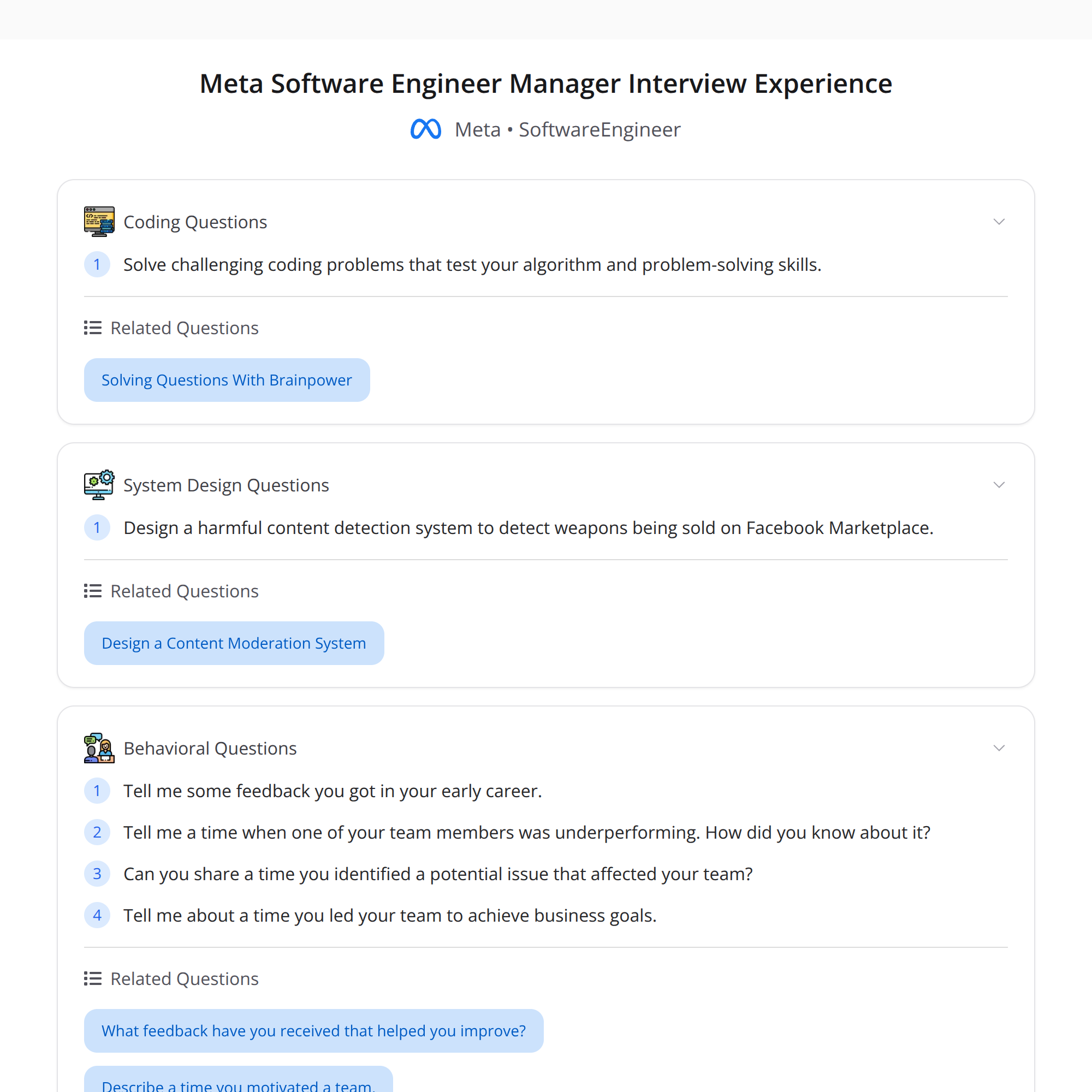

This condensed interview report from high-score (Bugfree) candidates shows that Meta's Software Engineering Manager (SWE Manager) loop is intentionally broad: it evaluates technical depth, product thinking, people leadership, and execution. Below is a reorganized, practical breakdown of what each interview focuses on, what interviewers listen for, and how to prepare.

1) Coding: correctness and efficiency under pressure

What they test

- Algorithmic problem solving under time pressure. Expect questions that require clear reasoning, correct edge-case handling, and asymptotic efficiency.

- Emphasis is not only on a working solution but also on trade-offs, complexity analysis, and incremental improvements.

What interviewers listen for

- Clean, testable code and clear communication of thought process.

- Ability to iterate: propose a simple correct solution first, then optimize.

How to practice

- Solve medium-to-hard LeetCode problems with a focus on patterns (two pointers, heaps, graphs, DP).

- Practice coding aloud and reasoning about complexity.

- Run through mock interviews where you explain trade-offs and edge cases.

Sample prompt

- "Given a stream of events, find the k most frequent items in the last T minutes." Write a correct solution; then discuss how to scale it for high throughput.
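One possible first answer to the sample prompt above is a minimal single-process sketch, shown here in Python. It assumes events arrive with non-decreasing timestamps (in seconds) and that the whole window fits in memory; the class name `SlidingWindowTopK` and its methods are hypothetical names chosen for illustration, not something the interview report prescribes.

```python
import heapq
from collections import Counter, deque


class SlidingWindowTopK:
    """Tracks item counts over the most recent `window_seconds` of events."""

    def __init__(self, window_seconds):
        self.window_seconds = window_seconds
        self.events = deque()    # (timestamp, item) pairs, oldest first
        self.counts = Counter()  # item -> number of occurrences in the window

    def _evict(self, now):
        # Drop events whose timestamp is at or before the window boundary.
        cutoff = now - self.window_seconds
        while self.events and self.events[0][0] <= cutoff:
            _, old_item = self.events.popleft()
            self.counts[old_item] -= 1
            if self.counts[old_item] == 0:
                del self.counts[old_item]

    def add(self, timestamp, item):
        # Assumption: timestamps are non-decreasing across calls.
        self.events.append((timestamp, item))
        self.counts[item] += 1
        self._evict(timestamp)

    def top_k(self, k, now):
        self._evict(now)
        # Highest count first; ties broken by item name for determinism.
        return heapq.nsmallest(
            k, self.counts.items(), key=lambda kv: (-kv[1], kv[0])
        )


window = SlidingWindowTopK(window_seconds=60)
for ts, item in [(0, "a"), (5, "a"), (10, "b"), (50, "b"), (55, "b"), (58, "c")]:
    window.add(ts, item)

print(window.top_k(2, now=58))  # [('b', 3), ('a', 2)]
print(window.top_k(2, now=70))  # [('b', 2), ('c', 1)]
```

Each `add` is amortized O(1) and each `top_k` is O(n log k) over the distinct items in the window. For the scaling follow-up, candidates typically move on to bucketed counts per time slice, sharding by item key, or approximate sketches, and explain what accuracy each option gives up.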

2) Behavioral: growth mindset and impact orientation

What they test

- Learning agility, ownership, and how you respond to feedback.

- Interviewers commonly probe early-career feedback and how you used it to improve.

What interviewers listen for

- Specific, measurable improvements after feedback.

- Evidence of reflection, humility, and a bias for action.

How to prepare

- Use the STAR framework with measurable outcomes: Situation, Task, Action, Result.

- Prepare 4–6 concise stories around feedback, conflict, influence, and leadership.

Sample question

- "What feedback did you get early in your career? How did you act on it and what changed?"

3) ML / System Design: real-world moderation and safety

What they test

- Ability to design production systems that tackle real problems, such as detecting weapon sales on a marketplace.

- Integration of ML models, rule-based systems, privacy and safety considerations, and operational constraints.

What interviewers listen for

- Clear problem scoping and prioritized requirements (precision vs. recall, latency, throughput).

- Trade-offs between model complexity and interpretability, and fallback/manual review strategies.
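To make the precision vs. recall trade-off concrete, here is a small Python sketch that evaluates a flagging threshold over classifier scores. The scores and labels are made-up illustration data (1 means a violating listing), and `precision_recall` is a hypothetical helper, not part of any particular library.

```python
def precision_recall(scores, labels, threshold):
    """Precision and recall when items with score >= threshold are flagged."""
    pairs = list(zip(scores, labels))
    tp = sum(1 for s, y in pairs if s >= threshold and y == 1)
    fp = sum(1 for s, y in pairs if s >= threshold and y == 0)
    fn = sum(1 for s, y in pairs if s < threshold and y == 1)
    # Convention used in this sketch: an empty denominator yields 1.0.
    precision = tp / (tp + fp) if (tp + fp) else 1.0
    recall = tp / (tp + fn) if (tp + fn) else 1.0
    return precision, recall


# Made-up data for illustration only.
scores = [0.95, 0.80, 0.60, 0.40, 0.20]
labels = [1, 1, 0, 1, 0]

for threshold in (0.7, 0.3):
    p, r = precision_recall(scores, labels, threshold)
    print(f"threshold={threshold}: precision={p:.2f}, recall={r:.2f}")
# threshold=0.7: precision=1.00, recall=0.67
# threshold=0.3: precision=0.75, recall=1.00
```

Lowering the threshold catches the third violating listing but also flags a legitimate one. That is the kind of trade-off worth stating explicitly, along with which side the product should favor and why.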

How to prepare

- Practice end-to-end design: data collection, feature extraction, model choice, evaluation metrics, deployment, monitoring and feedback loops.

- Consider abuse scenarios and adversarial behavior; plan mitigations.

Sample prompt

- "Design a system to detect and remove listings that facilitate weapon sales on the marketplace. Describe signals, model architecture, human-in-the-loop flow, and how you measure success."
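One way to sketch the human-in-the-loop flow from the prompt above is a routing function that combines a rule signal with a model score. Everything named here is an assumption for illustration: the thresholds, the `BLOCKED_TERMS` keyword list, the `Listing` fields, and the three outcomes would all come from policy and evaluation data in a real system.

```python
from dataclasses import dataclass

# Hypothetical values; real thresholds would be tuned on labeled data.
AUTO_REMOVE_THRESHOLD = 0.95
REVIEW_THRESHOLD = 0.60
BLOCKED_TERMS = {"handgun", "rifle"}


@dataclass
class Listing:
    listing_id: str
    text: str
    model_score: float  # assumed classifier output in [0, 1]


def route(listing):
    """Return 'remove', 'human_review', or 'allow' for a listing."""
    text = listing.text.lower()
    rule_hit = any(term in text for term in BLOCKED_TERMS)
    # Very confident model predictions are removed automatically.
    if listing.model_score >= AUTO_REMOVE_THRESHOLD:
        return "remove"
    # Rule hits and mid-confidence scores go to human reviewers.
    if rule_hit or listing.model_score >= REVIEW_THRESHOLD:
        return "human_review"
    return "allow"


print(route(Listing("1", "Selling a handgun, barely used", 0.30)))  # human_review
print(route(Listing("2", "Mountain bike", 0.97)))                   # remove
print(route(Listing("3", "Mountain bike", 0.10)))                   # allow
```

In this sketch a keyword hit alone never removes a listing automatically, which keeps the cheap rule from causing false removals. Reviewer decisions can then be logged as labels for retraining, closing the feedback loop.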

4) People Management: spotting risk, handling underperformance, conflict resolution

What they test

- Your approach to performance management, spotting flight risks, mentoring, and conflict resolution.

- Expect deep follow-up questions that probe your actual coaching habits and escalation decisions.

What interviewers listen for

- Evidence of proactive risk identification and concrete examples of course-correcting underperformance.

- Balance between empathy and accountability; how you escalate vs. coach.

How to prepare

- Craft stories where you diagnosed a performance issue, created a development plan, and reached measurable outcomes (or had to make a hard decision).

- Be ready to discuss one-on-one cadence, career development plans, and when to involve HR.

Sample prompt

- "A high-performer’s output has dropped. How do you investigate and address it? Walk me through your actions and timeline."

5) Project Retrospective: driving teams to outcomes with strategy and decisions

What they test

- Ability to lead projects toward business outcomes: setting strategy, prioritizing work, and making trade-off decisions under uncertainty.

What interviewers listen for

- Clear articulation of business metrics you owned, how technical choices supported those metrics, and lessons learned.

- Decision-making process and who you involved.

How to prepare

- Prepare 2–3 deep examples where you led a cross-functional effort from problem definition to measurable impact.

- Be explicit about decisions, alternatives considered, and post-launch insights.

Sample prompt

- "Describe a project you led that changed a business metric. What trade-offs did you make, and what would you do differently?"

Quick prep checklist

- Refresh medium/hard algorithm problems and practice coding interviews.

- Prepare concise STAR stories focused on feedback, conflict, mentoring, and project outcomes.

- Practice designing systems that combine ML, heuristics, and human review with attention to safety and metrics.

- Rehearse people-management scenarios with concrete timelines and measurable outcomes.

Recommended resources

- System Design Primer, productionizing ML guides, Level Up interviews (mock manager loops), and targeted behavioral prep using the STAR method.

Meta’s loop evaluates a manager’s ability to combine technical rigor with people leadership and product judgment. Practice clear communication, measurable outcomes, and trade-off reasoning to perform well across the loop.