High-Score (Bugfree Users) Meta SWE Manager Interview Experience: What Really Gets Tested

bugfree.ai is an advanced AI-powered platform designed to help software engineers master system design and behavioral interviews. Whether you’re preparing for your first interview or aiming to elevate your skills, bugfree.ai provides a robust toolkit tailored to your needs. Key Features:

150+ system design questions: Master challenges across all difficulty levels and problem types, including 30+ object-oriented design and 20+ machine learning design problems. Targeted practice: Sharpen your skills with focused exercises tailored to real-world interview scenarios. In-depth feedback: Get instant, detailed evaluations to refine your approach and level up your solutions. Expert guidance: Dive deep into walkthroughs of all system design solutions like design Twitter, TinyURL, and task schedulers. Learning materials: Access comprehensive guides, cheat sheets, and tutorials to deepen your understanding of system design concepts, from beginner to advanced. AI-powered mock interview: Practice in a realistic interview setting with AI-driven feedback to identify your strengths and areas for improvement.

bugfree.ai goes beyond traditional interview prep tools by combining a vast question library, detailed feedback, and interactive AI simulations. It’s the perfect platform to build confidence, hone your skills, and stand out in today’s competitive job market. Suitable for:

New graduates looking to crack their first system design interview. Experienced engineers seeking advanced practice and fine-tuning of skills. Career changers transitioning into technical roles with a need for structured learning and preparation.

High-Score Meta SWE Manager Interview — What Really Gets Tested

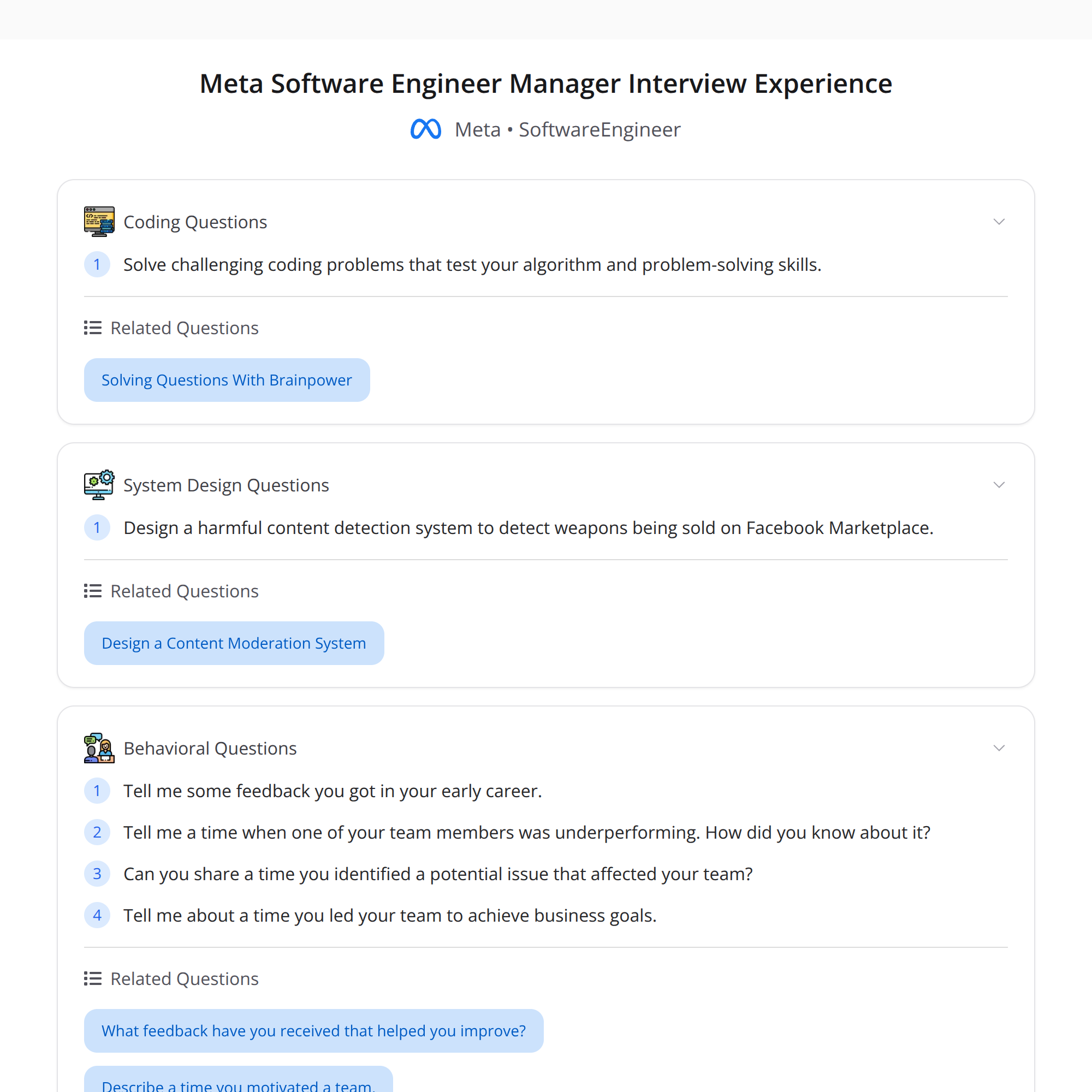

This is a concise, first-hand breakdown of a high-scoring Software Engineering Manager (SWE Manager) interview loop at Meta, distilled from Bugfree users' reports. The loop is broad and deep: expect algorithmic coding, behavioral probes, system/ML design rooted in real-world product problems, people-management scenarios, and project leadership retrospectives.

Interview loop overview

The Meta SWE Manager process tests five core areas:

- Coding (algorithms)

- Behavioral (growth mindset, feedback, leadership style)

- ML / System Design (applied to real product moderation problems)

- People Management (coaching, underperformance, conflict)

- Project Retrospective (strategy, decisions, business outcomes)

Below is what each area tends to evaluate and how to prepare efficiently.

1) Coding — correctness and efficiency under pressure

What they test:

- Algorithmic problem-solving with emphasis on correct, clear solutions.

- Time-pressure tradeoffs: produce a working solution, then optimize.

- Communication: explain approach, tradeoffs, and complexity.

How to prepare:

- Practice medium-to-hard algorithm problems (arrays, trees, graphs, DP, hash/sets).

- Talk through edge cases and test your solution aloud.

- After a correct solution, discuss optimizations and complexity bounds.

- Write clean code and explain invariants—interviewers probe your reasoning.

Quick tip: if you can’t finish, deliver a correct brute-force and outline the optimized path.

2) Behavioral — growth mindset and concrete learning stories

What they test:

- Ability to accept, act on, and learn from feedback.

- Evidence of continuous growth and self-awareness.

- How you handle tradeoffs, ambiguity, and difficult conversations.

Common probe: “What feedback did you get early in your career?”

How to prepare:

- Pick 2–3 concise, specific stories where feedback changed your behavior.

- Structure answers: Situation → Feedback → Action → Outcome (quantify when possible).

- Show reflection and concrete changes you made after the feedback.

3) ML / System Design — practical product moderation scenarios

Example prompt reported: design a system to detect weapons sales on Facebook Marketplace.

What they test:

- End-to-end thinking: signals, model types, retrieval, ranking, human-in-loop.

- Tradeoffs: precision vs. recall, latency, privacy, fairness, false positives.

- Operational concerns: labeling, monitoring, escalation, legal/policy constraints.

How to approach such problems:

- Clarify product goals and acceptable error rates (business vs. safety priorities).

- Sketch a high-level pipeline: ingestion → feature extraction → model(s) → rules → human review → enforcement.

- Discuss data sources: text, images, seller history, pricing anomalies, geo-signals.

- Consider detection techniques: keyword rules, classification models, image detection (object detection), multimodal fusion.

- Define metrics and monitoring: precision@k, false positive impact, runtime constraints, feedback loop for retraining.

Quick tip: explicitly call out privacy and policy implications and propose mitigations (e.g., differential access, escalation paths).

4) People Management — deep follow-ups on real people problems

What they test:

- Your approach to underperformance: root-cause diagnosis, coaching vs. replacement.

- Risk spotting: when a project or person is going off track.

- Conflict handling and calibration with peers and execs.

How to prepare:

- Have 2–3 stories showing how you coached someone, handled a conflict, or mitigated risk.

- Use concrete steps: expectations set, checkpoints, feedback cadence, measurable signals of progress or failure.

- Show empathy and ownership, but also clarity on performance consequences when needed.

Frameworks to leverage: SBI (Situation-Behavior-Impact), GROW (Goal-Reality-Options-Will), and clear performance plans.

5) Project Retrospective — leading teams to measurable outcomes

What they test:

- Your ability to drive a project from ambiguous goal to business impact.

- Clarity of strategy, tradeoffs made, and how decisions were communicated.

How to prepare:

- Prepare a 2–3 minute narrative of a project you led: goal, constraints, key decisions, metrics, outcome.

- Emphasize your role in prioritization, stakeholder alignment, and tradeoffs (technical debt vs. speed, scope vs. quality).

- Quantify outcomes (traffic, revenue, moderation accuracy, latency improvements) and lessons learned.

Quick prep checklist

- Practice coding with clear verbalization and complexity analysis.

- Prepare 4–6 behavioral stories (feedback, failure, influence, conflict) with measurable outcomes.

- Walk through at least one ML + system design case focusing on product goals and operations.

- Rehearse people-management examples: performance improvement plans, escalations, hiring decisions.

- Prepare a concise project retrospective showing strategy → decision → measurable impact.

Final tips

- Expect deep follow-ups: interviewers dig into specifics. Numbers and timelines help.

- Frame tradeoffs explicitly and justify them relative to business goals.

- Show humility and teachability—Meta values growth mindset.

- If unsure, ask clarifying questions and surface assumptions early.

Good luck — target clarity, concrete outcomes, and a strong narrative connecting technical decisions to product and people impact.