High-Score (Bugfree Users) Interview Experience: Oracle OCI Software Engineer — What They Really Test

bugfree.ai is an advanced AI-powered platform designed to help software engineers master system design and behavioral interviews. Whether you’re preparing for your first interview or aiming to elevate your skills, bugfree.ai provides a robust toolkit tailored to your needs. Key Features:

150+ system design questions: Master challenges across all difficulty levels and problem types, including 30+ object-oriented design and 20+ machine learning design problems. Targeted practice: Sharpen your skills with focused exercises tailored to real-world interview scenarios. In-depth feedback: Get instant, detailed evaluations to refine your approach and level up your solutions. Expert guidance: Dive deep into walkthroughs of all system design solutions like design Twitter, TinyURL, and task schedulers. Learning materials: Access comprehensive guides, cheat sheets, and tutorials to deepen your understanding of system design concepts, from beginner to advanced. AI-powered mock interview: Practice in a realistic interview setting with AI-driven feedback to identify your strengths and areas for improvement.

bugfree.ai goes beyond traditional interview prep tools by combining a vast question library, detailed feedback, and interactive AI simulations. It’s the perfect platform to build confidence, hone your skills, and stand out in today’s competitive job market. Suitable for:

New graduates looking to crack their first system design interview. Experienced engineers seeking advanced practice and fine-tuning of skills. Career changers transitioning into technical roles with a need for structured learning and preparation.

High-score (Bugfree users) Interview Experience: Oracle OCI Software Engineer — What They Really Test

This is a concise, practical breakdown of a high-scoring (Bugfree users) Oracle OCI Software Engineer interview — what was actually asked, how the rounds were structured, and how to prepare so you can focus on the right topics.

Quick overview

The process was end-to-end and covered four broad areas:

- Screening: fundamentals in CS, backend, web basics and data/DB concepts

- Loop rounds: deeper system design, project walkthroughs, and coding

- Coding: LeetCode-style algorithm problems during loops

- Behavioral: ownership, on-call incidents, impact and learning from mistakes

Below I expand each area, list example questions, and give practical prep tips.

Screening round — what they test

The screening focused on core fundamentals and quick conceptual checks.

Key topics asked:

CS & backend fundamentals

- Mutex vs semaphore — when to use each and the difference in counting behavior

- sleep vs wait in Java — thread scheduling vs monitor release

- Optimistic vs pessimistic locking — tradeoffs and use cases

Web basics

- HTTPS fundamentals (TLS handshake, certificates)

- Why use Tomcat (servlet container, deployment model)

- Why Protobuf (compact binary format, forward/backward compatibility, speed)

Data and DB concepts

- DynamoDB: LSI vs GSI — local vs global secondary indexes and partitioning implications

- Communication protocols: HTTP/1.1 vs HTTP/2 vs gRPC (streaming, multiplexing, binary framing)

Screening is usually fast — show you can reason clearly, avoid overlong answers, and give tradeoffs.

Loop rounds — deeper focus

Loop (onsite) rounds probed design, scalability, testing, and coding.

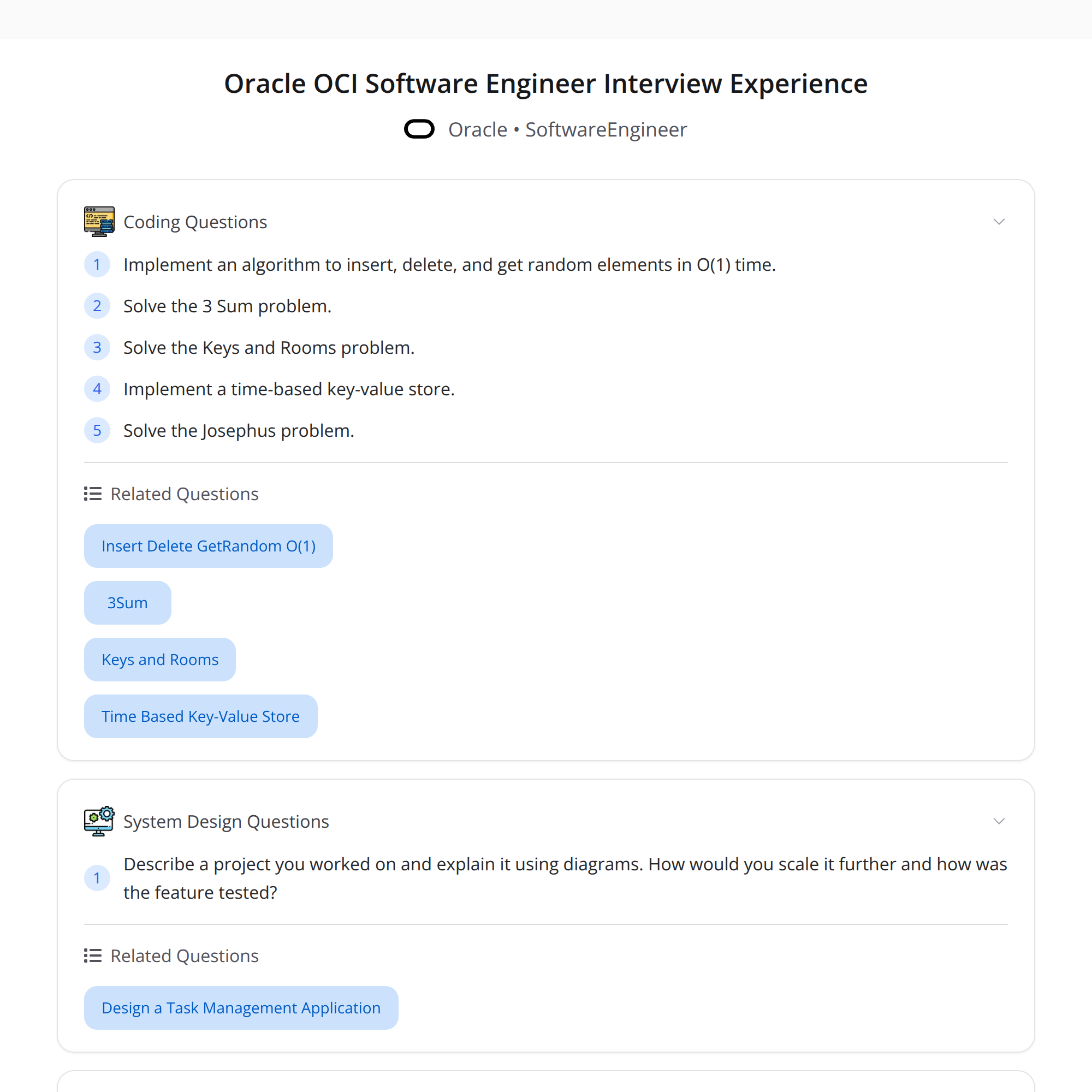

System design & project walkthrough

- Expect a walkthrough of a past project: architecture, tradeoffs, scaling decisions, and testing strategies.

- Scaling questions: load balancing, caching, sharding, partition keys, rate limiting, failover and observability.

- Testing/operational concerns: unit/integration tests, canary/blue-green deployment, monitoring, SLO/SLI and alerting.

Coding

- LeetCode-style problems focusing on clarity, time/space complexity, edge cases and correctness.

- Common problem types: arrays, strings, trees/graphs (BFS/DFS), dynamic programming, two-pointers, sliding window.

Loop rounds evaluate both system-level judgement and hands-on problem solving.

Behavioral — as important as technical

Behavioral questions measured ownership, learning, and real-world impact. Common themes:

- Biggest mistakes & what you learned — show humility and structured improvements.

- Impact — quantify results (latency improvements, cost reduction, user impact).

- On-call incidents — describe incident, detection, mitigation, postmortem and follow-up.

- Deployment ownership — processes you followed for safe deploys and rollbacks.

Be specific: use metrics, describe technical steps taken, and show ownership over resolution and prevention.

Sample questions (with concise answers)

Q: Mutex vs semaphore?

- A: Mutex is a binary lock for mutual exclusion (one owner). Semaphore can allow N concurrent holders — useful for resource pools.

Q: sleep() vs wait() in Java?

- A: sleep pauses a thread without releasing locks; wait releases the monitor and waits to be notified.

Q: Optimistic vs pessimistic locking?

- A: Optimistic assumes low conflict and checks on commit (good for reads); pessimistic locks resources up front to avoid conflicts (good for high contention).

Q: LSI vs GSI in DynamoDB?

- A: LSI is local to a partition key and shares the same partition key, supports alternate sort keys; GSI can index across partitions (different partition key), useful for global queries but costs differ.

Q: Why Protobuf vs JSON?

- A: Protobuf is compact, typed, and faster to parse — good for internal RPC and high-throughput services.

Q: How would you scale a service that’s CPU-bound?

- A: Profile to find hotspots, optimize critical code, add horizontal instances behind a load balancer, consider batching, and offload to worker queues.

Q: Example LeetCode problem to expect?

- A: "Given an array, find the longest subarray with sum K" — think sliding window for positives, prefix sums + hashmap when elements can be negative.

Preparation checklist (practical)

- Review concurrency basics (mutex, semaphore, locks, memory models).

- Brush up Java thread primitives if interviewing with Java.

- Read TLS/HTTPS essentials and common deployment containers (Tomcat, servlet model).

- Study Protobuf and RPC frameworks (gRPC) vs REST.

- Revisit DynamoDB concepts — partition keys, LSI/GSI behaviors, capacity modes.

- Practice medium-to-hard LeetCode problems with explanations; time-box each solve.

- Prepare 2–3 project walkthroughs with metrics and tradeoffs highlighted.

- Prepare behavioral stories using the STAR format (Situation, Task, Action, Result).

Recommended resources: System Design Primer, LeetCode (medium/hard), Cracking the Coding Interview, official OCI/AWS/DynamoDB docs.

Final tips

- Be concise and structured: state assumptions, outline your approach, and then drill into details.

- Always quantify impact in behavioral answers.

- For system design, cover API contracts, data model, scaling, caching, and failure scenarios.

- During coding, communicate thought process, handle edge cases, and run correctness checks.

Good luck — focus on fundamentals, be ready to justify tradeoffs, and demonstrate ownership in past work.