High-Score (Bugfree Users) Meta E5 Onsite: Coding + System Design + Behavioral — What Worked

bugfree.ai is an advanced AI-powered platform designed to help software engineers master system design and behavioral interviews. Whether you’re preparing for your first interview or aiming to elevate your skills, bugfree.ai provides a robust toolkit tailored to your needs. Key Features:

150+ system design questions: Master challenges across all difficulty levels and problem types, including 30+ object-oriented design and 20+ machine learning design problems. Targeted practice: Sharpen your skills with focused exercises tailored to real-world interview scenarios. In-depth feedback: Get instant, detailed evaluations to refine your approach and level up your solutions. Expert guidance: Dive deep into walkthroughs of all system design solutions like design Twitter, TinyURL, and task schedulers. Learning materials: Access comprehensive guides, cheat sheets, and tutorials to deepen your understanding of system design concepts, from beginner to advanced. AI-powered mock interview: Practice in a realistic interview setting with AI-driven feedback to identify your strengths and areas for improvement.

bugfree.ai goes beyond traditional interview prep tools by combining a vast question library, detailed feedback, and interactive AI simulations. It’s the perfect platform to build confidence, hone your skills, and stand out in today’s competitive job market. Suitable for:

New graduates looking to crack their first system design interview. Experienced engineers seeking advanced practice and fine-tuning of skills. Career changers transitioning into technical roles with a need for structured learning and preparation.

High-Score (Bugfree Users) Meta E5 Onsite: What Worked

Posted by Bugfree Users — A high-scoring interview experience and what helped me succeed.

This Meta E5 onsite felt very structured and interactive: each round was time-boxed and concluded with dry runs and discussion. Below I summarize the interview flow, the key coding problems and approaches, the system design conversations, and the behavioral topics that stood out — plus practical tips you can reuse.

Interview at-a-glance

- Format: Multiple rounds, each with a coding or design focus, ending with dry runs and discussion.

- Tone: Interactive — interviewers pushed for tradeoffs and alternatives rather than only a single solution.

- Recommended approach that worked: clarify, propose multiple solutions, pick one, implement, dry-run, fix bugs.

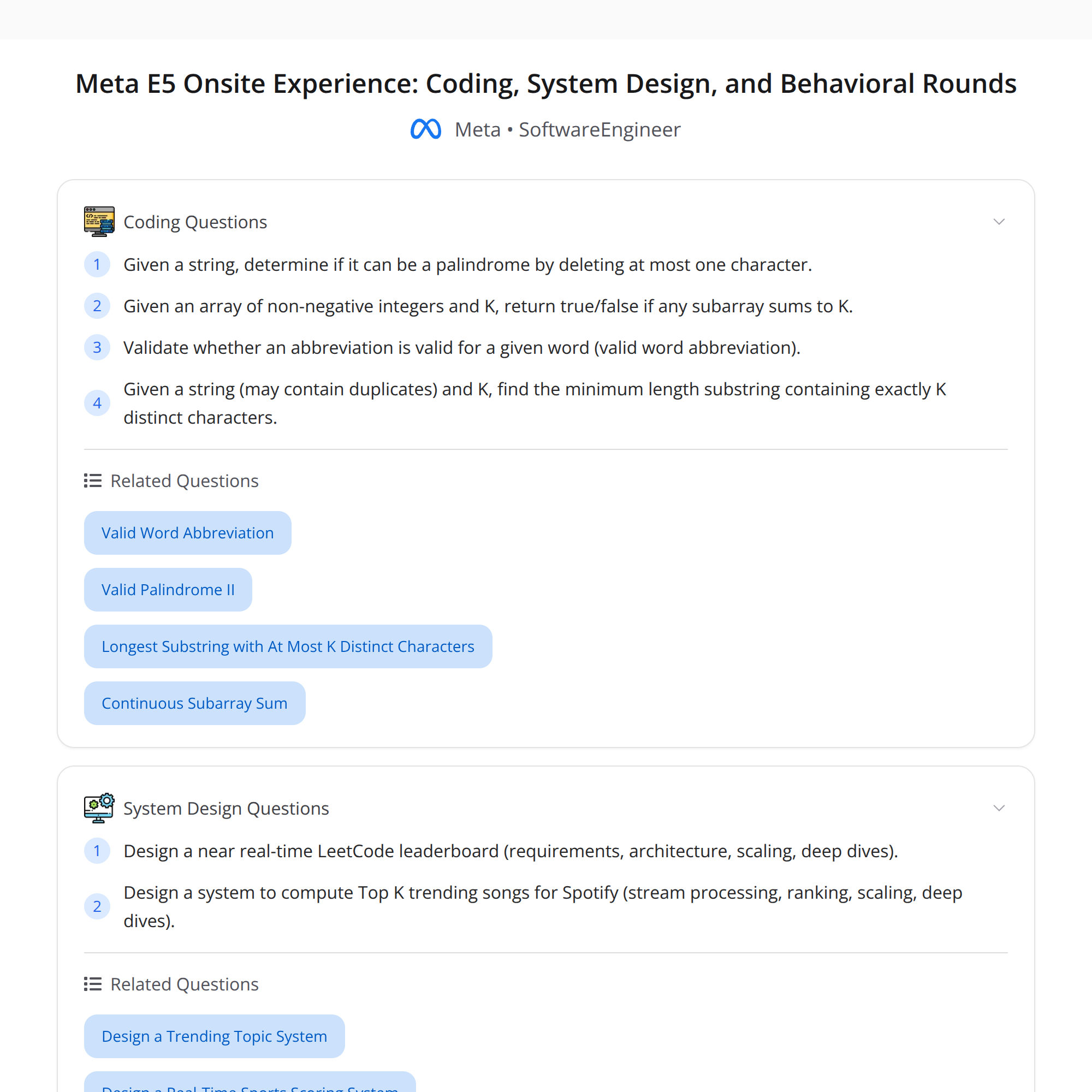

Coding rounds — problems, approach, and tips

Overall theme: clear requirement-gathering, talk complexity and tradeoffs, and dry-run your code.

Problem 1 — Palindrome with one deletion + Subarray sum = K (non-negative)

- Time: ~35 minutes for both (combined in one round).

- Key actions:

- Clarified edge cases (empty strings, single chars, indices returned vs boolean).

- Proposed two approaches for the palindrome-with-deletion: two-pointer greedy check vs dynamic programming for general k deletions.

- Implemented the two-pointer greedy (O(n) time, O(1) space) and handled the check with a helper function.

- For subarray sum with non-negative numbers used sliding-window / two-pointer technique (O(n) time). Clarified whether numbers could be zero and whether negative numbers were possible; this affected algorithm choice.

- What helped:

- Sharing multiple approaches quickly showed that I understood tradeoffs.

- After a hint from the interviewer I found an initial off-by-one bug and fixed it during the dry run.

Tips and pitfalls:

- For palindrome with one deletion, be explicit about returning boolean vs index. When using two pointers, ensure the helper check runs on the correct substring.

- For subarray sum with non-negative integers, prefer sliding window; if negatives are allowed, switch to prefix-sum + hashmap.

Complexities:

- Palindrome-with-one-deletion: O(n) time, O(1) space.

- Subarray sum (non-negative): O(n) time, O(1) space.

Problem 2 — Valid word abbreviation + Min-length substring with exactly K distinct chars

- Time: ~40 minutes total.

Valid word abbreviation

- Typical LeetCode-style check: walk both strings with pointers, parse numbers vs letters, ensure no leading zeros in counts.

- Complexity: O(n) time, O(1) space.

- Tip: Validate numerical parts carefully (no "01" allowed unless the problem states otherwise).

Minimum-length substring with exactly K distinct characters

- Approach: sliding window with a hashmap/counter that tracks character frequencies.

- Expand the right pointer until we have >= K distinct characters; then shrink the left pointer while maintaining exactly K to update the minimum length.

- Important nuance: stopping condition and when to update the minimum requires careful dry-running — I caught a bug here during the dry run and fixed the window update logic.

- Complexity: O(n) time, O(min(n, alphabet)) space.

General coding tips that worked in the interview

- State complexity and tradeoffs before coding.

- Write clean helpers and give short names to invariants (e.g., distinctCount, freqMap).

- Dry-run small examples aloud — it helps reveal off-by-one or boundary bugs.

System design rounds — two designs and core tradeoffs

The interviews dove deep on scaling and tradeoffs. Two prompts:

1) Near real-time LeetCode-style leaderboard

- Requirements discussed: frequent submissions, leaderboard refresh latency (near real-time), global scale, and read vs write ratio.

- Suggested architecture:

- Ingestion layer: API servers that accept submissions, validate, and publish events to a streaming system (Kafka/PubSub).

- Stream processing: lightweight stream processors (Flink/Beam/Kinesis) to compute incremental scores and ranking deltas.

- Ranking store: Redis Sorted Sets or a sharded in-memory service for top-K queries with TTLs for cached views.

- Persistent store: write events and aggregated results to a durable DB (Cassandra/Bigtable) for analytics and recovery.

- Cache & CDN: cache static leaderboard pages, but provide a real-time endpoint for live updates via WebSockets or server-sent events.

- Key tradeoffs and choices:

- Consistency vs latency: eventual consistency via streaming gives lower latency; strict global consistency would be slower and harder to scale.

- Storage for rank history: time-series DB vs append-only events.

- Hot keys: use sharding and replication strategies to prevent a single leaderboard from becoming a bottleneck.

2) Spotify-like Top-K trending songs (near real-time)

- Requirements: compute trending top-K across region/global, support plays, likes, and trending window (e.g., last hour/day), handle high write throughput.

- Suggested architecture elements:

- Event ingestion: play/like events into a streaming platform.

- Aggregation via sliding-window stream processing (e.g., tumbling or hopping windows depending on freshness requirements).

- Approximate counting: use space-efficient sketches (Count-Min Sketch, HyperLogLog) for massive scale when exact counts are not needed.

- Ranking & alerting: materialize top-K per region using incremental top-K algorithms; store in in-memory stores for low-latency queries.

- Offline processing: longer-term trends and anti-fraud analysis in batch jobs.

- Tradeoffs discussed:

- Exact vs approximate counts: exact is more expensive but simpler; approximate saves memory and CPU at cost of occasional inaccuracy.

- Window size: smaller windows increase freshness but also resource use.

- Anti-fraud: incorporate rate-limits and anomaly detection to prevent spurious trending.

System design tips that mattered

- Ask about requirements (latency, consistency, cost, traffic patterns) early.

- Sketch core components first, then dive into scaling: sharding, caching, replication, failure modes.

- Discuss metrics (QPS, latency, data retention), bottlenecks, and recovery strategies.

Behavioral rounds — themes and what resonated

Topics covered: conflict handling, proud projects, pivoting mid-execution, and weakness.

What worked in responses:

- Conflict handling: describe the situation briefly (context), the actions you took (listen, align on data, propose tradeoffs), and the outcome. Emphasize collaboration and measurable results.

- Proud project: focus on the technical depth, impact (metrics), and what you learned or changed in the codebase/process.

- Pivoting mid-execution: show how you recognized the need to change course, how you communicated the change, and how you validated the new direction.

- Weakness: pick a real but non-critical weakness and show the steps you’ve taken to improve and mitigate it.

Behavioral tips

- Use the STAR framework (Situation, Task, Action, Result) concisely.

- Quantify impact where possible (performance improvements, user metrics, reduced incidents).

- Be specific about your role vs the team.

Takeaways & practical advice

- Clarify requirements up front — it saves time and shows product sense.

- Offer multiple approaches quickly; implement the simplest correct solution unless a better tradeoff is warranted.

- Talk complexity and space tradeoffs explicitly.

- Dry-run your code out loud to catch off-by-ones and boundary issues.

- In system design, prioritize the core read/write path and then iterate on scaling (sharding, caching, stream processing).

- For behavioral questions, be concise, data-driven, and reflective.

If you’re preparing for Meta E5 or similar onsite loops: practice time-boxed whiteboarding, mock dry-runs, and structured behavioral stories.

Hashtags: #SoftwareEngineering #SystemDesign #InterviewPrep #MetaE5