High-Score (Bugfree Users) Meta E5 Onsite: Coding + System Design + Behavioral — What Worked

bugfree.ai is an advanced AI-powered platform designed to help software engineers master system design and behavioral interviews. Whether you’re preparing for your first interview or aiming to elevate your skills, bugfree.ai provides a robust toolkit tailored to your needs. Key Features:

150+ system design questions: Master challenges across all difficulty levels and problem types, including 30+ object-oriented design and 20+ machine learning design problems. Targeted practice: Sharpen your skills with focused exercises tailored to real-world interview scenarios. In-depth feedback: Get instant, detailed evaluations to refine your approach and level up your solutions. Expert guidance: Dive deep into walkthroughs of all system design solutions like design Twitter, TinyURL, and task schedulers. Learning materials: Access comprehensive guides, cheat sheets, and tutorials to deepen your understanding of system design concepts, from beginner to advanced. AI-powered mock interview: Practice in a realistic interview setting with AI-driven feedback to identify your strengths and areas for improvement.

bugfree.ai goes beyond traditional interview prep tools by combining a vast question library, detailed feedback, and interactive AI simulations. It’s the perfect platform to build confidence, hone your skills, and stand out in today’s competitive job market. Suitable for:

New graduates looking to crack their first system design interview. Experienced engineers seeking advanced practice and fine-tuning of skills. Career changers transitioning into technical roles with a need for structured learning and preparation.

High-Score (Bugfree Users) Meta E5 Onsite: Coding + System Design + Behavioral — What Worked

Posted by Bugfree Users — a high-score interview experience and practical takeaways from a Meta E5 onsite that felt interactive, time-boxed, and very focused on dry runs and discussion.

Quick summary

- Interview flow: time-boxed rounds that ended with dry runs and discussion. Interviewers expected clarity on trade-offs and incremental improvements.

- Coding rounds: two focused sessions with algorithmic problems. Clarify constraints, discuss multiple approaches, and dry-run edge cases.

- System design: deep dives into scaling, trade-offs, and near-real-time requirements.

- Behavioral: STAR answers covering conflict handling, proud project, pivots, and weakness.

Interview structure and strategy

The onsite felt very interactive: each round had clear time limits and concluded with a dry run or walkthrough where the interviewer probed edge cases and trade-offs. That meant:

- Clarify inputs, outputs and constraints early (e.g., non-negative array, allowed deletions).

- State multiple approaches (naive → improved → optimal) and pick one, but keep alternatives ready.

- Implement incrementally and test with hand examples; expect the interviewer to give hints that point to subtle bugs.

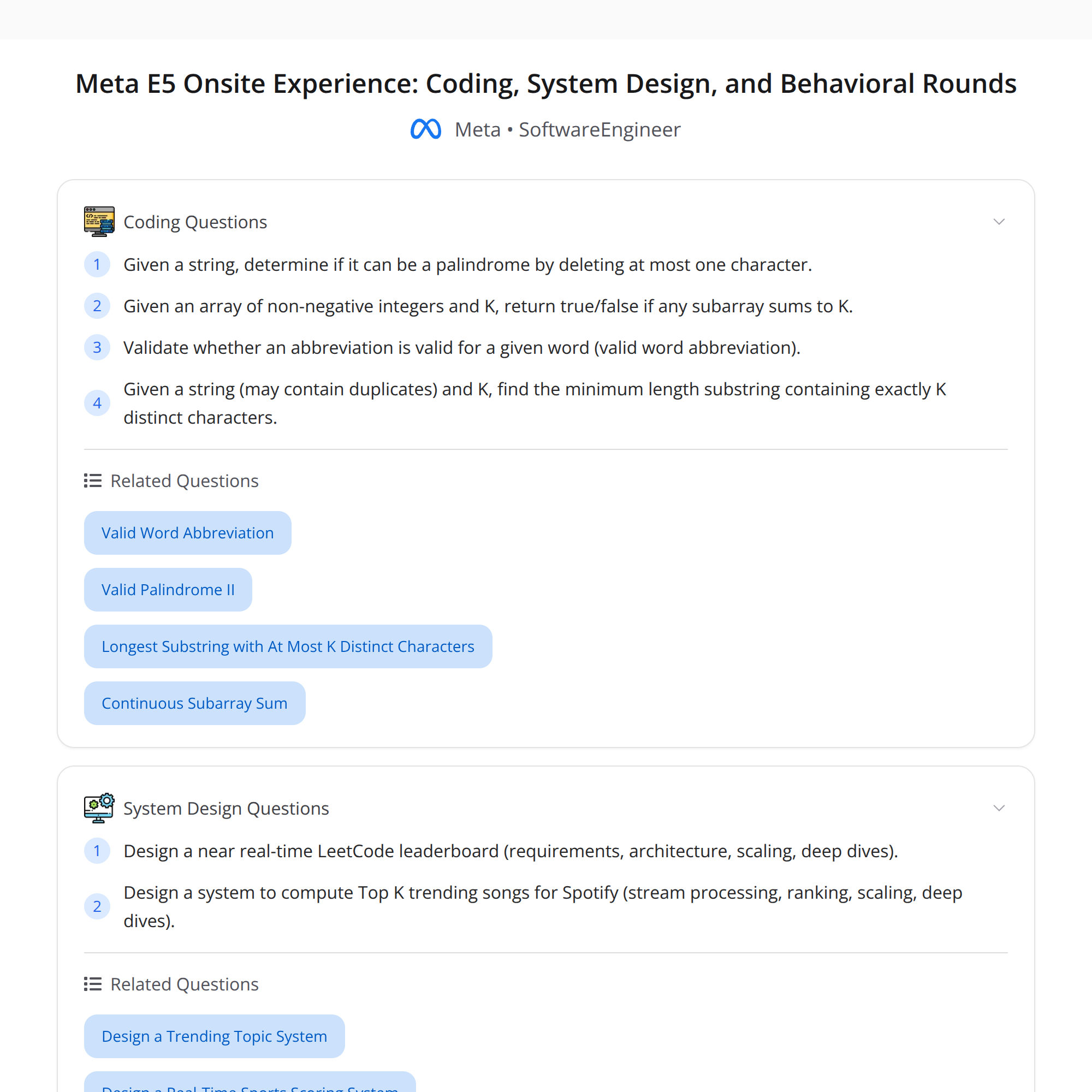

Coding rounds — what I got and how I approached them

Round 1 (≈35 minutes total)

Problems: 1) Palindrome with at most one deletion (Valid Palindrome II). 2) Subarray Sum = K (non-negative array).

What I did and why it worked:

Palindrome with one deletion

- Clarified allowed operations and example behavior on odd/even lengths.

- Approach: two-pointer check from both ends. When mismatch occurs, check both skip-left and skip-right cases with helper that checks palindrome in O(n). That yields O(n) time, O(1) space.

- Implementation tip: make the helper robust to indices and off-by-one errors. A hint from the interviewer helped catch an initial edge-case bug I introduced when advancing pointers.

Subarray Sum = K (non-negative)

- Clarified that numbers are non-negative. That lets you use the sliding window (two pointers) technique for O(n) time instead of prefix-sum+hashmap.

- Approach: expand right pointer, accumulate sum, then move left pointer while sum > K. If sum == K, return indices or true. Mentioned prefix-sum approach for the general case (negatives allowed) to show breadth.

Why the round went well

- I shared multiple approaches, chose the one that fit the constraints, and quickly switched when given hints.

- Dry-run after coding found a small pointer-handling bug which I fixed before finalizing.

Round 2 (≈40 minutes)

Problems: 1) Valid word abbreviation (checking whether an abbreviation matches a word). 2) Minimum-length substring with exactly K distinct characters.

What I did and why it worked:

Valid word abbreviation

- Clarified format: digits in abbreviation represent counts, leading zeros invalid, letters must match positionally.

- Approach: iterate two pointers over word and abbreviation, parse numbers into counts, advance the word pointer accordingly, and compare letters when present. O(n) time, O(1) space.

- Watch for edge cases: leading zeros (e.g., "01" invalid), end-of-string handling, and numeric overflow (large counts).

Min-length substring with exactly K distinct chars

- Approach: use two-pointer sliding window with a frequency map. Expand right pointer until you have >= K distinct chars, then try to shrink from left to remove extra characters while maintaining >= K distinct. Track the smallest window that has exactly K distinct.

- Important detail: when you reach > K distinct, shrink until distinct == K (or < K), then update min. Careful dry-running with strings of repeated chars helped reveal a bug I fixed during the dry run.

Why the round went well

- I articulated the invariants of the sliding-window and explained complexity and memory usage. Doing a careful dry-run exposed subtle off-by-one/frequency errors.

System design — two deep dives and trade-offs

Both designs emphasized near-real-time updates, scaling, and trade-offs between accuracy, latency, and cost.

Design A: Near real-time LeetCode-style leaderboard

Requirements (example):

- Show rankings of users by score with near-real-time updates.

- Support frequent score updates and high read QPS (browsing / contest pages).

- Provide paginated top-k and per-user rank queries.

- Support eventual consistency for non-critical pages, strong consistency for contest leaderboards.

High-level components:

- Event ingestion: clients publish score events to a durable queue (Kafka).

- Stream processor: real-time aggregation using a streaming engine (Flink/Storm) to compute incremental score updates per user.

- Aggregation & shards: partition by user-id; maintain per-shard top-k and partial rankings.

- Storage: OLAP store for historical results, fast key-value store (Redis) for current user scores and small leaderboards.

- Serving layer: cached leaderboard pages, per-user rank API that either computes rank from counters or queries a precomputed index.

- Consistency: use snapshot windows for contest leaderboards (stronger guarantees) or feed-through with low-latency eventual consistency for community boards.

Scaling & trade-offs:

- Latency vs accuracy: exact global rank requires global sorting—expensive. Use sharding + periodic global merge for stronger consistency windows.

- Approximate approaches: keep per-shard top-k and merge on read; for absolute accuracy use a central aggregator at longer intervals.

- Hot keys: popular users or contest pages need caching and maybe replicated in-memory copies.

- Failure modes & recovery: replay events from Kafka, idempotent updates, and tombstones for deletions.

When to choose what:

- If strong, exact rankings are required (e.g., contest final standings) favor batch global aggregation with careful snapshotting.

- For feeds and social leaderboards where small inconsistencies are okay, favor low-latency streaming and per-shard merging.

Design B: Spotify Top-K trending songs (near real-time streaming)

Requirements:

- Continuously compute trending/top-K songs over sliding windows (e.g., last hour, last 24 hours).

- Support millions of song events per second, regional top-K and global top-K.

- Provide low-latency updates and memory-efficient storage.

Key design ideas:

- Ingestion: event stream with plays, likes, or other interactions into Kafka.

- Windowing & aggregation: streaming engine performs windowed counts (tumbling/sliding windows). Use incremental aggregation and emit deltas.

- Heavy-hitter detection: use approximate algorithms (Count-Min Sketch or Space-Saving / Frequent algorithm) at the ingestion shard to keep memory low.

- Per-shard top-K: maintain a small heap of top candidates; periodically merge shard-level results into global top-K.

- Decay and weighting: to reflect recency, apply exponential decay or sliding windows.

- Serving: cache top results in Redis/ElastiCache; allow region-specific caches and a global merge for cross-region queries.

Scaling & trade-offs:

- Accuracy vs memory/latency: Count-Min Sketch trades deterministic accuracy for lower memory and faster updates. For exact results, maintain exact counters at higher cost.

- Merge complexity: merging approximate structures introduces merge-error; handle with conservative thresholds.

- State management: streaming state needs durable checkpoints; choose streaming engine that supports exactly-once semantics if correctness matters.

Operational concerns:

- Backpressure: partitioning and autoscaling consumers to avoid lag.

- Hot songs: heavy hitters cause skew—use consistent hashing or hierarchical aggregation to mitigate.

- Monitoring & alerting: track lag, error rates, estimation error, and traffic hotspots.

Behavioral — what to prepare and sample framings

The behavioral rounds focused on conflict resolution, a proud project, pivoting mid-execution, and weakness. Tips and short sample answers:

Conflict handling (STAR)

- Situation: disagreement on implementation approach between two eng leads.

- Task: choose a path that balances velocity and maintainability.

- Action: facilitated a short design spike with both approaches, quantified trade-offs (time, risk), and proposed a hybrid solution with a rollback plan.

- Result: team aligned, reduced rework, and shipped with monitoring in place.

Proud project

- Focus on impact, technical depth, and ownership. Briefly describe the problem, your contributions, metrics improved, and the outcome.

Pivoting mid-execution

- Explain a situation where requirements changed; describe how you reprioritized, communicated trade-offs, and adjusted scope while keeping stakeholders informed.

Weakness

- Pick a real but non-critical weakness, show steps you're taking to improve (courses, mentorship, small goals), and, if possible, show measurable progress.

Behavioral tips

- Use STAR and quantify outcomes.

- Emphasize learning and iteration.

- Be concise and honest.

Key takeaways and practical tips

- Clarify constraints upfront — it narrows the solution space and lets you pick the optimal approach quickly.

- Walk through examples and dry-run edge cases out loud; interviewers often probe from there.

- When designing systems, explicitly state requirements, non-functional constraints, and acceptable trade-offs.

- For near-real-time systems, discuss ingestion, stateful stream processing, sharding, approximate vs exact algorithms, and caching.

- Behavioral answers should be structured, metric-driven, and show growth.

Final words

The Meta E5 onsite rewarded clarity, incremental design, and careful dry runs. Share multiple approaches, pick the right one for the constraints, and be ready to discuss trade-offs and operational concerns at scale.

#SoftwareEngineering #SystemDesign #InterviewPrep