Meta Production Engineer Interview — Coding, OS & System Design Highlights

bugfree.ai is an advanced AI-powered platform designed to help software engineers master system design and behavioral interviews. Whether you’re preparing for your first interview or aiming to elevate your skills, bugfree.ai provides a robust toolkit tailored to your needs. Key Features:

- 150+ system design questions: Master challenges across all difficulty levels and problem types, including 30+ object-oriented design and 20+ machine learning design problems.

- Targeted practice: Sharpen your skills with focused exercises tailored to real-world interview scenarios.

- In-depth feedback: Get instant, detailed evaluations to refine your approach and level up your solutions.

- Expert guidance: Dive deep into walkthroughs of all system design solutions like design Twitter, TinyURL, and task schedulers.

- Learning materials: Access comprehensive guides, cheat sheets, and tutorials to deepen your understanding of system design concepts, from beginner to advanced.

- AI-powered mock interview: Practice in a realistic interview setting with AI-driven feedback to identify your strengths and areas for improvement.

bugfree.ai goes beyond traditional interview prep tools by combining a vast question library, detailed feedback, and interactive AI simulations. It’s the perfect platform to build confidence, hone your skills, and stand out in today’s competitive job market. Suitable for:

- New graduates looking to crack their first system design interview.

- Experienced engineers seeking advanced practice and fine-tuning of skills.

- Career changers transitioning into technical roles who need structured learning and preparation.

High-Score (Bugfree Users) Meta Production Engineer Interview — Coding + OS + System Design Highlights

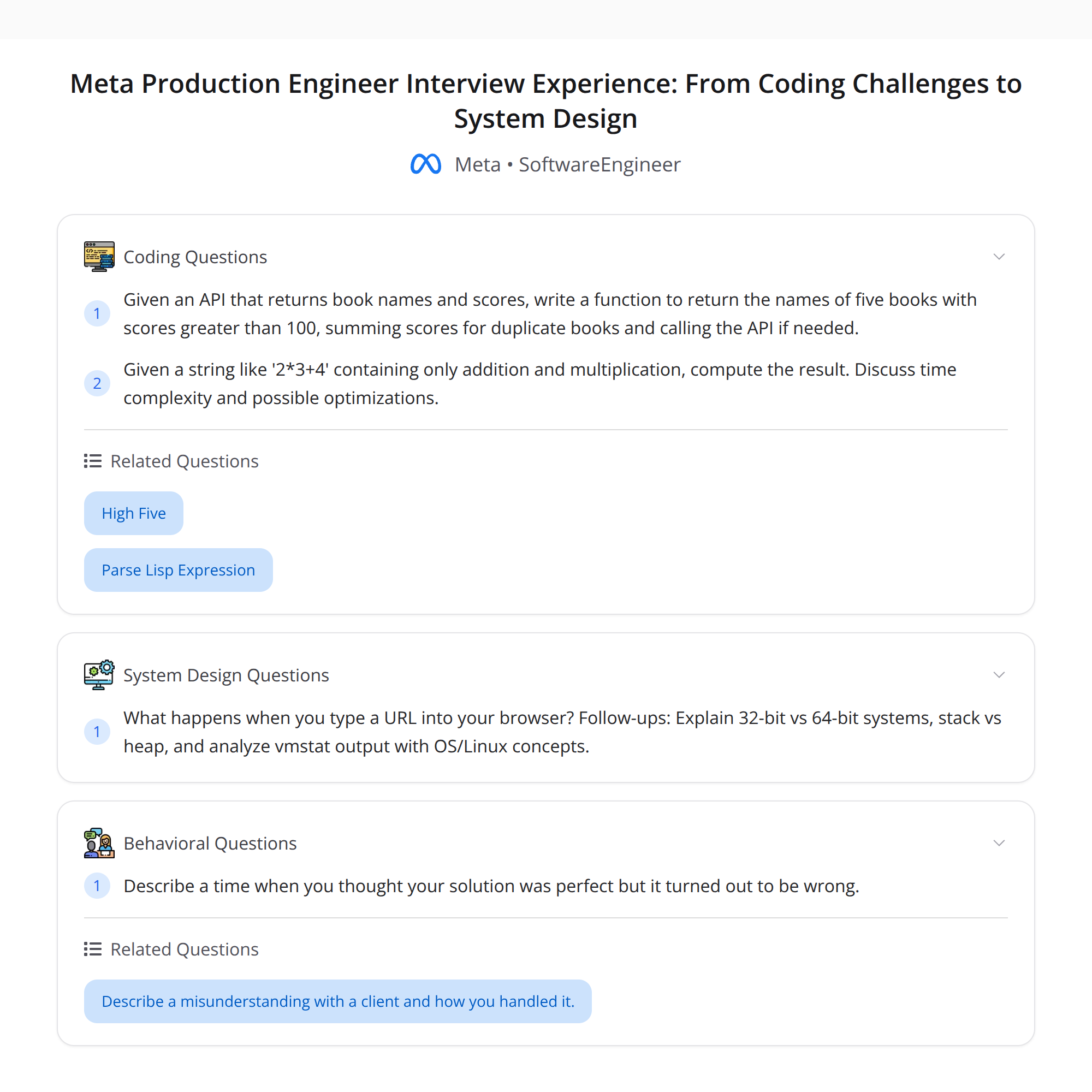

I recently compiled a detailed interview loop reported by Bugfree users for a Meta Production Engineer role. It was a thorough, real-world-focused loop that tested fundamentals end-to-end. The candidate was ultimately rejected, but the loop was still an excellent learning experience. Below I distill the flow, concrete topics, example problems, good approaches, and actionable tips.

Interview flow (what to expect)

- Recruiter reach-out

- Online assessment (OA): 20 multiple-choice questions

- Two phone screens (technical + behavioral)

- Virtual onsite: behavioral, coding, and system design rounds

Each stage tested practical understanding rather than trivia — expect deep, explainable answers.

Core topics emphasized

The loop repeatedly returned to fundamentals. Key themes:

- "What happens when you type a URL?" — full-stack, end-to-end flow

- 32-bit vs 64-bit architectures

- Stack vs heap (memory layout and consequences)

- Linux / OS basics (processes, paging, I/O)

- Tools and diagnostics (vmstat, paging/swapping analysis)

- Shell behavior and globbing (e.g., `ls -l foo*`)

Below are concise explanations and tips for each.

What happens when you type a URL?

A high-level sequence you should be able to narrate and where follow-up questions can go deeper:

- Browser parses the URL and checks local state: HTTP cache, service workers, cookies, and security policies such as HSTS (which forces an upgrade from http:// to https://)

- DNS resolution (browser cache → OS resolver → recursive resolver → authoritative)

- Establish transport: TCP 3-way handshake (SYN, SYN-ACK, ACK) and optionally TLS handshake (cert validation, keys)

- HTTP request: browser forms HTTP(S) request, includes headers, cookies, etc.

- Networking path: routing, CDNs, load balancers (edge), reverse proxies (e.g., nginx)

- Server handling: web server → application layer → service mesh / API gateway → backend services

- Backend processing: business logic, database queries, caches (Redis/Memcached)

- Response flows back through proxies/CDN; browser renders and executes resources (JS, CSS), may make additional XHR/fetch calls

Possible follow-ups: caching headers, CDN cache invalidation, TCP vs QUIC, connection pooling, backpressure, observability (logs, traces, metrics).
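To make the DNS, TCP, TLS, and HTTP steps concrete, here is a minimal sketch using only Python's standard library (`socket`, `ssl`, `urllib.parse`). It is an illustration, not how a browser works: it skips caches, HSTS, redirects, and response parsing, and the helper names `resolve`, `build_request`, and `fetch` are hypothetical.

```python
import socket
import ssl
from urllib.parse import urlsplit


def resolve(host: str, port: int) -> list[str]:
    """DNS step: ask the OS resolver (which may consult its cache, /etc/hosts,
    and a recursive resolver) for the host's addresses."""
    infos = socket.getaddrinfo(host, port, type=socket.SOCK_STREAM)
    return [sockaddr[0] for _family, _type, _proto, _canon, sockaddr in infos]


def build_request(host_header: str, path: str) -> bytes:
    """HTTP step: a minimal HTTP/1.1 GET (the Host header is mandatory)."""
    return (
        f"GET {path} HTTP/1.1\r\n"
        f"Host: {host_header}\r\n"
        "Connection: close\r\n"
        "\r\n"
    ).encode("ascii")


def _exchange(sock: socket.socket, host_header: str, path: str) -> bytes:
    sock.sendall(build_request(host_header, path))
    chunks = []
    while True:
        data = sock.recv(4096)
        if not data:  # server closed the connection (we asked for Connection: close)
            break
        chunks.append(data)
    return b"".join(chunks)


def fetch(url: str, timeout: float = 5.0) -> bytes:
    parts = urlsplit(url)
    host = parts.hostname
    port = parts.port or (443 if parts.scheme == "https" else 80)
    host_header = host if parts.port is None else f"{host}:{parts.port}"
    path = (parts.path or "/") + (f"?{parts.query}" if parts.query else "")
    address = resolve(host, port)[0]
    # Transport step: connect() performs the TCP three-way handshake.
    with socket.create_connection((address, port), timeout=timeout) as raw:
        if parts.scheme != "https":
            return _exchange(raw, host_header, path)
        # TLS step: handshake plus certificate validation against the host name.
        context = ssl.create_default_context()
        with context.wrap_socket(raw, server_hostname=host) as tls:
            return _exchange(tls, host_header, path)
```

Calling `fetch("https://example.com/")` returns the raw response bytes (status line, headers, body); everything after that (CDN, load balancer, backend) is invisible to the client, which is exactly where interviewers tend to probe next.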

32-bit vs 64-bit

Key points to mention:

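- Address space: a 32-bit pointer caps each process at 4 GiB (2^32 bytes) of virtual address space, part of which the OS typically reserves for the kernel; 64-bit raises the ceiling enormously (x86-64 commonly implements 48-bit virtual addresses, i.e. 256 TiB)

- Pointer size: pointers double in size on 64-bit (affects memory footprint and data layout)

- Performance: 64-bit can be faster for wide-integer arithmetic and, on x86-64, benefits from extra registers, but larger pointers inflate pointer-heavy data structures and can hurt cache usage

- Data model differences (ILP32 vs LP64) affect long and pointer sizes (64-bit Windows uses LLP64, where long stays 32-bit); watch ABI and structure alignment

- Security: a larger address space gives ASLR more randomization entropy, making addresses much harder to guess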
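A quick way to see the data model of the interpreter you are running is Python's `ctypes`, which reports C type sizes for the current platform. This is a sketch: `data_model`, `address_space_bytes`, and `Tagged` are illustrative names, and the classification only distinguishes the three common models (ILP32, LP64, LLP64).

```python
import ctypes
import struct


def data_model() -> str:
    """Classify the C data model from the sizes of long and pointers."""
    ptr = ctypes.sizeof(ctypes.c_void_p)
    long_ = ctypes.sizeof(ctypes.c_long)
    if ptr == 4:
        return "ILP32"   # int, long, pointer all 32-bit
    if long_ == 8:
        return "LP64"    # Linux/macOS 64-bit: long and pointer are 64-bit
    return "LLP64"       # 64-bit Windows: long stays 32-bit, pointers are 64-bit


def address_space_bytes() -> int:
    """Theoretical virtual address space implied by the pointer width
    (real 64-bit CPUs implement fewer address bits)."""
    return 2 ** (8 * struct.calcsize("P"))


class Tagged(ctypes.Structure):
    """A char followed by a pointer: alignment padding makes this 8 bytes
    under ILP32 and 16 bytes under LP64, so the struct doubles in size."""
    _fields_ = [("tag", ctypes.c_char), ("ptr", ctypes.c_void_p)]


print(data_model(), ctypes.sizeof(ctypes.c_void_p), ctypes.sizeof(Tagged))
```

On x86-64 Linux this reports LP64 with 8-byte pointers and a 16-byte `Tagged`, which is the padding cost interviewers mean when they ask about structure layout.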

Stack vs heap

Be explicit about differences and trade-offs:

- Allocation: stack allocation is automatic (function call frames); heap allocation is dynamic (malloc/new, released manually, by a garbage collector, or by custom allocators)

- Lifetime: stack lifetime tied to scope; heap persists until freed or GCed

- Size & growth: stack typically limited per-thread (risk of stack overflow); heap is larger but can fragment

- Access patterns: stack allocations are contiguous and cache-friendly; heap allocations can be scattered

- Thread-safety: each thread has its own stack; the heap is shared, so allocators must synchronize (many use per-thread caches or arenas to reduce contention)
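The stack-size limit is easy to observe from Python, with one caveat: CPython's `RecursionError` comes from an interpreter recursion limit (`sys.getrecursionlimit()`) that protects the native stack, not directly from the OS stack size. The sketch contrasts recursion, which uses one call frame per level, with an explicit stack kept in a heap-allocated list; the function names are illustrative.

```python
import sys


def depth_recursive(n: int) -> int:
    """One call frame per level: depth is bounded by the call-stack limit."""
    return 0 if n == 0 else 1 + depth_recursive(n - 1)


def depth_iterative(n: int) -> int:
    """Same computation with an explicit stack stored in a heap-allocated list,
    so depth is bounded only by available memory."""
    pending = [n]
    depth = 0
    while pending:
        k = pending.pop()
        if k > 0:
            depth += 1
            pending.append(k - 1)
    return depth


limit = sys.getrecursionlimit()
print(depth_iterative(10 * limit))  # fine: the list grows on the heap
try:
    depth_recursive(10 * limit)
except RecursionError:
    print("RecursionError: call stack depth limit reached")
```

The same trade-off appears in C or Go: converting deep recursion into an explicit heap-backed stack is the standard fix for stack overflows on unbounded inputs.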

Linux / OS basics

Discuss process lifecycle, system calls, signals, and scheduling:

- Processes vs threads, context switches, preemption

- System calls crossing user↔kernel boundary (e.g., read, write, mmap)

- File descriptors, pipes, sockets, and how blocking vs non-blocking I/O works

- Virtual memory: paging, page faults, copy-on-write
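Several of these ideas (fork, file descriptors, pipes, blocking vs non-blocking reads) can be exercised through Python's thin `os` wrappers around the corresponding system calls. This is a POSIX-only sketch; `pipe_from_child` and `try_nonblocking_read` are hypothetical helper names.

```python
import os


def pipe_from_child(message: bytes) -> bytes:
    """fork() a child that writes into a pipe; the parent reads until EOF."""
    read_fd, write_fd = os.pipe()            # pipe(2): a pair of file descriptors
    pid = os.fork()                          # fork(2): child inherits both fds; memory is copy-on-write
    if pid == 0:                             # --- child process ---
        code = 1
        try:
            os.close(read_fd)
            os.write(write_fd, message)      # write(2)
            os.close(write_fd)
            code = 0
        finally:
            os._exit(code)                   # leave without running the parent's cleanup
    # --- parent process ---
    os.close(write_fd)                       # otherwise read() would never see EOF
    chunks = []
    while chunk := os.read(read_fd, 4096):   # read(2) blocks until data or EOF
        chunks.append(chunk)
    os.close(read_fd)
    _, status = os.waitpid(pid, 0)           # reap the child so it does not linger as a zombie
    if os.waitstatus_to_exitcode(status) != 0:
        raise RuntimeError("child failed")
    return b"".join(chunks)


def try_nonblocking_read() -> str:
    """On a non-blocking fd, read() on an empty pipe fails with EAGAIN
    (raised in Python as BlockingIOError) instead of putting the caller to sleep."""
    read_fd, write_fd = os.pipe()
    os.set_blocking(read_fd, False)
    try:
        os.read(read_fd, 1)
        return "got data"
    except BlockingIOError:
        return "would block"
    finally:
        os.close(read_fd)
        os.close(write_fd)
```

Running `strace -f` on a script that calls these shows the `pipe2`, `clone`/`fork`, `read`, `write`, and `wait4` system calls crossing into the kernel.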

vmstat, paging and swapping

When presented vmstat output, focus on:

- `si`/`so` (swap in/out): non-zero values indicate swapping activity

- `wa` (iowait): high values mean processes are waiting on disk I/O

- `id` (idle) and `us`/`sy` (user/system CPU): show how CPU time is split

- `free`, `buff`, `cache` (also reported by tools like `free` and `top`): how much RAM is available; page cache is reclaimable, so `free` alone understates it

Interpretation: swapping suggests memory pressure; investigate which processes allocate heavily, check OOM killer logs, and consider tuning swappiness or adding memory.
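The same triage can be scripted. Below is a minimal sketch that parses `vmstat` text output into rows keyed by the column headers and flags swapping, high iowait, and low idle time. The thresholds and the `SAMPLE` output are made-up illustrations, not data from the interview; in practice you would compare against the host's own baseline.

```python
def parse_vmstat(text: str) -> list[dict[str, int]]:
    """Turn `vmstat` output into one dict per sample row.
    Group-title lines ("procs ---memory---") are skipped; the column header
    line (starting with "r") names the fields for the rows that follow."""
    rows, header = [], None
    for line in text.splitlines():
        fields = line.split()
        if not fields or fields[0] == "procs":
            continue
        if fields[0] == "r":
            header = fields
            continue
        if header is not None:
            rows.append(dict(zip(header, map(int, fields))))
    return rows


def diagnose(row: dict[str, int], iowait_pct: int = 20, min_idle_pct: int = 10) -> list[str]:
    """Hypothetical thresholds; tune them to the workload."""
    findings = []
    if row.get("si", 0) or row.get("so", 0):
        findings.append("swapping: memory pressure, find the heavy allocators")
    if row.get("wa", 0) >= iowait_pct:
        findings.append("high iowait: processes blocked on disk I/O")
    if row.get("id", 100) < min_idle_pct:
        findings.append("CPU saturated: compare us vs sy")
    return findings


SAMPLE = """\
procs -----------memory---------- ---swap-- -----io---- -system-- ------cpu-----
 r  b   swpd   free   buff  cache   si   so    bi    bo   in   cs us sy id wa st
 1  0      0 812344  52120 904512    0    0     4    12  210  380  3  1 95  1  0
 2  3 524288  20480   1024  65536  812  960  4100  3900 1500 2600 10  8 30 52  0
"""

for sample in parse_vmstat(SAMPLE):
    print(diagnose(sample))
```

The first sample is healthy; the second shows the classic pattern of memory pressure turning into disk I/O, since pages swapped out and back in (`si`/`so`) drive up `bi`/`bo` and `wa` together.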

Shell patterns: ls -l foo*

Explain globbing vs regex (in a glob, `*` matches any run of characters; in a regex, `*` repeats the preceding token) and how the shell treats it:

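- Globbing is done by the shell before the command runs: `foo*` expands to the matching filenames in the current directory

- Behavior when nothing matches depends on the shell: bash (like POSIX sh) passes the pattern through literally unless `nullglob` (expand to nothing) or `failglob` (report an error) is set, while zsh aborts with "no matches found" by default

- Quoting prevents globbing: `ls -l 'foo*'` passes the literal pattern to `ls`, which then looks for a file actually named `foo*`

- `ls -l foo*` lists details (`-l`) for each filename matching the glob; a matching directory has its contents listed unless `-d` is added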

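Good to mention quoting rules and the security implication of expanding globs over untrusted filenames: a file named `-rf` or `--help` can be parsed as an option, so prefer `./*` or a `--` end-of-options marker.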
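You can watch the shell do the expansion by asking `/bin/sh` to print its arguments, and compare with Python's `glob` module, which implements the same shell-style matching. A sketch: `shell_args` is a hypothetical helper, and because it splices its argument into a shell command string it is only safe with trusted input.

```python
import glob
import os
import subprocess
import tempfile


def shell_args(arg_text: str, cwd: str) -> list[str]:
    """Return the argument words /bin/sh produces for `arg_text` after globbing.
    Demo only: arg_text is interpreted by the shell, so never pass untrusted input."""
    result = subprocess.run(
        ["sh", "-c", f"printf '%s\\n' {arg_text}"],
        cwd=cwd, capture_output=True, text=True, check=True,
    )
    return result.stdout.splitlines()


with tempfile.TemporaryDirectory() as d:
    for name in ("foo1", "foo2.txt", "bar"):
        open(os.path.join(d, name), "w").close()
    print(shell_args("foo*", d))    # shell expands the glob: ['foo1', 'foo2.txt']
    print(shell_args("'foo*'", d))  # quoted: the literal ['foo*'] reaches the command
    print(shell_args("baz*", d))    # no match in POSIX sh: pattern passed through, ['baz*']
    pattern = os.path.join(glob.escape(d), "baz*")
    print(glob.glob(pattern))       # Python's glob returns [] on no match (like nullglob)
```

The third case is why `ls -l baz*` in bash prints "No such file or directory" for `baz*`: `ls` received the unexpanded pattern as a filename.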

Coding problems reviewed

Two representative coding questions surfaced in this loop.

1) API returns books and scores: aggregate duplicates and stop at 5 high-scoring books

Problem outline (paraphrased):

- An API returns book records (title/id and score). The API may return duplicates across calls. Keep calling the API until you have 5 unique books with score > 100. Aggregate duplicates (keep the best score per unique book) and handle pagination/retries.

Solution highlights and edge cases:

- Maintain a map/dictionary: book_id → best_score

- Each API response: for each returned book, update map[book_id] = max(map.get(book_id, -inf), score)

- After processing a response, count how many books in the map have score > 100

- Stop when count >= 5

- Handle API quirks: duplicates within a response, network failures, rate limits, and pagination

- Respect termination: add a max-call limit or timeout to avoid infinite loops if condition never met

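A runnable Python version of the pseudocode, assuming a hypothetical `call_api(page_token)` whose response has `books` (items with `id` and `score`), `has_more`, and `next_token`:

```python
import math
import time

MAX_CALLS = 100   # hard cap so the loop terminates even if the goal is never met
TARGET = 5        # number of distinct high-scoring books required
THRESHOLD = 100   # "high-scoring" means score > THRESHOLD


def collect_top_books(call_api, backoff_s=1.0):
    """call_api is a hypothetical client; see the assumptions above."""
    best = {}          # book_id -> best score seen so far (dedupes across calls)
    high_count = 0     # distinct books currently above THRESHOLD
    page_token = None
    for _ in range(MAX_CALLS):
        resp = call_api(page_token)
        for book in resp.books:
            old = best.get(book.id, -math.inf)
            if book.score > old:
                best[book.id] = book.score
                if old <= THRESHOLD < book.score:
                    high_count += 1   # this book just crossed the threshold
        if high_count >= TARGET:
            break
        if resp.has_more:
            page_token = resp.next_token
        else:
            page_token = None         # pages exhausted: restart from the first page
            time.sleep(backoff_s)     # back off before polling again
    high = [(book_id, s) for book_id, s in best.items() if s > THRESHOLD]
    high.sort(key=lambda item: item[1], reverse=True)
    return high[:TARGET]              # top 5 (fewer if MAX_CALLS ran out)
```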

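Complexity: O(N) time over the N records processed, provided the count of books above 100 is maintained incrementally rather than recomputed from the whole map after every call; returning the top five adds a sort over the qualifying books. Memory is proportional to the number of unique books seen.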

Interview tips: clarify whether duplicates are identical IDs, what to do with ties, whether to return the five highest or any five meeting >100, and how to handle API pagination and errors.

2) String expression evaluator: only + and * (example: `2*3+4`)

Problem: Evaluate an expression string containing only non-negative integers and the operators + and *, respecting operator precedence (`*` before `+`). No parentheses.

Approach options:

- Two-pass: split on + into terms, then evaluate each term by multiplying its factors

- One-pass with running accumulation: scan left to right, maintaining the current product and a running sum

One-pass example (linear time, constant extra space):

- Initialize sum = 0, curProd = 1, num = 0, lastOp = '*'

- Parse characters; when you see a digit, accumulate num = num * 10 + digit

- When you encounter an operator or the end of the string:

- If lastOp == '*': curProd = curProd * num

- If lastOp == '+': sum += curProd; curProd = num

- Update lastOp to current operator; reset num = 0

- After loop, sum += curProd

This respects precedence without building a full AST.

Edge cases: large integers, whitespace handling, invalid tokens — ask clarifying questions.
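A direct Python translation of the one-pass approach, as a sketch: it assumes the input contains only digits, `+`, `*`, and whitespace, and raises `ValueError` for anything else (one reasonable answer to the clarifying questions, not the only one). Python's arbitrary-precision `int` sidesteps overflow; in a fixed-width language you would need to discuss it.

```python
def evaluate(expr: str) -> int:
    """Evaluate non-negative integers joined by + and *, with * binding tighter.
    Whitespace is skipped (note: this means "1 2" reads as 12)."""
    total, cur_prod, num, last_op = 0, 1, 0, "*"
    have_num = False
    for ch in expr + "+":               # a trailing "+" flushes the final term
        if ch.isspace():
            continue
        if "0" <= ch <= "9":
            num = num * 10 + (ord(ch) - ord("0"))
            have_num = True
        elif ch in "+*":
            if not have_num:
                raise ValueError(f"expected a number before {ch!r} in {expr!r}")
            if last_op == "*":
                cur_prod *= num         # extend the current product term
            else:                       # last_op == "+": close the previous term
                total += cur_prod
                cur_prod = num
            last_op, num, have_num = ch, 0, False
        else:
            raise ValueError(f"unexpected character {ch!r} in {expr!r}")
    return total + cur_prod
```

For example, `evaluate("2*3+4")` returns 10 and `evaluate("1+2*3+4*5")` returns 27; inputs such as `"2+"` or `"2**3"` raise `ValueError` because an operator arrives without a preceding number.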

Onsite system-design / diagnostic highlights

- Expect vmstat and system metrics questions: demonstrate how you reason from numbers to root cause

- Paging and swapping: explain triggers, consequences (latency spikes), and mitigations (add RAM, tune swappiness, optimize memory usage)

- Service design questions often emphasize observability, capacity planning, and graceful degradation
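For the paging and swapping discussion, Linux exposes the raw numbers behind `free` and `vmstat` in `/proc/meminfo`, and the current swappiness in `/proc/sys/vm/swappiness`. A Linux-only sketch with hypothetical helper names; `MemAvailable` (the kernel's estimate of memory usable without swapping) is usually a better pressure signal than `MemFree`, because page cache is reclaimable.

```python
from pathlib import Path


def parse_meminfo(text: str) -> dict[str, int]:
    """Parse lines like 'MemTotal:  16307588 kB' into {'MemTotal': 16307588}."""
    info = {}
    for line in text.splitlines():
        key, _, rest = line.partition(":")
        fields = rest.split()
        if fields:
            info[key.strip()] = int(fields[0])  # kB for most keys; HugePages_* are counts
    return info


def memory_pressure(info: dict[str, int]) -> dict[str, float]:
    total = info["MemTotal"]
    available = info.get("MemAvailable", info.get("MemFree", 0))
    swap_used = info.get("SwapTotal", 0) - info.get("SwapFree", 0)
    return {
        "available_pct": round(100 * available / total, 1),
        "swap_used_kb": swap_used,
    }


def read_live() -> tuple[dict[str, float], int]:
    info = parse_meminfo(Path("/proc/meminfo").read_text())
    swappiness = int(Path("/proc/sys/vm/swappiness").read_text())
    return memory_pressure(info), swappiness
```

Low `available_pct` together with growing `swap_used_kb` (and non-zero `si`/`so` in vmstat) points to genuine memory pressure; swap used with no ongoing swap traffic is often just cold pages that were paged out long ago.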

Behavioral and final outcome

- Behavioral rounds focused on past impact, incident postmortems, and cross-team collaboration

- Outcome reported: rejected. But the candidate got a high-value learning experience — the loop surfaced concrete gaps and reinforced fundamentals.

Practical tips and interview strategy

- Talk aloud: communicate assumptions and trade-offs. Interviewers value thought process over perfect code.

- Ask clarifying questions immediately: input constraints, expected edge-case behavior, and failure policies

- Focus on correctness first; then discuss complexity, trade-offs, and hardening (timeouts, retries, instrumentation)

- For OS questions, narrate the end-to-end flow and be prepared to dive into any step

- In coding rounds, implement a correct, readable solution; optimize if time permits

Summary

This Meta Production Engineer loop favoured deep, practical fundamentals across networking, OS, and systems design, combined with focused coding problems. Even with a rejection, the experience is a solid template for what to prepare: end-to-end system reasoning, OS internals, diagnostics (vmstat), shell semantics, and robust problem-solving for API/data-aggregation and expression evaluation tasks.

Good luck preparing: master the fundamentals, practice explaining common end-to-end flows out loud, and always test your solutions against edge cases.