E-commerce System Design: What Interviewers Expect You to Nail

bugfree.ai is an advanced AI-powered platform designed to help software engineers master system design and behavioral interviews. Whether you’re preparing for your first interview or aiming to elevate your skills, bugfree.ai provides a robust toolkit tailored to your needs. Key Features:

150+ system design questions: Master challenges across all difficulty levels and problem types, including 30+ object-oriented design and 20+ machine learning design problems. Targeted practice: Sharpen your skills with focused exercises tailored to real-world interview scenarios. In-depth feedback: Get instant, detailed evaluations to refine your approach and level up your solutions. Expert guidance: Dive deep into walkthroughs of all system design solutions like design Twitter, TinyURL, and task schedulers. Learning materials: Access comprehensive guides, cheat sheets, and tutorials to deepen your understanding of system design concepts, from beginner to advanced. AI-powered mock interview: Practice in a realistic interview setting with AI-driven feedback to identify your strengths and areas for improvement.

bugfree.ai goes beyond traditional interview prep tools by combining a vast question library, detailed feedback, and interactive AI simulations. It’s the perfect platform to build confidence, hone your skills, and stand out in today’s competitive job market. Suitable for:

New graduates looking to crack their first system design interview. Experienced engineers seeking advanced practice and fine-tuning of skills. Career changers transitioning into technical roles with a need for structured learning and preparation.

E-commerce System Design: What Interviewers Expect You to Nail

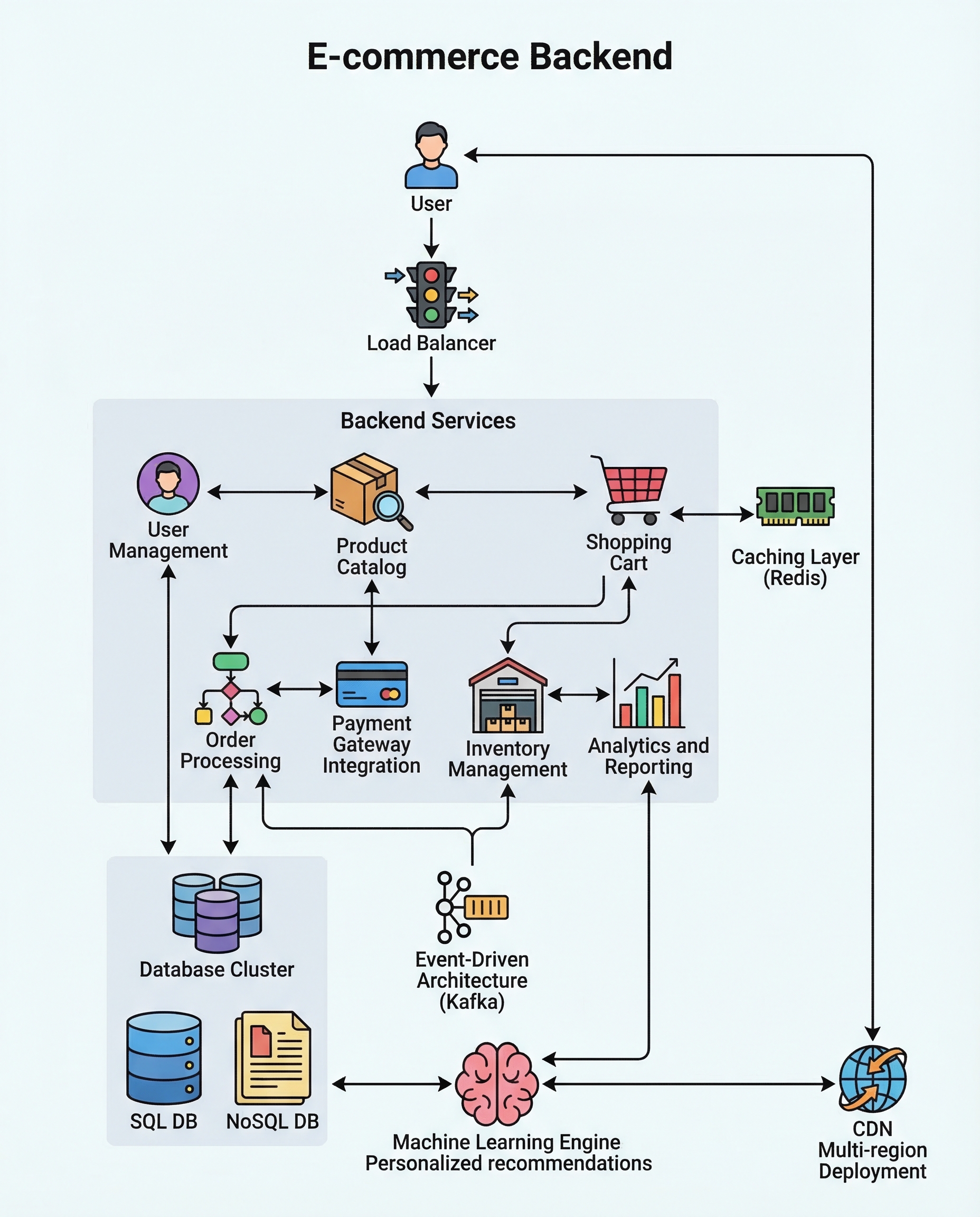

In backend system design interviews for e-commerce roles, interviewers expect you to cover both the core building blocks and how your design holds up under real-world constraints. Cover the essentials clearly, then defend trade-offs for traffic spikes, concurrency, low latency, and global users.

Below is a concise, interview-friendly checklist with explanations and tradeoffs you should be ready to discuss.

Core building blocks (explain each clearly)

User authentication & authorization

- Options: JWT, OAuth2, session tokens. Explain where to store tokens (cookies vs local storage), refresh tokens, token revocation, and secure practices (HTTPS, same-site cookies).

- Talk about role-based access, rate limiting per user, and session invalidation.

Product catalog & search indexing

- Primary datastore for product data (relational DB or document store), and a search index (Elasticsearch/OpenSearch) for full-text, filters, and faceted search.

- Discuss index update strategy: sync vs async, near-real-time indexing, and stale-index tradeoffs.

Cart persistence

- Short-lived carts: store in Redis for speed; persistent carts: back to a DB keyed by user.

- Explain session vs user-linked carts, expiration, and how to merge guest carts after login.

Checkout & order lifecycle

- Order states: CREATED → RESERVED → PAID → FULFILLED → CANCELLED. Show how events or state machines manage transitions.

- Idempotency keys for order creation and payment callbacks to avoid duplicate orders.

Payment gateway integration

- Use external processors (Stripe, Adyen). Discuss synchronous vs asynchronous flows, webhooks, secure storage of payment references, and handling failed payments/retries.

Inventory control (preventing oversell)

- Strategies: pessimistic locking (DB locks), optimistic locking (versioning), or reservation/hold model (reserve stock on cart/checkout for a short TTL).

- Outline latency and throughput tradeoffs: locks are safe but hurt throughput; reservation introduces complexity but scales better.

Defend your design under real scenarios

Traffic spikes / sudden load (Black Friday)

- Autoscale application tiers; put a load balancer in front. Use a CDN for static content.

- Cache aggressively with Redis or an LRU cache; use cache-aside pattern and tune TTLs. Consider rate limiting and graceful degradation (read-only mode, feature toggles).

- Partition/shard large datasets (user or product shards) and use database replicas for read traffic.

Real-time stock accuracy

- Event-driven architecture: publish events (order.created, order.cancelled) to a durable queue (Kafka) and have inventory services consume and update stock.

- For hot items, use a reservation queue or distributed counters in Redis with atomic decrement and fallback to strong-consistent DB checks.

- Be explicit about eventual consistency vs strong consistency and where each is acceptable (e.g., cart listing can be eventually consistent; final checkout needs stronger guarantees).

Recommendations & personalization

- Offline batch models (recommendation pipelines, feature store) + online serving (low-latency caches in Redis or specialized stores like Cassandra/Scylla).

- Use embeddings or collaborative filtering; keep model inference fast—precompute top-k lists for products and personalize further at request time.

Multi-region latency & availability

- Use a global CDN for assets and edge caches for read-heavy APIs.

- Replicate read data regionally and funnel writes to a primary region or use multi-master with conflict resolution. Discuss tradeoffs: cross-region consistency vs write latency.

- Consider regulatory constraints and data locality.

Explain tradeoffs clearly (what to say in interviews)

- Consistency vs availability: identify which operations need strong consistency (inventory during checkout) and which can be eventual (product recommendations).

- Latency vs correctness: caching reduces latency but risks staleness—describe invalidation or TTL strategies.

- Complexity vs cost: microservices improve independence and scaling, but add operational cost (deployments, monitoring, distributed tracing).

- Simplicity vs extensibility: prefer a simple, correct approach for core flows and mention how it can evolve (e.g., add reservation service later).

Practical interview checklist (quick talking points)

- Start with a high-level diagram and dataflow.

- Define critical APIs and data models (Product, Cart, Order, Inventory).

- Explain data stores and why: RDBMS vs NoSQL vs search index vs cache.

- Describe concurrency control for inventory and idempotency for payments.

- Discuss scalability: autoscaling, caching, sharding, and queues.

- Cover observability: metrics, logs, tracing, alerting, and SLOs.

- Mention security: encryption in transit/at rest, PCI compliance for payments, input validation.

Closing / How to present this in interviews

Start from the user journey (browse → add to cart → checkout) and expand into components. Prioritize correctness for checkout and inventory, then discuss scaling and resilience. When asked "why?", always state tradeoffs and a fallback plan.

Use this checklist to structure answers: clear architecture, concise rationale, and practical tradeoffs. That approach shows you understand both design and operational realities.