Data Interview Must-Know: Precision vs Recall vs F1 (Stop Mixing Them Up)

bugfree.ai is an advanced AI-powered platform designed to help software engineers master system design and behavioral interviews. Whether you’re preparing for your first interview or aiming to elevate your skills, bugfree.ai provides a robust toolkit tailored to your needs. Key Features:

150+ system design questions: Master challenges across all difficulty levels and problem types, including 30+ object-oriented design and 20+ machine learning design problems. Targeted practice: Sharpen your skills with focused exercises tailored to real-world interview scenarios. In-depth feedback: Get instant, detailed evaluations to refine your approach and level up your solutions. Expert guidance: Dive deep into walkthroughs of all system design solutions like design Twitter, TinyURL, and task schedulers. Learning materials: Access comprehensive guides, cheat sheets, and tutorials to deepen your understanding of system design concepts, from beginner to advanced. AI-powered mock interview: Practice in a realistic interview setting with AI-driven feedback to identify your strengths and areas for improvement.

bugfree.ai goes beyond traditional interview prep tools by combining a vast question library, detailed feedback, and interactive AI simulations. It’s the perfect platform to build confidence, hone your skills, and stand out in today’s competitive job market. Suitable for:

New graduates looking to crack their first system design interview. Experienced engineers seeking advanced practice and fine-tuning of skills. Career changers transitioning into technical roles with a need for structured learning and preparation.

Precision vs Recall vs F1 — Quick, interview-ready explanations

When interviewers ask about evaluation metrics, they want clear definitions, when to use each, and a short justification tied to business cost. Here are compact explanations you can say confidently.

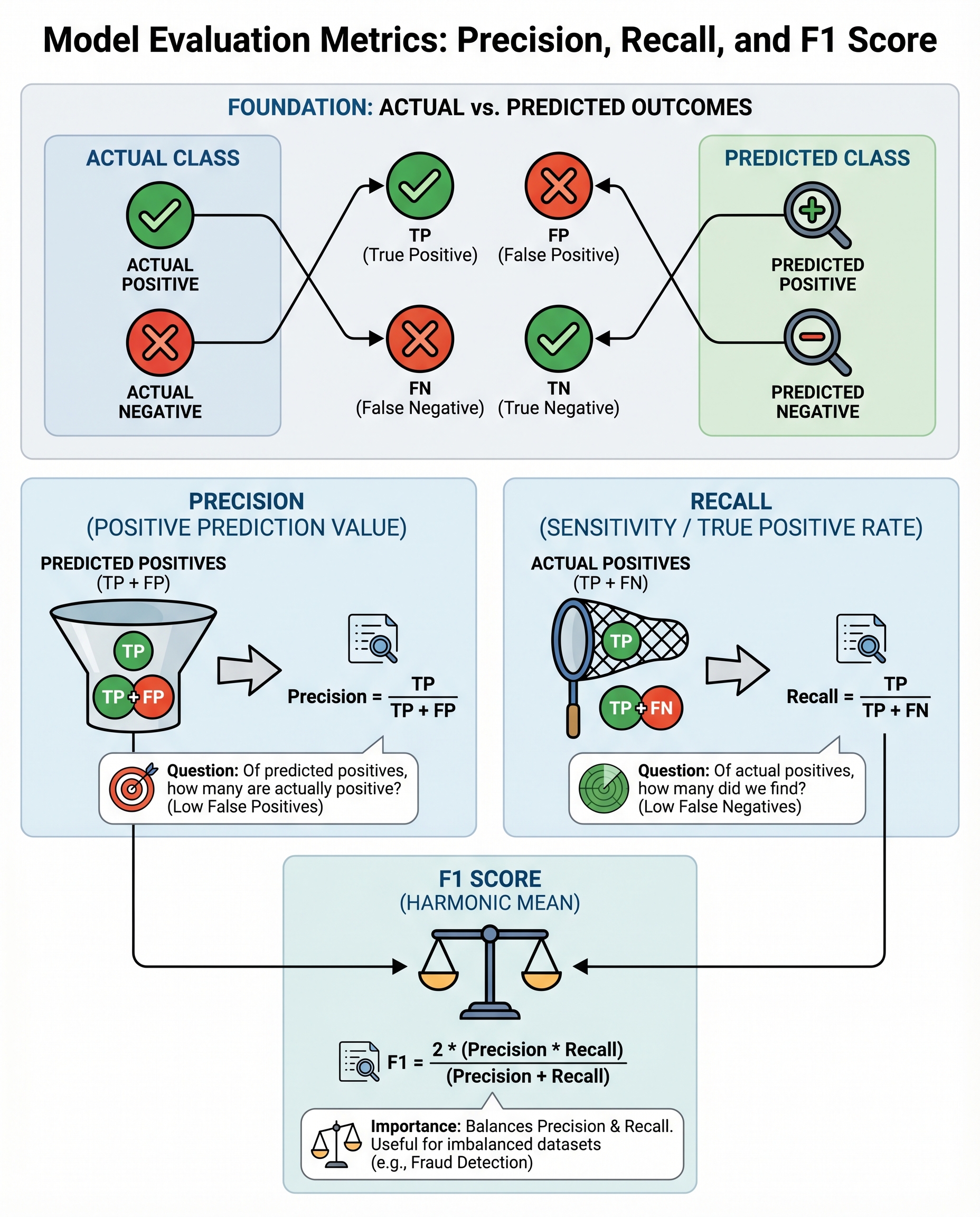

Confusion matrix reminder

| Predicted Positive | Predicted Negative | |

| Actual Positive | TP | FN |

| Actual Negative | FP | TN |

- TP = true positives, FP = false positives, FN = false negatives, TN = true negatives.

Precision

- Formula:

Precision = TP / (TP + FP) - Meaning: Of the examples predicted positive, what fraction were actually positive?

- Use when false positives are costly: e.g., marking good email as spam, recommending irrelevant products, or flagging innocent users as fraudulent.

- Interview line: “Optimize precision when you must avoid false alarms.”

Recall (a.k.a. Sensitivity)

- Formula:

Recall = TP / (TP + FN) - Meaning: Of all actual positives, how many did the model catch?

- Use when false negatives are costly: e.g., missing a disease, failing to detect a defect, or not flagging a dangerous event.

- Interview line: “Optimize recall when missing positives has a high cost.”

F1 score

- Formula:

F1 = 2 * (Precision * Recall) / (Precision + Recall) - Meaning: The harmonic mean of precision and recall — balances both.

- Use when you need a single metric and care about both precision and recall (common with imbalanced classes like fraud detection).

- Interview line: “Use F1 for a balanced view when both error types matter and classes are imbalanced.”

Tip: if you care more about recall than precision, use F-beta (beta > 1) to weight recall higher; if precision matters more, use beta < 1.

Trade-offs & practical advice

- Increasing model threshold → usually increases precision, decreases recall. Lowering threshold → increases recall, decreases precision.

- Always align the metric with business costs (false positive vs false negative consequences). Explain the cost trade-off in the interview.

- For highly imbalanced datasets, prefer Precision-Recall curves over ROC curves — PR curves are more informative when positives are rare.

- When reporting, show the confusion matrix, chosen operating point (threshold), and why that metric matches the problem.

Short, interview-ready answers

- "Precision measures how many predicted positives are correct; use it when false positives are expensive."

- "Recall measures how many actual positives we caught; use it when false negatives are dangerous."

- "F1 balances both; use it for imbalanced data or when you want a single summary metric."

Use these lines, back them with a short example from your past work (or a concise hypothetical) and you’ll sound precise, practical, and interview-ready.