TikTok ML Scientist Interview: 3-Round Prep Guide (Bugfree Users' Experience)

bugfree.ai is an advanced AI-powered platform designed to help software engineers master system design and behavioral interviews. Whether you’re preparing for your first interview or aiming to elevate your skills, bugfree.ai provides a robust toolkit tailored to your needs. Key Features:

150+ system design questions: Master challenges across all difficulty levels and problem types, including 30+ object-oriented design and 20+ machine learning design problems. Targeted practice: Sharpen your skills with focused exercises tailored to real-world interview scenarios. In-depth feedback: Get instant, detailed evaluations to refine your approach and level up your solutions. Expert guidance: Dive deep into walkthroughs of all system design solutions like design Twitter, TinyURL, and task schedulers. Learning materials: Access comprehensive guides, cheat sheets, and tutorials to deepen your understanding of system design concepts, from beginner to advanced. AI-powered mock interview: Practice in a realistic interview setting with AI-driven feedback to identify your strengths and areas for improvement.

bugfree.ai goes beyond traditional interview prep tools by combining a vast question library, detailed feedback, and interactive AI simulations. It’s the perfect platform to build confidence, hone your skills, and stand out in today’s competitive job market. Suitable for:

New graduates looking to crack their first system design interview. Experienced engineers seeking advanced practice and fine-tuning of skills. Career changers transitioning into technical roles with a need for structured learning and preparation.

TikTok ML Scientist Interview: 3-Round Prep Guide (Bugfree Users' Experience)

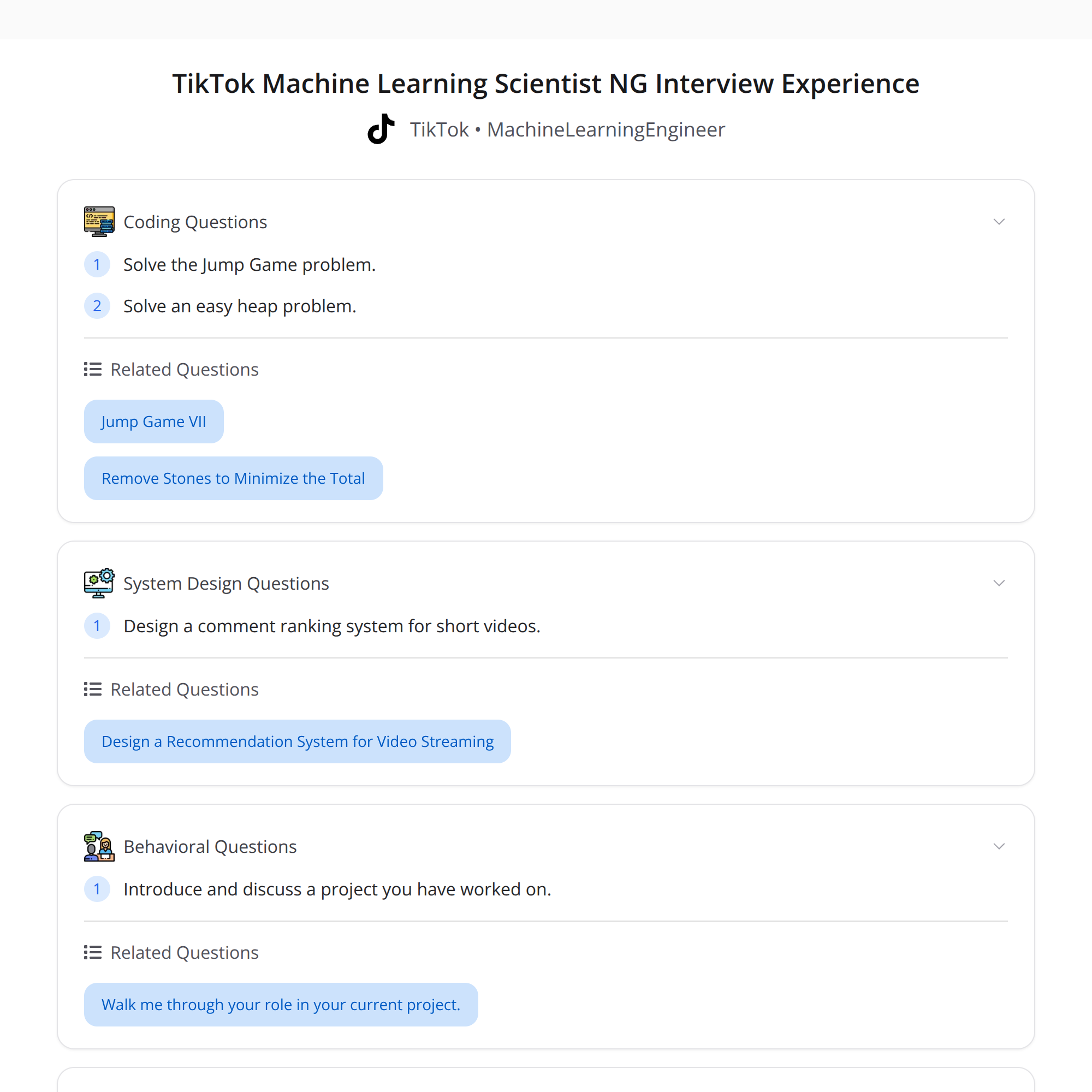

A candidate who scored well on Bugfree shared a real TikTok ML Scientist (NG) interview experience. The full process can include up to 4 rounds (two peer interviews, a hiring manager (HM) interview, and HR), but this account describes three technical rounds that are a fantastic checklist for preparation.

Below I break down what showed up in each round, what the interviewers were testing, and a practical prep checklist so you can target your study efficiently.

Interview summary: what to expect

- Planned process: up to 4 rounds (2 peer interviews, HM, HR). The shared experience covered 3 technical rounds.

- Outcome: rejection after round 3 — still valuable because the scope gives a comprehensive prep checklist.

- Focus areas across rounds: coding (medium/easy), ML fundamentals, recommender systems, project deep dives, word embeddings and Transformer details, and system design for ranking short-video comments.

Round 1 — Coding + ML fundamentals + recommender systems chat

What appeared:

- A Jump Game style coding problem (array / greedy / DP).

- Questions on ML basics (metrics, overfitting, loss functions, evaluation).

- A discussion about recommender systems at a high level.

What they were testing:

- Clean coding, edge cases, and complexity trade-offs.

- Core ML understanding: when to choose evaluation metrics, how to detect overfitting, regularization approaches.

- Practical recommender knowledge: types of recommenders, ranking vs. rating, cold-start strategies.

Prep tips:

- Practice common array/greedy/DP problems (e.g., Jump Game, maximum subarray, intervals).

- Refresh ML basics: precision/recall/AUC, bias–variance, regularization (L1/L2), train/val/test splitting, cross-validation.

- Be prepared to explain recommender system paradigms (collaborative filtering, content-based, hybrid), negative sampling, and simple candidate generation ideas.

Sample questions to rehearse:

- "Explain how you'd evaluate a ranking model for feed recommendations."

- "Solve Jump Game (can you get from index 0 to last index?)." (Discuss greedy vs DP and complexity.)

Round 2 — Easy heap coding + deep project dive

What appeared:

- An easy heap/priority-queue coding question (top-K, merge K lists, sliding-window problems, etc.).

- A detailed deep-dive into one of the candidate's projects.

What they were testing:

- Familiarity with common data structures (heap complexity, API, memory trade-offs).

- Ability to tell a crisp project story: data sources, feature engineering, model choice, offline/online evaluation, deployment, limitations and next steps.

Prep tips:

- Practice heap problems on LeetCode and be ready to discuss time/space complexity.

- Prepare a 2–3 minute concise project pitch that covers:

- Problem statement and impact

- Dataset and preprocessing

- Modeling choices and why (features, architecture)

- Evaluation metrics and A/B test design

- Engineering/production considerations and lessons learned

Questions to prepare answers for:

- "Walk us through the most important feature in your project and why it helped."

- "How did you validate the model offline and online?"

Round 3 — Advanced ML concepts, no coding; system design for ranking

What appeared (this round was described as hard and primarily conceptual):

- Word2vec implementation trade-offs, especially on small datasets.

- Transformer positional encodings (sinusoidal vs learned) and why they matter.

- A very detailed walkthrough of resume projects.

- A system design question: comment ranking for short videos (candidate generation, ranking, freshness, latency, personalization).

What they were testing:

- Deep model-level understanding and practical trade-offs.

- Ability to reason about algorithmic choices under data/compute constraints.

- System-level thinking for real-time ranking and ML engineering concerns.

Key concepts to review:

- Word2vec (CBOW vs Skip-gram), negative sampling, subsampling frequent words, handling small corpora (pretraining on larger corpora, transfer learning, data augmentation), and evaluation of embeddings.

- Positional encoding: sinusoidal intuition (handing relative position information without learned params) vs learned positional embeddings (trade-offs: capacity vs generalization to longer sequences).

- Ranking system design: high-level architecture including data ingestion, feature store, offline candidate generation, online candidate retrieval, ranking model (pointwise/pairwise/listwise), re-rankers, and personalization layers.

- Operational concerns: latency budgets, freshness (how to surface new comments), caching, feature staleness, online feature computation, A/B testing metrics (CTR, engagement, abusive content rate), fairness and safety filters.

Example approach for "Comment ranking for short videos":

- Data layer: user interactions, comment metadata, moderation signals.

- Candidate generation: retrieve comments by recency, similarity to user/video, social graph, or learned recall model.

- Ranking: a lightweight online model for latency (e.g., gradient-boosted trees / shallow neural network) plus a heavier offline re-ranker for batch results.

- Safety & business rules: toxicity filters, spam detection, content policy.

- Metrics & experiments: offline proxies + live A/B testing (engagement, dwell time, moderation load).

Why rejection after R3 is still useful

Even if the outcome was a rejection, the topics covered form a thorough checklist of what TikTok (and many other ML-focused roles) expect. Use the rounds as a focused syllabus to prioritize study time.

Practical prep checklist (2–4 weeks plan)

- Coding (daily)

- 10–15 array/greedy/heap/DP problems on LeetCode; time yourself.

- Practice explaining complexity and edge cases aloud.

- ML fundamentals (every other day)

- Review metrics, bias–variance, regularization, loss functions, and model selection.

- Study recommender basics: candidate generation vs ranking, negative sampling, offline/online eval.

- Deep dives (weekends)

- Prepare 2 polished project walkthroughs (2–3 minutes + deep technical backup).

- Rehearse answers about feature importance, ablation studies, and deployment challenges.

- Advanced topics (ongoing)

- Read word2vec and Transformer positional encoding explanations; summarize trade-offs in a paragraph each.

- Practice system design for ranking problems: sketch architecture and discuss trade-offs.

- Mock interviews

- Peer or coach mock interviews for both coding and system/ML design.

- Focus on communicating trade-offs clearly and concisely.

Recommended resources

- LeetCode (arrays, heaps, DP)

- "Hands-On Recommendation Systems" or online courses on recommender systems

- Original papers: Word2Vec (Mikolov et al.), "Attention Is All You Need" (Vaswani et al.) — read the positional encoding sections

- System design for ML: blogs and case studies on ranking systems and production ML pipelines

Final notes

- Expect a mix of coding, ML fundamentals, project storytelling, and production/system thinking.

- Practice concise explanations and be ready to defend model and engineering choices.

- Use this three-round outline as a prioritization map: if you can cover everything on this list, you’ll be in a strong position for similar ML scientist interviews.

Good luck — and treat every round (even rejections) as a data point to improve your next run.

#MachineLearning #InterviewPrep #RecommenderSystems