Data Interview Must-Know: How to Design Controlled Experiments When Data Is Scarce

bugfree.ai is an advanced AI-powered platform designed to help software engineers master system design and behavioral interviews. Whether you’re preparing for your first interview or aiming to elevate your skills, bugfree.ai provides a robust toolkit tailored to your needs. Key Features:

150+ system design questions: Master challenges across all difficulty levels and problem types, including 30+ object-oriented design and 20+ machine learning design problems. Targeted practice: Sharpen your skills with focused exercises tailored to real-world interview scenarios. In-depth feedback: Get instant, detailed evaluations to refine your approach and level up your solutions. Expert guidance: Dive deep into walkthroughs of all system design solutions like design Twitter, TinyURL, and task schedulers. Learning materials: Access comprehensive guides, cheat sheets, and tutorials to deepen your understanding of system design concepts, from beginner to advanced. AI-powered mock interview: Practice in a realistic interview setting with AI-driven feedback to identify your strengths and areas for improvement.

bugfree.ai goes beyond traditional interview prep tools by combining a vast question library, detailed feedback, and interactive AI simulations. It’s the perfect platform to build confidence, hone your skills, and stand out in today’s competitive job market. Suitable for:

New graduates looking to crack their first system design interview. Experienced engineers seeking advanced practice and fine-tuning of skills. Career changers transitioning into technical roles with a need for structured learning and preparation.

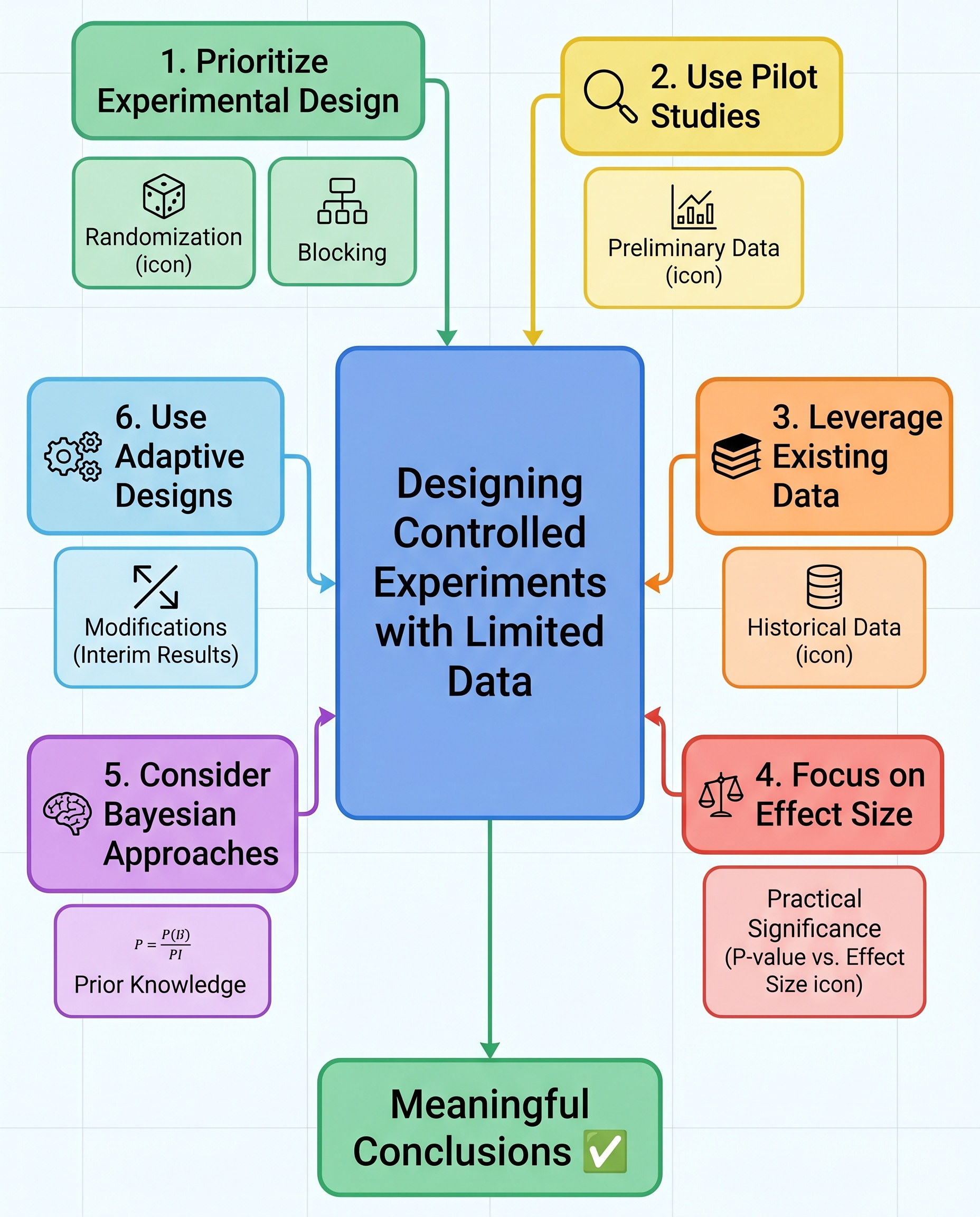

Designing Controlled Experiments When Data Is Scarce

Limited data doesn’t excuse weak experimentation—it demands better design. In interview settings you want to show you can protect causal inference even with a small N. Focus on design choices that reduce bias and variance, and make your assumptions explicit.

Here are practical strategies and what to say when asked about experiments with scarce data:

Randomization to reduce bias

Always randomize assignment when possible. Randomization balances observed and unobserved confounders on average and is your strongest defense for causal claims.

With small samples, randomization might not perfectly balance covariates—acknowledge that and plan to measure and adjust for key covariates in analysis.

Blocking (stratification) to control known variability

Use blocking on strong predictors of the outcome (e.g., user segment, geography, baseline metric).

- Blocking reduces within-block variance and increases power for a given N.

In interviews, describe which variables you’d block on and why.

Run a pilot to estimate effect size and refine metrics

A small pilot (or A/A test) helps estimate variance, detect measurement issues, and validate metric definitions.

Use pilot results to update your power calculations and to confirm that your metric captures the intended behavior.

Leverage historical or similar-data for context

Use historical baselines or data from similar experiments to set priors, estimate variance, and calibrate expectations.

Be explicit about differences between the historical context and the current experiment (seasonality, product changes).

Report effect size (and uncertainty), not just p-values

With small samples, p-values are noisy. Report point estimates with confidence intervals or credible intervals.

Emphasize practical significance: What effect magnitude would change a decision? Show whether the interval contains business-relevant thresholds.

Consider Bayesian methods to incorporate priors responsibly

Bayesian approaches let you combine prior information with current data, providing more stable estimates with small N.

Use weakly informative priors or priors based on historical/industry data and demonstrate sensitivity analyses to different priors.

Use adaptive designs carefully to reallocate samples

Group sequential designs, multi-armed bandits, or other adaptive schemes can reallocate samples toward promising arms and improve efficiency.

- In interviews, mention how you’d control Type I error (e.g., alpha spending, pre-specified stopping rules) and avoid data peeking pitfalls.

Additional tactics and considerations

- Acknowledge higher variance: Small N means higher uncertainty—be explicit about limits to what you can claim.

- Nonparametric methods and bootstrap: If distributional assumptions are doubtful, use resampling or permutation tests for robust inference.

- Hierarchical models: Pool information across related groups (partial pooling) to stabilize estimates when each group has few observations.

- Pre-registration and analysis plan: Pre-specify metrics, hypotheses, and stopping rules to avoid p-hacking and to strengthen credibility.

- Ethics and incentives: Ensure your adaptive choices don’t create perverse incentives or unfair treatment of participants.

Quick sample interview script

- State the goal and primary metric.

- Propose randomization and key blocking variables.

- Suggest a small pilot and what you’d measure in it.

- Explain how you’d use historical data or priors.

- Describe analysis: effect sizes, intervals, and robustness checks.

- Mention adaptive options if needed and how you’d control error rates.

Checklist to mention during interviews

- Randomization: yes/no and why

- Blocking variables: which and rationale

- Pilot: duration and purpose

- Historical data/prior: source and limitations

- Analysis: effect sizes, confidence/credible intervals, not just p-values

- Adaptive design: when and how, with safeguards

- Robustness: bootstrapping, sensitivity to priors, nonparametric checks

Small N demands deliberation. Walk interviewers through your trade-offs, quantify uncertainty, and show you can combine principled design with pragmatic analysis to protect causality even when data are scarce.