Data Interview Must-Know: How to Design Controlled Experiments When Data Is Scarce

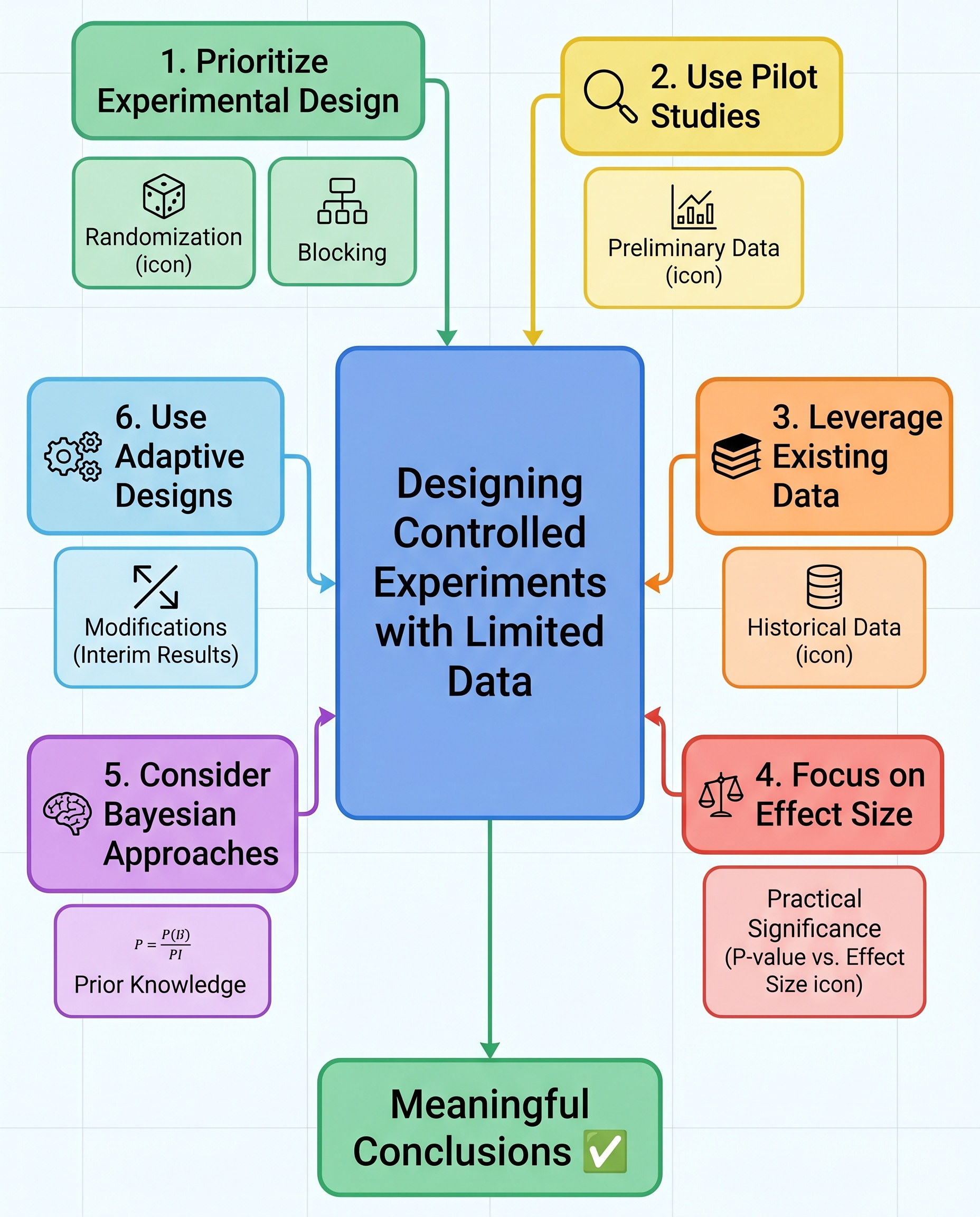

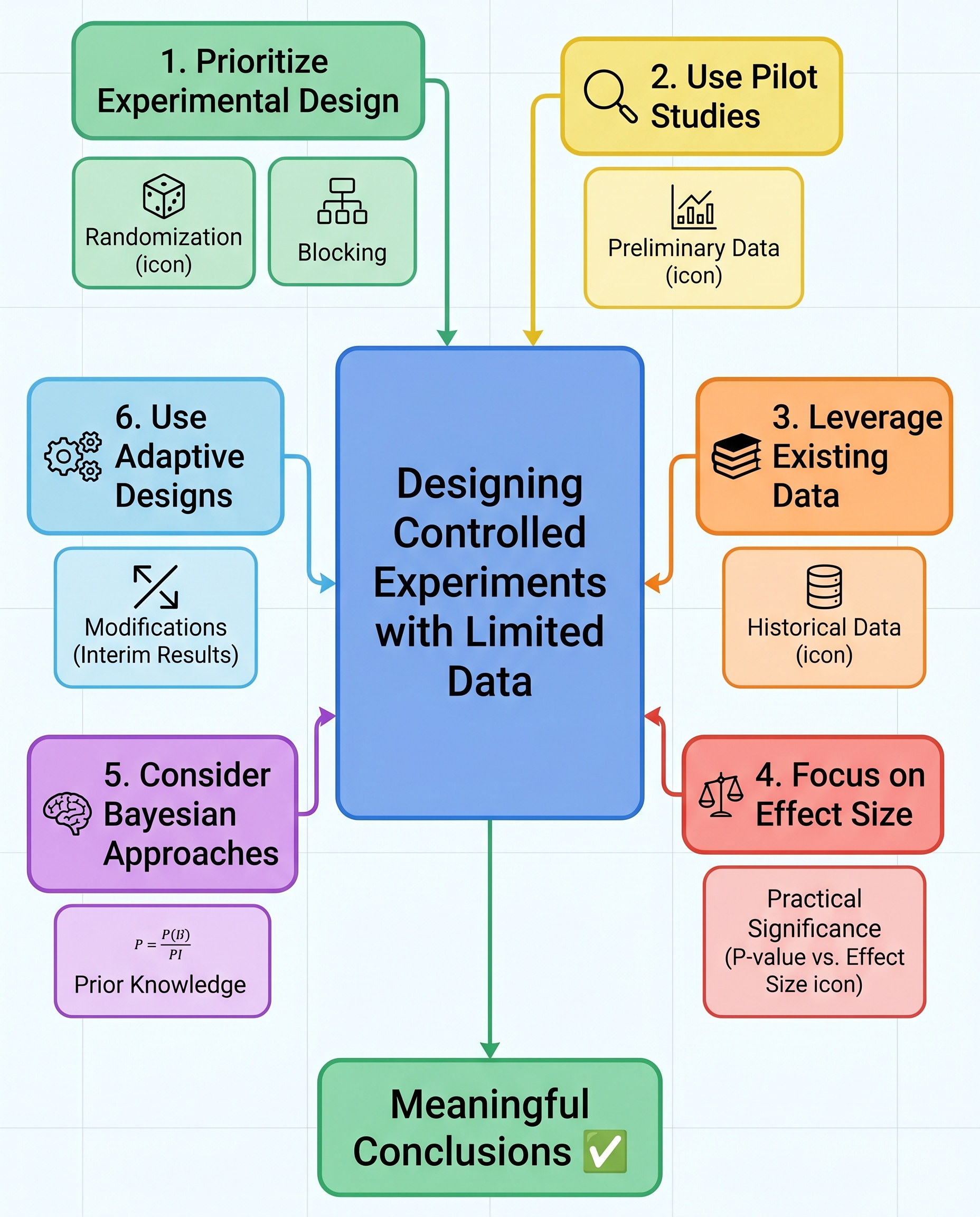

Design Controlled Experiments When Data Is Scarce — Interview-Ready Techniques

Limited data doesn’t excuse weak experimentation; it demands better design. In interviews (and in practice), demonstrate that you can protect causal inference and extract the most information from a small sample. Below are seven practical strategies you can describe succinctly and confidently.

Randomization — reduce bias

Random assignment is still the baseline: it balances observed and unobserved confounders on average.

- With small N, simple randomization can leave the arms imbalanced by chance, so emphasize stratified or matched-pair randomization.

Interview talking point: explain how you’d randomize and check baseline balance (table of covariates, standardized differences).
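A minimal sketch of stratified assignment followed by a balance check, assuming a single stratification variable and one numeric covariate. The user records, the field names (`device`, `prior_sessions`), and the helper names are hypothetical, chosen only for illustration.

```python
import random
import statistics
from collections import defaultdict

def stratified_assign(units, stratum_key, seed=0):
    """Randomly split units into 'treatment'/'control' within each stratum."""
    rng = random.Random(seed)
    by_stratum = defaultdict(list)
    for unit in units:
        by_stratum[unit[stratum_key]].append(unit)
    assignment = {}
    for members in by_stratum.values():
        rng.shuffle(members)
        half = len(members) // 2
        # For an odd-sized stratum, a coin flip decides which arm gets the extra unit.
        if len(members) % 2 == 1 and rng.random() < 0.5:
            half += 1
        for i, unit in enumerate(members):
            assignment[unit["id"]] = "treatment" if i < half else "control"
    return assignment

def standardized_difference(treated, control):
    """Difference in means divided by the pooled standard deviation."""
    pooled_var = (statistics.variance(treated) + statistics.variance(control)) / 2
    if pooled_var == 0:
        return 0.0
    return (statistics.mean(treated) - statistics.mean(control)) / pooled_var ** 0.5

# Hypothetical data: 40 users with a device stratum and a prior-activity covariate.
data_rng = random.Random(42)
users = [
    {"id": i, "device": "mobile" if i % 2 else "desktop",
     "prior_sessions": data_rng.gauss(10, 3)}
    for i in range(40)
]
arms = stratified_assign(users, "device", seed=1)
treated = [u["prior_sessions"] for u in users if arms[u["id"]] == "treatment"]
control = [u["prior_sessions"] for u in users if arms[u["id"]] == "control"]
smd = standardized_difference(treated, control)
print(f"n_treated={len(treated)}, n_control={len(control)}, SMD={smd:.3f}")
```

Stratifying guarantees balance on the stratification variable itself; the standardized difference then checks covariates that were not stratified on. A common rule of thumb treats an absolute value below about 0.1 as well balanced, though small samples will often exceed that by chance.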

Blocking (stratification) — control known variability

Block on major, known sources of heterogeneity (e.g., geography, device, prior activity) so variance within blocks is lower.

- Use block-level analysis or include block fixed effects to get more precise estimates.

Interview tip: propose 2–4 strong blocking variables rather than many weak ones; with small N, too many blocks leave too few units per block to compare arms within each one.
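One way to sketch block-level analysis is a blocked difference in means: estimate the effect inside each block, then average the block effects weighted by block size. This is closely related to, but not identical to, a regression with block fixed effects, which weights blocks somewhat differently. The rows and block labels below are made up for illustration.

```python
import statistics
from collections import defaultdict

def blocked_effect(rows):
    """Block-size-weighted average of within-block (treatment - control) mean differences.

    rows: iterable of (block, arm, outcome) with arm in {'treatment', 'control'}.
    Assumes every block contains at least one unit in each arm.
    """
    blocks = defaultdict(lambda: {"treatment": [], "control": []})
    for block, arm, outcome in rows:
        blocks[block][arm].append(outcome)
    total = sum(len(b["treatment"]) + len(b["control"]) for b in blocks.values())
    estimate = 0.0
    for b in blocks.values():
        diff = statistics.mean(b["treatment"]) - statistics.mean(b["control"])
        weight = (len(b["treatment"]) + len(b["control"])) / total
        estimate += weight * diff
    return estimate

# Hypothetical outcomes: baselines differ a lot by region, the lift does not.
rows = [
    ("US", "treatment", 12.0), ("US", "treatment", 14.0),
    ("US", "control", 10.0), ("US", "control", 12.0),
    ("EU", "treatment", 5.0), ("EU", "treatment", 7.0),
    ("EU", "control", 4.0), ("EU", "control", 4.0),
]
effect = blocked_effect(rows)
print(effect)
```

Because each comparison happens inside a block, the large baseline gap between the two hypothetical regions never enters the estimate, which is where the precision gain comes from.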

Run a Pilot — estimate effect size and refine metrics

A small pilot helps estimate variance, detect measurement issues, and validate metrics before full allocation.

- Use pilot data to compute a realistic minimum detectable effect and to calibrate stopping rules.

Interview answer: describe what success looks like in a pilot and what you'll change if results show high variance or noisy metrics.
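As a rough illustration, the standard deviation from a pilot can be plugged into the usual two-sample formula for the minimum detectable effect. The sketch below assumes equal arm sizes, a two-sided test, and a normal approximation (a t-based calculation would be somewhat more conservative at very small N). The pilot values are hypothetical.

```python
from statistics import NormalDist, stdev

def minimum_detectable_effect(sd, n_per_arm, alpha=0.05, power=0.8):
    """Smallest true difference in means detectable with the given power.

    Uses MDE = (z_{1-alpha/2} + z_{power}) * sd * sqrt(2 / n_per_arm).
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    return (z_alpha + z_power) * sd * (2 / n_per_arm) ** 0.5

# Hypothetical pilot outcomes (e.g., sessions per user).
pilot = [4.1, 5.3, 3.8, 6.0, 4.7, 5.5, 4.9, 5.1]
pilot_sd = stdev(pilot)

for n in (20, 50, 200):
    mde = minimum_detectable_effect(pilot_sd, n)
    print(f"n_per_arm={n}: MDE ~ {mde:.2f}")
```

The pilot standard deviation is itself a noisy estimate, so it is worth repeating the calculation with a pessimistic value before committing to a sample size.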

Leverage Historical or Similar Data — provide context

Use past experiments or observational data to form priors, estimate baseline rates, or inform expected variance.

- Historical controls can help with benchmarking when randomization is constrained.

Caveat: check for regime changes (seasonality, product launches, tracking changes); historical data must be comparable to the current population and measurement.
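One hedged way to turn historical data into a prior while respecting that caveat is to discount it, in the spirit of a power prior: historical counts are multiplied by a weight between 0 and 1 before being added to a uniform Beta(1, 1) prior. The counts and the weight below are assumptions for illustration, not recommendations.

```python
def discounted_beta_prior(hist_successes, hist_trials, weight=0.2):
    """Beta prior from historical conversions, down-weighted by `weight`.

    weight=0 ignores history (uniform prior); weight=1 trusts it fully.
    """
    if not 0 <= weight <= 1:
        raise ValueError("weight must be between 0 and 1")
    if not 0 <= hist_successes <= hist_trials:
        raise ValueError("need 0 <= hist_successes <= hist_trials")
    a = 1 + weight * hist_successes
    b = 1 + weight * (hist_trials - hist_successes)
    return a, b

# Hypothetical history: 120 conversions out of 1000 users, trusted at 10%.
a, b = discounted_beta_prior(120, 1000, weight=0.1)
prior_mean = a / (a + b)
effective_n = a + b - 2  # historical observations the prior is "worth"
print(f"Beta({a:.1f}, {b:.1f}), mean={prior_mean:.3f}, effective n={effective_n:.0f}")
```

A lower weight is the natural response to doubts about comparability: the prior keeps roughly the historical mean but carries less certainty.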

Report Effect Size, Not Just p-values

With small N, p-values are noisy and easily misinterpreted. Always report point estimates and confidence intervals (or credible intervals).

Emphasize practical significance and decision thresholds — show the range of plausible effects.
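A minimal sketch of reporting a point estimate with an interval, here using a percentile bootstrap for the difference in means. The outcome values are hypothetical, and the percentile bootstrap is only one of several interval methods; with very few observations its coverage can be off, so treat the interval as approximate.

```python
import random

def mean(values):
    return sum(values) / len(values)

def bootstrap_diff_ci(treated, control, n_boot=5000, level=0.95, seed=0):
    """Percentile bootstrap interval for mean(treated) - mean(control)."""
    rng = random.Random(seed)
    diffs = []
    for _ in range(n_boot):
        # Resample each arm independently, with replacement.
        t = rng.choices(treated, k=len(treated))
        c = rng.choices(control, k=len(control))
        diffs.append(mean(t) - mean(c))
    diffs.sort()
    lo_idx = int((1 - level) / 2 * n_boot)
    hi_idx = int((1 + level) / 2 * n_boot) - 1
    return diffs[lo_idx], diffs[hi_idx]

# Hypothetical outcomes for two small arms.
treated = [12.1, 9.8, 11.5, 13.0, 10.7, 12.4]
control = [9.9, 10.2, 8.7, 11.0, 9.5, 10.1]

point = mean(treated) - mean(control)
ci_low, ci_high = bootstrap_diff_ci(treated, control)
print(f"effect = {point:.2f}, 95% CI ~ [{ci_low:.2f}, {ci_high:.2f}]")
```

Comparing the whole interval against a pre-agreed decision threshold, rather than checking whether it excludes zero, keeps the discussion on practical significance.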

Use Bayesian Methods — incorporate priors responsibly

Bayesian models let you combine prior knowledge with limited data and produce direct probability statements (e.g., P(effect > 0)).

- Be explicit about prior choice and check sensitivity to different priors.

Interview angle: describe a conservative prior based on historical data and how you'd test robustness.
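For a binary metric, the idea can be sketched with a Beta-Binomial model: each arm's conversion rate gets a Beta posterior, and P(effect > 0) is estimated by Monte Carlo. The counts and both priors below are hypothetical; the same prior is applied to both arms, and the loop shows a basic sensitivity check.

```python
import random

def prob_treatment_better(succ_t, n_t, succ_c, n_c,
                          prior=(1.0, 1.0), draws=20000, seed=0):
    """Monte Carlo estimate of P(rate_treatment > rate_control | data)."""
    rng = random.Random(seed)
    a, b = prior
    wins = 0
    for _ in range(draws):
        # Conjugate update: Beta(a + successes, b + failures) for each arm.
        p_t = rng.betavariate(a + succ_t, b + n_t - succ_t)
        p_c = rng.betavariate(a + succ_c, b + n_c - succ_c)
        wins += p_t > p_c
    return wins / draws

# Hypothetical small experiment: 9/40 conversions vs 5/40.
priors = {
    "uniform Beta(1, 1)": (1.0, 1.0),
    "skeptical Beta(5, 35)": (5.0, 35.0),  # assumed to reflect a ~12.5% historical rate
}
results = {
    name: prob_treatment_better(9, 40, 5, 40, prior=p)
    for name, p in priors.items()
}
for name, prob in results.items():
    print(f"{name}: P(effect > 0) ~ {prob:.2f}")
```

If the conclusion flips between reasonable priors, the data alone are not decisive, which is itself a useful finding to report.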

Adaptive Designs — reallocate samples based on interim results

Sequential or adaptive allocation can concentrate power where it matters (e.g., multi-arm bandits, group sequential tests).

- Pre-specify adaptation rules and use proper corrections to control error rates or integrate them with Bayesian updating.

Interview talking point: discuss when you’d use early stopping, and how you’d control for false positives.
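As one illustration of adaptive allocation, the sketch below simulates Thompson sampling, a common multi-arm bandit rule, on binary outcomes. The true conversion rates are invented for the simulation and would be unknown in practice. Note that a bandit optimizes reward during the experiment; it does not by itself control false positive rates, and naive estimates from adaptively collected data can be biased.

```python
import random

def thompson_sampling(true_rates, n_rounds=500, seed=0):
    """Simulate Thompson sampling with Beta(1, 1) priors; return pulls per arm."""
    rng = random.Random(seed)
    successes = [0] * len(true_rates)
    failures = [0] * len(true_rates)
    for _ in range(n_rounds):
        # Draw one plausible rate per arm from its current posterior.
        samples = [rng.betavariate(1 + s, 1 + f)
                   for s, f in zip(successes, failures)]
        arm = max(range(len(true_rates)), key=samples.__getitem__)
        # Simulated outcome; in a real experiment this is the observed response.
        if rng.random() < true_rates[arm]:
            successes[arm] += 1
        else:
            failures[arm] += 1
    return [s + f for s, f in zip(successes, failures)]

# Hypothetical arms: control converts at 5%, variant at 30%.
pulls = thompson_sampling([0.05, 0.30])
print(f"pulls per arm: {pulls}")
```

Allocation drifts toward the arm that looks better as evidence accumulates. When the goal is a confirmatory decision with controlled error rates, a pre-specified group sequential test is usually the more natural choice.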

Practical closing tips

- Small N means higher variance — be deliberate: pre-register hypotheses, define metrics and analysis plans, and avoid mining for significance.

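- Prefer simpler models and robust inference (e.g., bootstrap or permutation-based intervals) when data is scarce, keeping in mind that the bootstrap itself can be unstable with very small samples.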

- Communicate uncertainty clearly to stakeholders and frame decisions around risk and expected value, not just statistical significance.

In interviews, walk through a short, specific example: how you would randomize and block, what a pilot would measure, a prior you’d use, and what decision rule you’d apply given limited data. That combination of practicality and statistical rigor shows you can protect causality even when samples are small.
