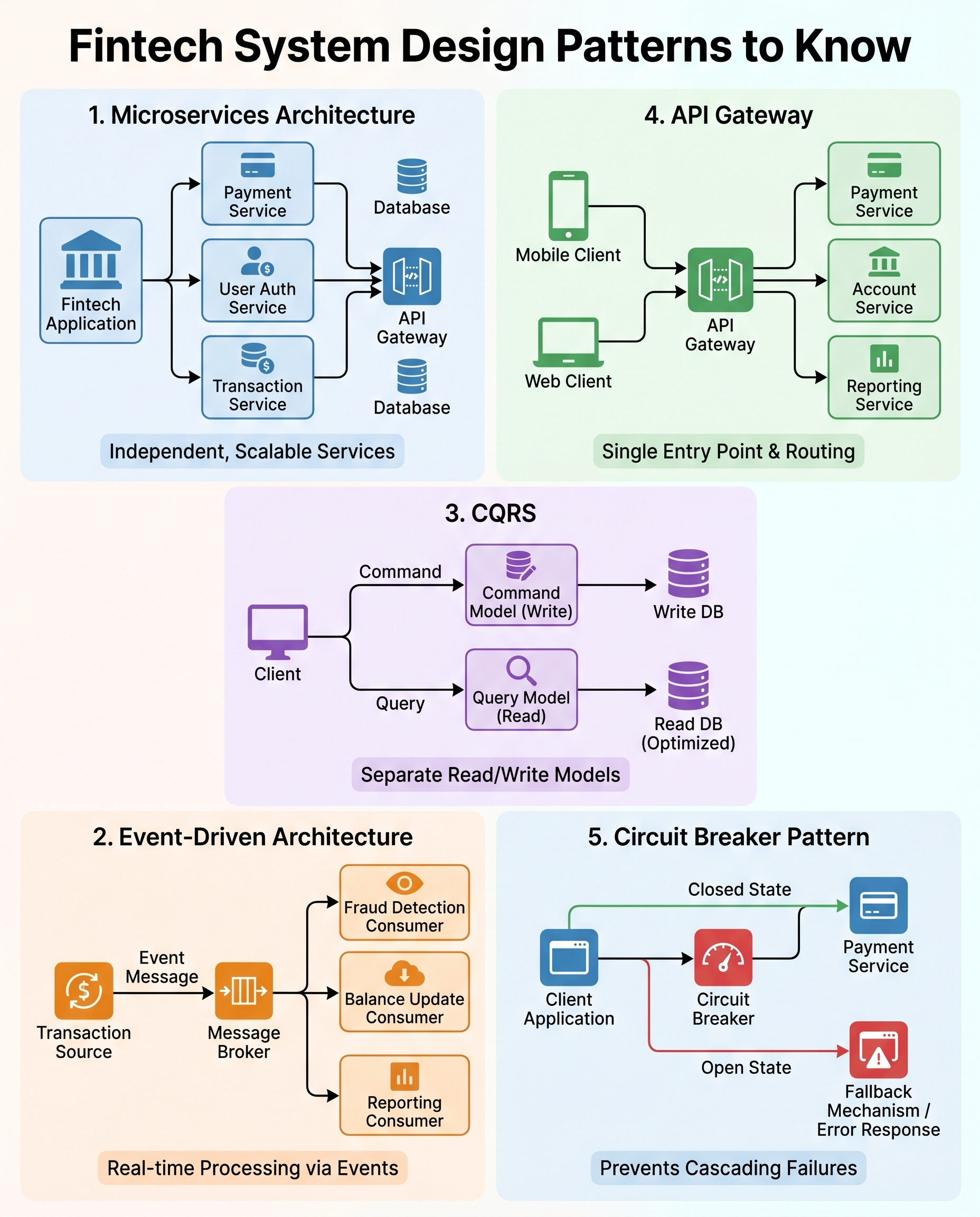

5 Fintech System Design Patterns Interviewers Expect You to Know

Fintech systems are unforgiving: sloppy designs can cause outages, lost funds, and regulatory headaches. Interviewers expect candidates not only to name patterns but also to explain when to use them, what trade-offs they carry, and how to mitigate the risks. Below are five essential patterns to study, with focused talking points you can use in interviews.

1) Microservices

What it is

- Decompose the platform into domain-specific services (e.g., payments, authentication, ledger, notification).

Why use it in fintech

- Independent scaling for hot paths (payments vs user profile)

- Safer, smaller releases and team autonomy

- Fault isolation: one service failing doesn't take the whole system down

Key trade-offs

- Increased operational complexity (deployments, monitoring, service discovery)

- Data consistency across services is harder: there are no global DB transactions spanning service boundaries

- Network latency and error handling between services

Interview talking points

- How you define service boundaries (bounded contexts)

- Approaches for consistency: sagas with compensating transactions, idempotent operations

- Observability: structured logs, distributed tracing, health checks
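To make the saga talking point concrete, here is a minimal sketch of an orchestrated saga with compensating transactions. The step names (`reserve_funds`, `post_ledger`, `settle`) and the in-memory `log` are hypothetical stand-ins for real service calls; a production orchestrator would also persist saga state and retry compensations.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class SagaStep:
    name: str
    action: Callable[[], None]      # forward operation against one service
    compensate: Callable[[], None]  # undoes the forward operation


def run_saga(steps: List[SagaStep]) -> bool:
    """Run steps in order; on failure, compensate completed steps in reverse."""
    completed: List[SagaStep] = []
    for step in steps:
        try:
            step.action()
            completed.append(step)
        except Exception:
            for done in reversed(completed):
                done.compensate()
            return False
    return True


# Hypothetical in-memory stand-ins for three services.
log: List[str] = []


def fail_settlement() -> None:
    raise RuntimeError("settlement service unavailable")


transfer = [
    SagaStep("reserve_funds", lambda: log.append("reserve"), lambda: log.append("release")),
    SagaStep("post_ledger", lambda: log.append("post"), lambda: log.append("reverse")),
    SagaStep("settle", fail_settlement, lambda: log.append("unsettle")),
]

ok = run_saga(transfer)
```

Because the third step fails, the two completed steps are undone in reverse order, which is the behavior worth describing in an interview: there is no rollback across services, only explicit compensation.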

When to use

- High throughput, rapidly evolving domains, multiple teams

2) Event-Driven Architecture

What it is

- Services communicate through events published to brokers (Kafka, Pulsar, RabbitMQ).

Why use it in fintech

- Real-time processing for fraud detection, balance updates, and notifications

- Loose coupling and replayability (reprocess events for new consumers)

Key trade-offs

- Eventual consistency (reads may be slightly stale)

- Complexity around ordering, duplication, and exactly-once semantics

- Need for robust schema/versioning and retention policies

Interview talking points

- Choosing a broker and topic partition strategy for ordering and throughput

- Handling duplicates and idempotency in consumers

- Versioning events and evolving schemas (Protobuf/Avro + schema registry)
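The partitioning and duplicate-handling points can be sketched as follows. This assumes every event carries a unique `event_id` and that keying by account keeps one account's events in order; `partition_for` is an illustrative hash, not the partitioner any particular broker uses, and the in-memory `seen` set stands in for a durable deduplication store.

```python
import hashlib
from dataclasses import dataclass
from typing import Dict, Set


@dataclass(frozen=True)
class PaymentEvent:
    event_id: str      # unique per event; used for deduplication
    account_id: str    # partition key: one account's events stay ordered
    amount_cents: int


def partition_for(key: str, num_partitions: int) -> int:
    """Stable key-to-partition mapping (illustrative, not a broker's algorithm)."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_partitions


class BalanceConsumer:
    def __init__(self) -> None:
        self.balances: Dict[str, int] = {}
        # In production this would be a durable store, updated in the same
        # transaction as the balance change.
        self.seen: Set[str] = set()

    def handle(self, event: PaymentEvent) -> bool:
        """Apply the event once; return False if it was a duplicate delivery."""
        if event.event_id in self.seen:
            return False
        current = self.balances.get(event.account_id, 0)
        self.balances[event.account_id] = current + event.amount_cents
        self.seen.add(event.event_id)
        return True
```

With at-least-once delivery, redelivering the same `PaymentEvent` leaves the balance unchanged, which is usually a more practical goal than relying on end-to-end exactly-once guarantees.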

When to use

- Multiple consumers of transactional data, asynchronous workflows, analytics pipelines

3) CQRS (Command Query Responsibility Segregation)

What it is

- Separate the write model (commands) from the read model (queries). Reads can be denormalized for performance.

Why use it in fintech

- Heavy-read surfaces like statements, dashboards, and balances need low-latency queries

- Allows use of different storage technologies optimized for read vs write

Key trade-offs

- Added complexity: multiple models and synchronization between them

- Eventual consistency between writes and read projections

Interview talking points

- How to keep read models up-to-date (events, change-data-capture)

- When not to use CQRS: simple CRUD systems, or flows where reads must be strongly consistent with the latest write

- Combining CQRS with event sourcing for auditability and replay
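As a rough illustration of keeping a read model in sync through events, the sketch below separates a command-side `WriteModel` from a query-side `BalanceReadModel`. All class and event names are hypothetical, and the append-only list stands in for an event log or change-data-capture stream.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class LedgerEntryPosted:
    account_id: str
    amount_cents: int  # positive = credit, negative = debit


class WriteModel:
    """Command side: validates commands and appends events."""

    def __init__(self) -> None:
        self.events: List[LedgerEntryPosted] = []

    def post_entry(self, account_id: str, amount_cents: int) -> LedgerEntryPosted:
        if amount_cents == 0:
            raise ValueError("amount must be non-zero")
        event = LedgerEntryPosted(account_id, amount_cents)
        self.events.append(event)
        return event


class BalanceReadModel:
    """Query side: denormalized balances built by applying events in order."""

    def __init__(self) -> None:
        self.balances: Dict[str, int] = {}
        self.applied = 0  # offset of the next event to apply

    def catch_up(self, events: List[LedgerEntryPosted]) -> None:
        for event in events[self.applied:]:
            current = self.balances.get(event.account_id, 0)
            self.balances[event.account_id] = current + event.amount_cents
            self.applied += 1

    def balance(self, account_id: str) -> int:
        return self.balances.get(account_id, 0)


writes = WriteModel()
reads = BalanceReadModel()
writes.post_entry("acct-1", 1000)
writes.post_entry("acct-1", -250)
stale = reads.balance("acct-1")   # projection has not caught up yet
reads.catch_up(writes.events)
fresh = reads.balance("acct-1")
```

The gap between `stale` and `fresh` is the eventual-consistency window; tracking the `applied` offset also makes it possible to rebuild the projection by replaying from zero.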

When to use

- When read performance and scalability are primary concerns and slight staleness is acceptable

4) API Gateway

What it is

- A single, secure entry point for clients that handles routing, auth, rate limiting, and protocol translation.

Why use it in fintech

- Centralized enforcement of security (mTLS, OAuth), request throttling, request/response shaping, and API versioning

- Can implement cross-cutting concerns like request aggregation for mobile apps

Key trade-offs

- Gateway can become a bottleneck or single point of failure (use HA and regional gateways)

- Additional latency and operational complexity

Interview talking points

- Rate limiting per API key, IP, or user to protect downstream services

- Edge caching strategies and when to bypass the gateway

- Authentication patterns: JWTs vs opaque tokens, refresh strategies, token revocation
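Per-key rate limiting is often explained with a token bucket. The following is a minimal single-process sketch; the class name and parameters are assumptions for illustration, and a real gateway would typically keep bucket state in a shared store so all gateway instances enforce the same limit.

```python
import time
from typing import Callable, Dict, Tuple


class TokenBucketLimiter:
    """Token bucket per API key: refills at rate_per_sec up to capacity."""

    def __init__(
        self,
        rate_per_sec: float,
        capacity: int,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.rate = rate_per_sec
        self.capacity = capacity
        self.clock = clock
        # api_key -> (tokens remaining, time of last refill)
        self.buckets: Dict[str, Tuple[float, float]] = {}

    def allow(self, api_key: str) -> bool:
        now = self.clock()
        tokens, last = self.buckets.get(api_key, (float(self.capacity), now))
        # Refill based on elapsed time, never exceeding capacity.
        tokens = min(float(self.capacity), tokens + (now - last) * self.rate)
        if tokens >= 1.0:
            self.buckets[api_key] = (tokens - 1.0, now)
            return True
        self.buckets[api_key] = (tokens, now)
        return False
```

The `capacity` controls how large a burst is tolerated, while `rate_per_sec` sets the sustained limit; a rejected request would normally map to an HTTP 429 response at the gateway.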

When to use

- Public-facing APIs, multiple client types, or a need for centralized security and governance

5) Circuit Breaker

What it is

- Prevents cascading failures by stopping calls to a failing dependency and providing fallbacks

Why use it in fintech

- Protects critical flows (e.g., payment gateway) and allows graceful degradation (read-only mode, cached responses)

Key trade-offs

- Tuning thresholds is hard: too sensitive and the breaker trips prematurely; too lenient and it acts too late

- In distributed systems, circuit state coordination and consistent behavior can be complex

Interview talking points

- Fallback strategies (queue requests, return cached data, degrade non-critical features)

- Integrating circuit breakers with bulkheads and retries (backoff policies)

- Monitoring and alerting: error rate, latency, open/close events
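The closed, open, and half-open states are easier to discuss with a small example. This is a simplified single-process sketch with assumed names and thresholds (consecutive-failure counting, one timeout); production libraries usually add sliding windows, limits on trial calls, and metrics hooks.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitOpenError(Exception):
    """Raised when a call is skipped because the circuit is open."""


class CircuitBreaker:
    def __init__(
        self,
        failure_threshold: int = 3,
        reset_timeout: float = 30.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None  # None means closed

    @property
    def state(self) -> str:
        if self.opened_at is None:
            return "closed"
        if self.clock() - self.opened_at >= self.reset_timeout:
            return "half_open"  # allow a trial call
        return "open"

    def call(self, fn: Callable[[], T]) -> T:
        if self.state == "open":
            raise CircuitOpenError("dependency call skipped")
        try:
            result = fn()
        except Exception:
            self.failures += 1
            # A failed trial call re-opens immediately; otherwise open
            # once consecutive failures reach the threshold.
            if self.opened_at is not None or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()
            raise
        # Success closes the circuit and resets the failure count.
        self.failures = 0
        self.opened_at = None
        return result
```

A caller would catch `CircuitOpenError` and apply one of the fallbacks above, such as returning cached data or queueing the request for later.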

When to use

- When you depend on unstable external services or want to protect system stability under load

Quick comparison & when to combine

- Microservices + API Gateway: common pairing for team autonomy and a secure edge.

- Event-Driven + CQRS: use events to update read models asynchronously.

- Circuit Breakers + Bulkheads + Retries: combine for robust fault handling.
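For the retry part of that combination, a minimal sketch of exponential backoff with full jitter might look like this. The function name and defaults are illustrative assumptions, and retries like this are only safe for idempotent operations.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def retry_with_backoff(
    fn: Callable[[], T],
    max_attempts: int = 4,
    base_delay: float = 0.1,
    max_delay: float = 2.0,
    sleep: Callable[[float], None] = time.sleep,
    rng: Callable[[], float] = random.random,
) -> T:
    """Call fn, retrying on any exception with exponential backoff and jitter."""
    for attempt in range(max_attempts):
        try:
            return fn()
        except Exception:
            if attempt == max_attempts - 1:
                raise  # attempts exhausted: surface the last error
            cap = min(max_delay, base_delay * (2 ** attempt))
            sleep(rng() * cap)  # full jitter: random delay in [0, cap)
    raise RuntimeError("max_attempts must be at least 1")
```

Wrapping the retried call in a circuit breaker keeps retries from piling onto a dependency that is already failing, and jitter spreads retry traffic so clients do not all retry at the same instant.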

How to explain trade-offs in an interview (short framework)

- State constraints (consistency, latency, throughput, regulatory needs).

- Propose a pattern and why it fits the constraints.

- Explain key trade-offs and consequences.

- Describe mitigations (monitoring, fallbacks, eventual consistency strategies).

- Mention specific technologies and metrics you’d track.

Example snippet: “I’d split the payments service out as its own microservice so we can scale and deploy it independently. To keep cross-service consistency I’d use an event-driven saga for settlement. The trade-offs are higher operational overhead and eventual consistency; we’d mitigate them with strict auditing, idempotency, and replayable events in Kafka.”

Study these patterns, practice explaining the trade-offs concisely, and be ready to justify your choices with constraints and mitigation strategies. That’s what interviewers are listening for.