Write-Through vs Write-Back Cache: The Interview Answer You Must Nail

bugfree.ai is an advanced AI-powered platform designed to help software engineers master system design and behavioral interviews. Whether you’re preparing for your first interview or aiming to elevate your skills, bugfree.ai provides a robust toolkit tailored to your needs. Key Features:

- 150+ system design questions: Master challenges across all difficulty levels and problem types, including 30+ object-oriented design and 20+ machine learning design problems.

- Targeted practice: Sharpen your skills with focused exercises tailored to real-world interview scenarios.

- In-depth feedback: Get instant, detailed evaluations to refine your approach and level up your solutions.

- Expert guidance: Dive deep into walkthroughs of all system design solutions like design Twitter, TinyURL, and task schedulers.

- Learning materials: Access comprehensive guides, cheat sheets, and tutorials to deepen your understanding of system design concepts, from beginner to advanced.

- AI-powered mock interview: Practice in a realistic interview setting with AI-driven feedback to identify your strengths and areas for improvement.

bugfree.ai goes beyond traditional interview prep tools by combining a vast question library, detailed feedback, and interactive AI simulations. It’s the perfect platform to build confidence, hone your skills, and stand out in today’s competitive job market. Suitable for:

- New graduates looking to crack their first system design interview.

- Experienced engineers seeking advanced practice and fine-tuning of skills.

- Career changers transitioning into technical roles with a need for structured learning and preparation.

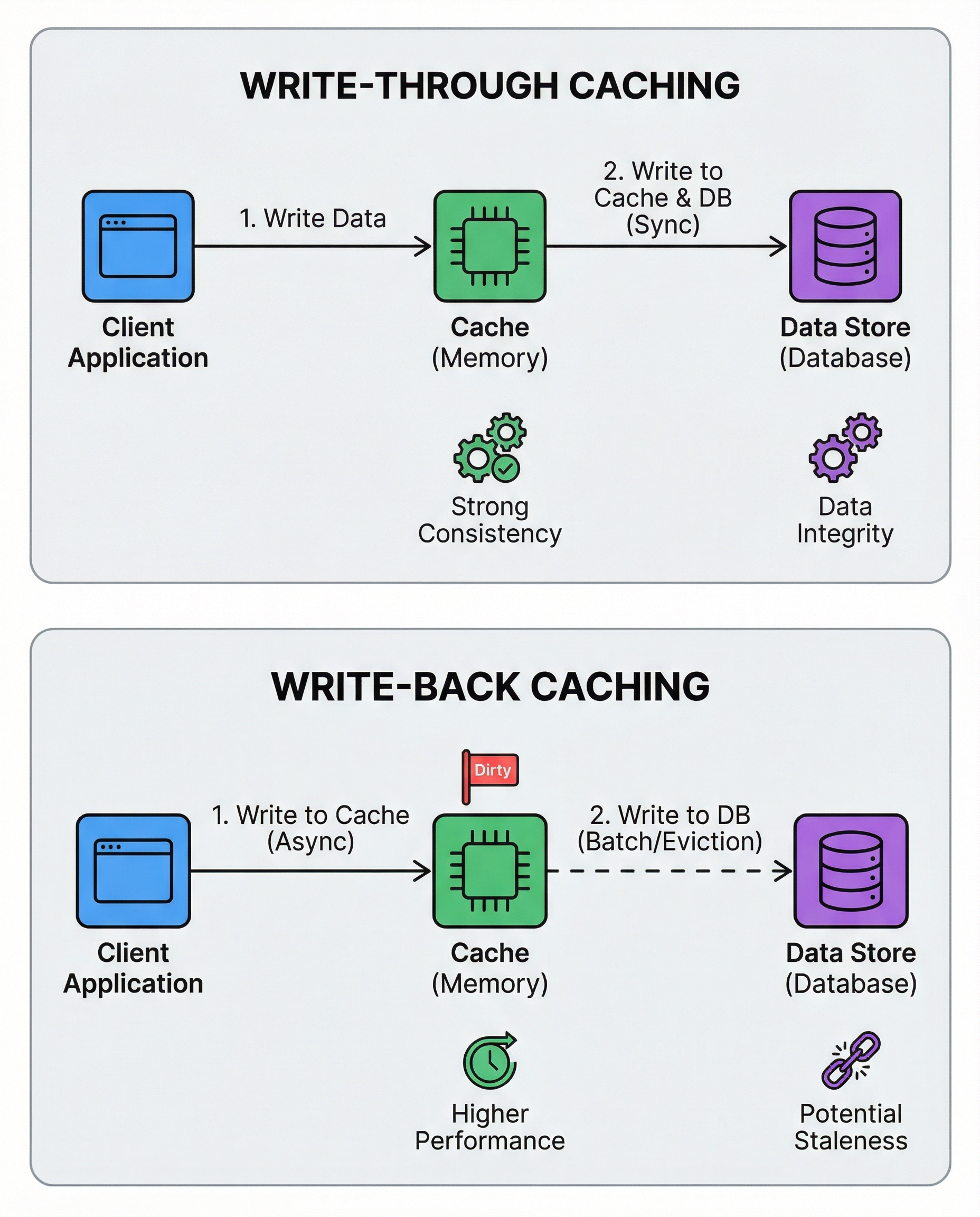

Caching isn’t just about speed; it’s a consistency contract. When you pick a cache write policy, you’re choosing when your writes become durable and when they become visible to other readers. Interviewers expect a crisp definition of each policy plus the reasoning behind the trade-offs. Here’s a simple, interview-ready breakdown and guidance on when to use each.

What they are

- Write-through: Every write goes to the cache and to the backing store (DB) synchronously, and it is acknowledged only after both are updated, so the cache and DB stay in step.

- Write-back (write-behind): A write updates only the cache and is acknowledged immediately. The modified ("dirty") entries are flushed to the backing store later (on eviction, periodically, or in batches), so the backing store is updated asynchronously.
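To make the two definitions concrete, here is a minimal Python sketch of both policies. It is illustrative rather than production code: the class names `WriteThroughCache` and `WriteBackCache` are hypothetical, a plain `dict` stands in for the database, and eviction, concurrency, and failure handling are deliberately left out.

```python
# Minimal sketch of the two write policies (hypothetical class names).
# Assumptions: a plain dict stands in for the database; eviction, concurrency,
# and failure handling are intentionally omitted.


class WriteThroughCache:
    def __init__(self, db):
        self.db = db        # backing store (stand-in for a real database)
        self.cache = {}

    def write(self, key, value):
        # Update the backing store first, then the cache; the call returns
        # (the write is "acknowledged") only after both are updated.
        self.db[key] = value
        self.cache[key] = value

    def read(self, key):
        if key not in self.cache and key in self.db:
            self.cache[key] = self.db[key]  # populate the cache on a miss
        return self.cache.get(key)


class WriteBackCache:
    def __init__(self, db):
        self.db = db
        self.cache = {}
        self.dirty = set()  # keys updated in the cache but not yet flushed

    def write(self, key, value):
        # Acknowledge after updating the cache only; the DB is now stale.
        self.cache[key] = value
        self.dirty.add(key)

    def read(self, key):
        if key not in self.cache and key in self.db:
            self.cache[key] = self.db[key]
        return self.cache.get(key)

    def flush(self):
        # Push dirty entries to the backing store (e.g. on a timer or on eviction).
        for key in self.dirty:
            self.db[key] = self.cache[key]
        self.dirty.clear()


db_wt, db_wb = {}, {}
wt, wb = WriteThroughCache(db_wt), WriteBackCache(db_wb)
wt.write("user:1", "alice")
wb.write("user:1", "alice")
print(db_wt.get("user:1"))  # alice
print(db_wb.get("user:1"))  # None (not flushed yet)
wb.flush()
print(db_wb.get("user:1"))  # alice
```

The only structural difference is where the DB write happens: inside `write()` for write-through, and inside a separate `flush()` for write-back.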

Key trade-offs

Write-through

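- Pros:

  - Stronger consistency: the cache and DB are updated together, so reads from either one see the most recent acknowledged write.

  - Simpler failure semantics and easier recovery: if the cache crashes, no acknowledged write is lost, because the DB already has it.

  - Predictable correctness, which makes it a good fit for critical data (payments, account balances).

- Cons:

  - Higher write latency: every write waits for a synchronous DB round trip.

  - Increased DB load: every write reaches the DB, with no opportunity to batch or coalesce.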
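A back-of-the-envelope cost model makes these cons concrete. Assume, for illustration only, a write rate of $\lambda$ writes per second, cache and DB write latencies $T_c$ and $T_d$, and that the cache and DB writes are issued one after the other. Write-through then pays both latencies on every write and sends every write to the DB. For contrast, a write-back cache that flushes every $\Delta$ seconds and coalesces repeated writes, with $K_\Delta$ distinct keys dirtied per interval, pays only the cache latency and issues at most one DB write per dirty key per interval:

$$
T_{\text{write}}^{\text{WT}} = T_c + T_d, \qquad R_{\text{DB}}^{\text{WT}} = \lambda
$$

$$
T_{\text{write}}^{\text{WB}} = T_c, \qquad R_{\text{DB}}^{\text{WB}} = \frac{K_\Delta}{\Delta} \le \frac{\lambda \Delta}{\Delta} = \lambda
$$

The bound holds because $K_\Delta \le \lambda \Delta$: there cannot be more distinct dirty keys than writes. The gap in DB load is largest when writes concentrate on a few hot keys ($K_\Delta \ll \lambda \Delta$).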

Write-back

- Pros:

  - Much lower write latency: a write is acknowledged after the cache update alone, which suits write-heavy workloads.

  - Batching and coalescing: repeated writes to the same key collapse into a single DB write, reducing DB load and increasing throughput.

- Cons:

  - Risk of data loss if the cache crashes before flushing to the DB (unless you add durability mechanisms).

  - More complex eviction and flush logic, which makes correctness harder to reason about.

  - Potential for stale data if reads bypass the cache (for example, other services querying the DB directly) or if multiple cache replicas aren’t coordinated.
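The coalescing benefit and the crash risk come from the same fact: until a flush, the latest value exists only in the cache. The sketch below shows both under simplifying assumptions: `CountingDB` and `CoalescingWriteBackCache` are hypothetical classes, a counter stands in for DB round trips, and a "crash" is simulated by discarding the cache object before `flush()` runs.

```python
# Sketch: write-back coalescing (fewer DB writes) and crash-before-flush data loss.
# Assumptions: CountingDB is a hypothetical stand-in for a database that counts
# write round trips; a "crash" is simulated by dropping the cache before flush().


class CountingDB:
    def __init__(self):
        self.rows = {}
        self.writes = 0  # number of DB write round trips

    def put(self, key, value):
        self.rows[key] = value
        self.writes += 1


class CoalescingWriteBackCache:
    def __init__(self, db):
        self.db = db
        self.dirty = {}  # key -> latest value not yet flushed

    def write(self, key, value):
        # A later write to the same key overwrites the earlier one in memory,
        # so only the final value is sent to the DB (coalescing).
        self.dirty[key] = value

    def flush(self):
        for key, value in self.dirty.items():
            self.db.put(key, value)
        self.dirty.clear()


# Coalescing: 1,000 updates to one counter become a single DB write.
# A write-through cache would have made 1,000 DB writes here.
db = CountingDB()
cache = CoalescingWriteBackCache(db)
for i in range(1000):
    cache.write("page_views", i + 1)
cache.flush()
print(db.writes, db.rows["page_views"])  # 1 1000

# Data loss: the cache "crashes" (is dropped) before it flushes.
db2 = CountingDB()
cache2 = CoalescingWriteBackCache(db2)
cache2.write("balance:42", 100)
del cache2                           # simulated crash, no flush
print(db2.rows.get("balance:42"))    # None: the acknowledged write is gone
```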

Practical considerations

- Durability: If you can’t tolerate lost updates (banking, billing), prefer write-through or ensure strong durability for the cache (replication, write-ahead logs, or immediate persistence).

- Performance: If low write latency and high throughput matter, and losing a small window of recent writes is acceptable (or mitigated), write-back with careful batching may be appropriate.

- Complexity: Write-back requires careful handling of eviction, crash recovery, ordering, and concurrency. Add queues, checkpoints, or a WAL to mitigate risk.

- Read patterns: If reads must reflect recent writes everywhere, including reads that go straight to the DB (other services, reports, replicas), write-through simplifies correctness.
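One way to apply the durability advice above is to append each write-back write to a write-ahead log (WAL) before acknowledging it, then replay the log on restart. The following is a minimal single-process sketch under assumptions of our own: a local JSON-lines file serves as the WAL, a `dict` stands in for the DB, and `WALWriteBackCache` is a hypothetical name. It omits checksums, concurrency control, and the log management a real system would need.

```python
import json
import os
import tempfile

# Sketch: a write-back cache made crash-safe with a write-ahead log (WAL).
# Assumptions: a local JSON-lines file is the WAL, a dict is the DB, and a
# single thread uses the cache. WALWriteBackCache is a hypothetical name.


class WALWriteBackCache:
    def __init__(self, db, wal_path):
        self.db = db
        self.wal_path = wal_path
        self.dirty = {}  # key -> latest value not yet flushed to the DB

    def write(self, key, value):
        # 1. Make the write durable in the log before acknowledging it.
        with open(self.wal_path, "a", encoding="utf-8") as wal:
            wal.write(json.dumps({"key": key, "value": value}) + "\n")
            wal.flush()
            os.fsync(wal.fileno())
        # 2. Then update the in-memory cache only; the DB is updated on flush().
        self.dirty[key] = value

    def flush(self):
        for key, value in self.dirty.items():
            self.db[key] = value
        self.dirty.clear()
        # Every logged write is now in the DB, so the log can be emptied.
        open(self.wal_path, "w").close()

    @staticmethod
    def recover(db, wal_path):
        # Replay the log in order after a crash; later entries win.
        # Replaying an already-flushed entry is harmless (it sets the same value).
        if os.path.exists(wal_path):
            with open(wal_path, encoding="utf-8") as wal:
                for line in wal:
                    entry = json.loads(line)
                    db[entry["key"]] = entry["value"]
        open(wal_path, "w").close()


wal_path = os.path.join(tempfile.mkdtemp(), "cache.wal")
db = {}
cache = WALWriteBackCache(db, wal_path)
cache.write("balance:42", 100)
del cache                               # simulated crash before flush
WALWriteBackCache.recover(db, wal_path)
print(db["balance:42"])                 # 100
```

Every write now waits on an `fsync`, so this design spends some of write-back's latency advantage to buy durability.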

Implementation patterns & mitigations

- Use write-back with a durable queue or replication so that cache crashes don’t lose data.

- Batch flushes during low traffic periods to reduce DB pressure.

- Combine approaches: e.g., write-through for critical keys, write-back for non-critical high-volume writes.

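- Consider cache-aside (lazy loading) when appropriate: the application reads from the cache, loads from the DB on a miss, and on writes updates the DB synchronously and invalidates the cached entry.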
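The "combine approaches" pattern can be as simple as routing each write by key. This sketch assumes a caller-supplied predicate decides which keys are critical; the `HybridCache` class and the `balance:` key prefix are hypothetical, and a `dict` stands in for the DB.

```python
from typing import Callable

# Sketch of a hybrid policy: write-through for critical keys,
# write-back for everything else.
# Assumptions: "critical" is decided by a caller-supplied predicate on the key
# (the "balance:" prefix below is hypothetical); a dict stands in for the DB.


class HybridCache:
    def __init__(self, db: dict, is_critical: Callable[[str], bool]):
        self.db = db
        self.is_critical = is_critical
        self.cache = {}
        self.dirty = set()  # non-critical keys awaiting flush

    def write(self, key: str, value) -> None:
        if self.is_critical(key):
            # Write-through path: update the DB first, so a failed DB write
            # never leaves a newer value only in the cache.
            self.db[key] = value
            self.cache[key] = value
        else:
            # Write-back path: update the cache now, defer the DB write.
            self.cache[key] = value
            self.dirty.add(key)

    def flush(self) -> None:
        for key in self.dirty:
            self.db[key] = self.cache[key]
        self.dirty.clear()


db = {}
cache = HybridCache(db, is_critical=lambda k: k.startswith("balance:"))
cache.write("balance:42", 100)  # persisted immediately
cache.write("views:home", 5)    # buffered in the cache
print(sorted(db))               # ['balance:42']
cache.flush()
print(sorted(db))               # ['balance:42', 'views:home']
```

Keeping the policy decision in one predicate makes it easy to explain in an interview which data gets which guarantee.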

Interview-ready answer (short)

"Write-through writes synchronously to both cache and DB, giving strong consistency and simpler recovery at the cost of higher write latency. Write-back writes to cache first and flushes to DB later for much faster writes, but introduces risk of lost or stale data and requires more complex eviction/flush logic. Choose based on whether you need strict consistency/durability (write-through) or you prioritize write performance and can accept additional complexity and risk (write-back)."

Quick decision checklist

- Use write-through when: correctness/durability is paramount (finance, critical state), and extra write latency is acceptable.

- Use write-back when: write performance is critical and you can absorb the extra complexity and mitigate the durability risk (analytics, buffers, some leaderboards).

Answering clearly and stating the trade-offs — especially which guarantees you’re giving up or preserving — is the key to nailing this interview question.

#SystemDesign #SoftwareEngineering #TechInterviews