High-Score (Bugfree Users) Uber Senior Data Scientist Interview: Projects + Product Experiment Design

bugfree.ai is an advanced AI-powered platform designed to help software engineers master system design and behavioral interviews. Whether you’re preparing for your first interview or aiming to elevate your skills, bugfree.ai provides a robust toolkit tailored to your needs. Key Features:

- 150+ system design questions: Master challenges across all difficulty levels and problem types, including 30+ object-oriented design and 20+ machine learning design problems.
- Targeted practice: Sharpen your skills with focused exercises tailored to real-world interview scenarios.
- In-depth feedback: Get instant, detailed evaluations to refine your approach and level up your solutions.
- Expert guidance: Dive deep into walkthroughs of all system design solutions like design Twitter, TinyURL, and task schedulers.
- Learning materials: Access comprehensive guides, cheat sheets, and tutorials to deepen your understanding of system design concepts, from beginner to advanced.
- AI-powered mock interview: Practice in a realistic interview setting with AI-driven feedback to identify your strengths and areas for improvement.

bugfree.ai goes beyond traditional interview prep tools by combining a vast question library, detailed feedback, and interactive AI simulations. It’s the perfect platform to build confidence, hone your skills, and stand out in today’s competitive job market. Suitable for:

- New graduates looking to crack their first system design interview.
- Experienced engineers seeking advanced practice and fine-tuning of skills.
- Career changers transitioning into technical roles with a need for structured learning and preparation.


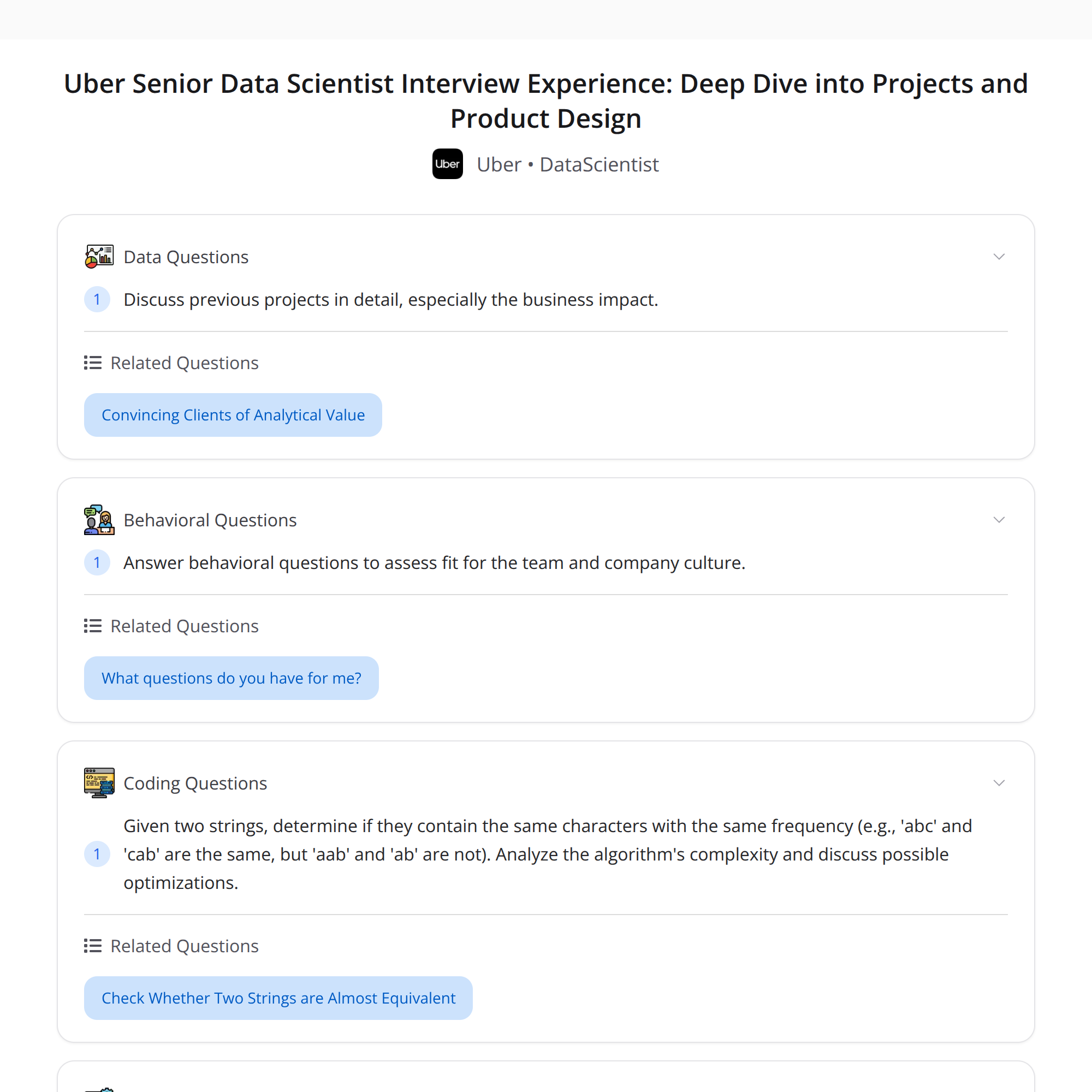

This post summarizes a high-scoring interview experience for Uber’s Senior Data Scientist role (shared by Bugfree users). Two one-hour technical rounds tested both product/experimentation thinking and project depth — plus a short coding task. Below is a clear breakdown, concrete tips, and sample approaches you can reuse in prep.

Interview structure (high level)

- Two 1-hour technical rounds:
  - Round 1 (DS Director): past projects deep-dive, a product launch case focused on experiment design, handling network effects, discussion of instrumental variables (IVs) and their assumptions, and a quick coding problem (anagram check + complexity/optimizations).
  - Round 2 (DS Manager): deep follow-up on project details, business impact, and behavioral/fit questions.

Key theme: communicate technical rigor and business outcomes clearly — both matter.

Round 1 — what they asked and how to approach it

1) Past projects

- Expect questions about objectives, signals and metrics, methods, deployment, A/B test validation, and business impact (quantified when possible).

- Structure answers with: context → objective → approach/model → evaluation/metrics → business impact → trade-offs.

2) Product launch case: design an experiment

- Standard experiment design checklist:
  - Clarify the goal and primary metric (e.g., DAU, conversion rate, revenue per user).
  - Define the population and unit of randomization (user, session, region, cluster).
  - Choose treatment and control definitions and ensure randomization is feasible.
  - Pre-specify primary and guardrail metrics and success criteria (including minimum detectable effect and power).
  - Compute sample size and estimate duration.
  - Define monitoring rules, stopping rules, and an analysis plan (intent-to-treat vs. per-protocol).
  - Plan for rollout and rollback.
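To make the "compute sample size and estimate duration" step concrete, here is a minimal sketch for a conversion-rate metric. It assumes a two-sided two-proportion z-test with the usual normal approximation, equal allocation between arms, and an absolute MDE; the function names, the 10% baseline, and the daily traffic figure are hypothetical. Continuous metrics (e.g., trips per rider) would need a variance estimate from historical data instead.

```python
import math
from statistics import NormalDist

def sample_size_per_arm(p_control: float, mde_abs: float,
                        alpha: float = 0.05, power: float = 0.8) -> int:
    """Units per arm for a two-sided two-proportion z-test (normal approximation)."""
    p_treat = p_control + mde_abs
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)           # about 0.84 for 80% power
    variance = p_control * (1 - p_control) + p_treat * (1 - p_treat)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / mde_abs ** 2)

def design_effect(avg_cluster_size: float, icc: float) -> float:
    """Variance inflation factor when randomizing clusters instead of individuals."""
    return 1 + (avg_cluster_size - 1) * icc

def duration_days(n_per_arm: int, daily_eligible: int, arms: int = 2) -> int:
    """Days needed to enroll every arm, assuming all eligible daily traffic is used."""
    return math.ceil(arms * n_per_arm / daily_eligible)

# Hypothetical example: 10% baseline conversion, detect +1 percentage point.
n = sample_size_per_arm(p_control=0.10, mde_abs=0.01)
print(n, duration_days(n, daily_eligible=10_000))
```

In practice the duration is often rounded up to whole weeks to cover day-of-week effects, and if the unit of randomization is a cluster, n is multiplied by the design effect before converting it into days.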

3) Handling network effects

- Why they matter: randomization assumptions break when one unit’s treatment affects another unit’s outcome (interference).

- Solutions/approaches:
  - Cluster-level randomization (randomize groups rather than individuals).
  - Graph clustering / community detection to form clusters with minimal cross-cluster edges.
  - Exposure modeling: define and estimate treatment exposure levels rather than a binary treatment.
  - Encouragement designs (peer encouragement) when you can’t force treatment but can randomize encouragement.
  - Use observational/causal inference methods with careful assumptions if experimentation isn’t possible.

- “Unlimited supply” nuance: even if supply (e.g., driver availability) is large, network effects can remain via user-to-user externalities (matching quality, social signals). If truly unlimited and independent, some congestion-based network effects vanish; but indirect benefits (word-of-mouth, platform value) may still create interference.
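A minimal sketch of the cluster-level and exposure-modeling ideas above, assuming you already have a user-to-cluster mapping (e.g., city or graph community) and an interaction edge list. Hash-based assignment keeps each cluster's arm stable across sessions, the cross-cluster edge share is a rough proxy for how much interference a clustering leaves, and the treated-neighbor share is one simple exposure mapping. All function names, the salt string, and the toy graph are hypothetical.

```python
import hashlib

def assign_arm(cluster_id: str, salt: str = "launch-exp-v1",
               treat_share: float = 0.5) -> str:
    """Deterministically map a cluster (hypothetical ID) to an arm via hashing."""
    digest = hashlib.sha256(f"{salt}:{cluster_id}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) / 2**32  # roughly uniform in [0, 1)
    return "treatment" if bucket < treat_share else "control"

def cross_cluster_edge_share(edges, cluster_of) -> float:
    """Fraction of edges whose endpoints sit in different clusters (leakage proxy)."""
    if not edges:
        return 0.0
    cut = sum(1 for u, v in edges if cluster_of[u] != cluster_of[v])
    return cut / len(edges)

def treated_neighbor_share(user, adjacency, arm_of_user) -> float:
    """Simple exposure mapping: share of a user's neighbors who are in treatment."""
    neighbors = adjacency.get(user, [])
    if not neighbors:
        return 0.0
    return sum(arm_of_user[n] == "treatment" for n in neighbors) / len(neighbors)

# Toy graph with hypothetical users a-d in two clusters.
cluster_of = {"a": "c1", "b": "c1", "c": "c2", "d": "c2"}
edges = [("a", "b"), ("b", "c"), ("c", "d")]
arm_of_user = {u: assign_arm(c) for u, c in cluster_of.items()}

adjacency = {}
for u, v in edges:
    adjacency.setdefault(u, []).append(v)
    adjacency.setdefault(v, []).append(u)

print(cross_cluster_edge_share(edges, cluster_of))  # 1 of 3 edges crosses clusters
print({u: treated_neighbor_share(u, adjacency, arm_of_user) for u in cluster_of})
```

Users in the same cluster always share an arm, so only the cross-cluster edges (here b to c) can leak treatment; the exposure share lets the analysis model that leakage instead of assuming it away.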

4) Instrumental variables (IVs): when/why & assumptions

- When to use: when treatment is endogenous (e.g., take-up is correlated with unobserved confounders) and you have a valid instrument that shifts treatment take-up and affects the outcome only through treatment.

- Main assumptions:
  - Relevance: the instrument strongly predicts treatment.
  - Exclusion restriction: the instrument affects the outcome only via the treatment (no direct path).
  - Independence: the instrument is as good as randomly assigned, i.e., independent of the unobserved confounders of treatment and outcome.
  - (Sometimes) Monotonicity: no "defiers"; if the instrument increases treatment for some units, it never decreases it for others. Together with the other assumptions, this lets you interpret the IV estimate as a local average treatment effect (LATE) for compliers.

- Practical examples: random assignment to encouragement, geographic variation in policy exposure, or time-based rollouts.
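The toy simulation below illustrates why IVs help. It assumes a randomized encouragement z as the instrument, an unobserved confounder u that drives both take-up d and outcome y, and a constant true effect of 2.0; all of these, and the function names, are hypothetical. The naive comparison of takers vs. non-takers is biased upward, while the Wald estimator (reduced-form difference divided by the first-stage difference, the binary-instrument special case of 2SLS) recovers the effect. The first-stage difference doubles as a relevance check.

```python
import random
from statistics import fmean

def simulate(n=200_000, true_effect=2.0, seed=7):
    """Toy data: u confounds d and y; z is randomized and reaches y only via d."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        u = rng.gauss(0, 1)                            # unobserved confounder
        z = 1 if rng.random() < 0.5 else 0             # randomized instrument
        d = 1 if z + u + rng.gauss(0, 1) > 0.5 else 0  # take-up depends on z and u
        y = true_effect * d + u + rng.gauss(0, 1)      # no direct z -> y path
        rows.append((z, d, y))
    return rows

def naive_difference(rows):
    """Takers vs. non-takers: biased because u drives both d and y."""
    return (fmean([y for _, d, y in rows if d == 1])
            - fmean([y for _, d, y in rows if d == 0]))

def first_stage(rows):
    """Relevance check: how much the instrument shifts take-up."""
    return (fmean([d for z, d, _ in rows if z == 1])
            - fmean([d for z, d, _ in rows if z == 0]))

def wald_estimate(rows):
    """IV (Wald) estimate: reduced-form effect on y divided by the first stage."""
    reduced_form = (fmean([y for z, _, y in rows if z == 1])
                    - fmean([y for z, _, y in rows if z == 0]))
    return reduced_form / first_stage(rows)

rows = simulate()
print(f"first stage: {first_stage(rows):.3f}")
print(f"naive: {naive_difference(rows):.3f}  IV (Wald): {wald_estimate(rows):.3f}")
```

With heterogeneous effects, the same ratio estimates the effect for compliers only under monotonicity; a real analysis would also report standard errors, for example from a 2SLS routine in a statistics library.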

5) Quick coding: check if two strings are anagrams

- Clarify assumptions: character set (ASCII, lowercase letters, Unicode), case sensitivity, whitespace.

- Approaches:
  - Sorting method: sort both strings and compare. Time: O(n log n) (n = length), Space: O(n).
  - Counting method (hashmap or fixed-size array for known alphabet): iterate once and count frequency differences. Time: O(n), Space: O(k) where k is alphabet size.

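Sample Python approach (assuming lowercase a–z):

```python
# O(n) time, O(1) extra space if the alphabet is fixed (26 letters)
def is_anagram(a: str, b: str) -> bool:
    if len(a) != len(b):
        return False
    counts = [0] * 26
    for ch1, ch2 in zip(a, b):
        counts[ord(ch1) - ord("a")] += 1  # count each letter of a
        counts[ord(ch2) - ord("a")] -= 1  # cancel it with the letters of b
    # every letter must balance out for the strings to be anagrams
    return all(c == 0 for c in counts)
```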

Notes: use collections.Counter for general Unicode input and for clarity (still O(n) time, O(k) space). Discuss edge cases with the interviewer and optimize based on constraints.
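For the general case mentioned in the note, a sketch might look like the following. It assumes the comparison should ignore case and whitespace (confirm this with the interviewer) and applies NFC normalization plus casefold() so that composed and decomposed forms of the same character compare equal. The function names are illustrative, and both the counting and sorting approaches are shown for comparison.

```python
import unicodedata
from collections import Counter

def normalize(s: str, ignore_case: bool = True, ignore_spaces: bool = True) -> str:
    """Canonicalize input before comparing (assumed rules: case and spaces ignored)."""
    s = unicodedata.normalize("NFC", s)  # merge combining accents into one code point
    if ignore_case:
        s = s.casefold()                 # Unicode-aware lowercasing
    if ignore_spaces:
        s = "".join(s.split())           # drop all whitespace
    return s

def is_anagram_counter(a: str, b: str) -> bool:
    """Counting approach for arbitrary characters: O(n) time, O(k) space."""
    a, b = normalize(a), normalize(b)
    return len(a) == len(b) and Counter(a) == Counter(b)

def is_anagram_sorted(a: str, b: str) -> bool:
    """Sorting approach: O(n log n) time, O(n) space for the sorted copies."""
    a, b = normalize(a), normalize(b)
    return sorted(a) == sorted(b)

print(is_anagram_counter("Listen", "Silent"))         # True
print(is_anagram_counter("Dormitory", "dirty room"))  # True
print(is_anagram_sorted("abc", "abd"))                # False
```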

Round 2 — what to expect

- Deep dive into a few projects: interviewers will probe technical details (model choices, feature engineering, validation), edge cases, and deployment/monitoring.

- Business impact & trade-offs: quantify business outcomes, describe alternative approaches you considered, and explain why you chose a particular solution.

- Behavioral fit: use STAR (Situation, Task, Action, Result) and focus on leadership, cross-functional influence, and product intuition.

Key takeaways & preparation checklist

- Communicate both technical rigor and business outcomes: always tie your methods back to business metrics and impact.

- For experiment design problems: follow a checklist (goal → unit → metrics → randomization → power → analysis plan) and explicitly call out interference risks.

- For network effects: propose cluster designs, exposure models, and encouragement designs; explain how each one reduces or accounts for interference.

- For IVs: state assumptions (relevance, exclusion, independence), give real examples, and explain plausibility checks.

- For coding: clarify constraints, pick an approach, explain complexity, and mention edge cases.

- Behavioral stories: quantify impact, give clear role delineation, and highlight collaboration.

Quick prep plan (1–2 weeks)

- Rehearse 4–6 project summaries focusing on impact and trade-offs.

- Practice 3–5 experiment designs with network interference scenarios.

- Review IV theory and a few applied examples.

- Brush up on small coding problems (string & array manipulations) and practice explaining complexity.

Good luck — remember: clarity, structure, and measurable impact are as important as technical correctness.

#DataScience #ProductAnalytics #Experimentation