High-Score (Bugfree Users) Uber Senior Data Scientist Interview: Projects + Product Experiment Design

A Bugfree community user shared a concise, high-scoring interview experience for Uber's Senior Data Scientist role. Below is a cleaned-up and expanded recap with practical takeaways you can use to prepare.

Interview format (what to expect)

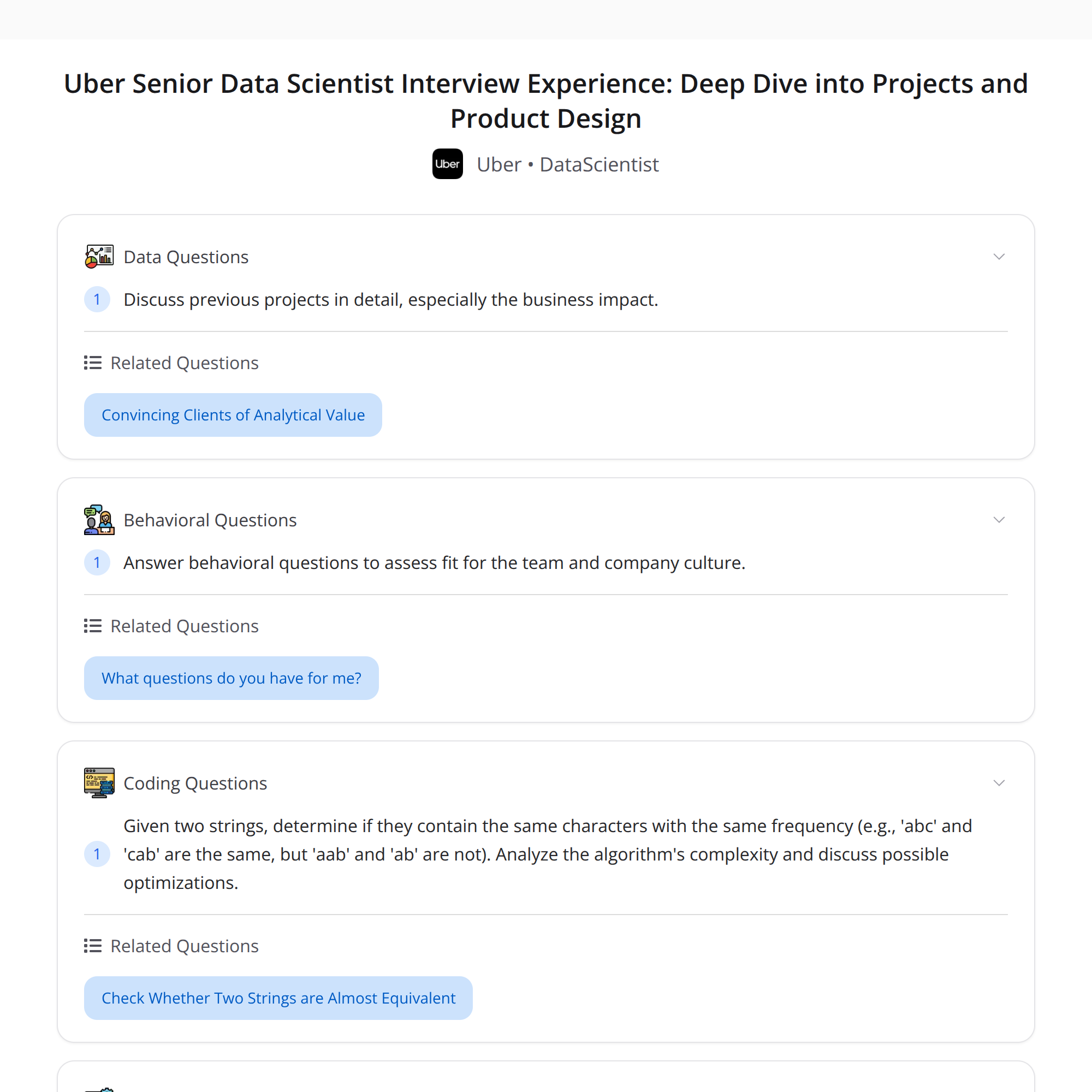

- Two technical 1-hour rounds.

- Round 1: DS Director — deep dive on past projects, then a product case focused on experiment design; a short coding question to finish.

- Round 2: DS Manager — deeper exploration of project execution, impact, and behavioral fit.

Key theme: demonstrate both technical rigor and clear, business-focused communication.

Round 1 — structure and sample topics

Past projects

- Expect probing questions about your role, choices, failure modes, and measurable impact.

- Quantify outcomes (lift, revenue impact, retention, cost savings) and trade-offs.

Product launch case — experiment design

- Typical ask: design an experiment, handle network effects, and reason about IVs (instrumental variables).

- Important elements to cover:

  - Objective: primary metric(s) and guardrail metrics.

  - Randomization unit: user, ride, region, or cluster; justify the choice based on interference/network effects.

  - Sample sizing: baseline rate, minimum detectable effect (MDE), power, and duration estimates.

  - Implementation plan: logging, instrumentation of treatment exposure, monitoring, and A/A tests.

  - Analysis plan: pre-specify the primary analysis, significance thresholds, multiple-comparison controls, and heterogeneity checks.
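The sample-sizing step can be sketched with the standard normal-approximation formula for comparing two proportions. This is a minimal sketch, not the method used in the interview: the function names, the 10% baseline, and the 1-point absolute MDE are illustrative assumptions, and it assumes user-level randomization with independent units.

```python
import math
from statistics import NormalDist


def sample_size_per_arm(baseline_rate, mde_abs, alpha=0.05, power=0.80):
    """Approximate units per arm for a two-sided two-proportion z-test.

    baseline_rate: control conversion rate (e.g. 0.10)
    mde_abs: minimum detectable effect as an absolute difference (e.g. 0.01)
    """
    p1 = baseline_rate
    p2 = baseline_rate + mde_abs
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for the test
    z_beta = NormalDist().inv_cdf(power)           # quantile for the target power
    variance_sum = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance_sum / mde_abs ** 2)


def duration_days(n_per_arm, daily_eligible_units, arms=2):
    """Days needed to enroll every arm, assuming steady daily traffic."""
    return math.ceil(arms * n_per_arm / daily_eligible_units)


if __name__ == "__main__":
    n = sample_size_per_arm(baseline_rate=0.10, mde_abs=0.01)
    print(n, duration_days(n, daily_eligible_units=5000))
```

With these illustrative inputs the requirement is on the order of 15,000 units per arm. Halving the MDE roughly quadruples it, which is usually the trade-off worth saying out loud in the interview.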

Handling network effects

- Recognize SUTVA (stable unit treatment value assumption) violations: one unit's treatment can change another unit's outcome, for example when treated and control riders draw on the same pool of drivers.

- Options:

  - Cluster randomization (e.g., by geographic region or driver groups) to reduce interference.

  - Graph-aware randomization (e.g., graph cluster randomization) when user-to-user edges matter, as on matching platforms.

  - Exposure models or partial interference assumptions when full clustering is impractical.

- Practical trade-offs: cluster randomization reduces power (fewer independent units) but gives less biased causal estimates.
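The power cost of clustering can be made concrete with the standard design-effect formula, DEFF = 1 + (m - 1) * ICC, where m is the average cluster size and ICC is the intra-cluster correlation. The sketch below pairs it with a deterministic hash-based cluster assignment. Hash-based bucketing is one common approach and an assumption here, not something described in the interview; the salt, cluster IDs, and ICC value are hypothetical.

```python
import hashlib


def assign_cluster(cluster_id, experiment_salt, treatment_share=0.5):
    """Deterministically assign a whole cluster (e.g. a city) to one arm.

    Every unit inside the cluster inherits the cluster's arm, so
    interference within the cluster stays inside a single arm.
    """
    key = f"{experiment_salt}:{cluster_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    bucket = int(digest[:8], 16) / 0x100000000  # uniform-ish value in [0, 1)
    return "treatment" if bucket < treatment_share else "control"


def design_effect(avg_cluster_size, icc):
    """Variance inflation from randomizing clusters instead of individuals."""
    return 1 + (avg_cluster_size - 1) * icc


def effective_sample_size(n_units, avg_cluster_size, icc):
    """Number of independent units the clustered sample is 'worth'."""
    return n_units / design_effect(avg_cluster_size, icc)


if __name__ == "__main__":
    for city in ["city_a", "city_b", "city_c"]:  # hypothetical cluster IDs
        print(city, assign_cluster(city, experiment_salt="pricing_test_v1"))
    print(round(effective_sample_size(10_000, avg_cluster_size=100, icc=0.05)))
```

Under these illustrative numbers, 10,000 riders in clusters of 100 carry about as much information as roughly 1,680 independent riders, which is why cluster-randomized tests typically need to run longer or cover more regions.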

Instrumental variables (when/why and assumptions)

- When to use IV: when you have endogeneity (treatment assignment correlated with unobserved confounders) and a valid instrument that shifts treatment but not the outcome directly.

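- Key IV assumptions to state explicitly:

  - Relevance: the instrument must strongly predict treatment (check first-stage strength; a weak instrument makes the estimate unstable and biased).

  - Exclusion: the instrument affects the outcome only through treatment (no direct path).

  - Independence: the instrument is independent of unobserved confounders.

  - Monotonicity: the instrument never pushes anyone away from treatment (no defiers). This is needed when you interpret the estimate as a local average treatment effect (LATE) for compliers.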

- Limitations: in networks, exclusion can be hard to defend due to interference — discuss feasibility and robustness checks.
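For a binary instrument, the IV logic above reduces to the Wald estimator: the instrument's effect on the outcome divided by its effect on treatment take-up. The sketch below is illustrative only. The scenario (a randomized encouragement such as a promo notification serving as the instrument) is hypothetical, and the toy data are constructed so that the true effect of treatment is exactly 2.

```python
def mean(values):
    return sum(values) / len(values)


def wald_iv_estimate(z, d, y):
    """Wald estimator for a binary instrument.

    z: instrument (1 = encouraged, 0 = not encouraged)
    d: treatment actually taken (1 = adopted, 0 = did not adopt)
    y: outcome
    Under relevance, exclusion, independence, and monotonicity this
    estimates the LATE for compliers.
    """
    y1 = mean([yi for zi, yi in zip(z, y) if zi == 1])
    y0 = mean([yi for zi, yi in zip(z, y) if zi == 0])
    d1 = mean([di for zi, di in zip(z, d) if zi == 1])
    d0 = mean([di for zi, di in zip(z, d) if zi == 0])
    first_stage = d1 - d0    # relevance: how much the instrument shifts take-up
    reduced_form = y1 - y0   # instrument's total effect on the outcome
    if abs(first_stage) < 1e-12:
        raise ValueError("Instrument does not shift treatment (no first stage).")
    return reduced_form / first_stage


if __name__ == "__main__":
    # Synthetic toy data: outcome = 10 + 2 * treatment, so the true effect is 2.
    z = [1, 1, 1, 1, 0, 0, 0, 0]
    d = [1, 1, 1, 0, 1, 0, 0, 0]
    y = [10 + 2 * di for di in d]
    print(wald_iv_estimate(z, d, y))
```

In practice you would also report the first-stage strength and a confidence interval, typically via two-stage least squares in a statistics library rather than hand-rolled means.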

"Unlimited supply" and network effects — do they matter?

- "Unlimited supply" (e.g., infinite driver supply) reduces certain competitive effects like immediate capacity constraints.

- But network effects can still matter via user experience (availability, wait times), cross-side externalities, and engagement. Explain whether improved supply changes the relevant business metric and how you'd test it.

Short coding task (example given)

- Problem: check if two strings are anagrams (same character frequencies).

- Approaches:

  - Sort both strings and compare: O(n log n) time, O(n) memory for the sorted copies.

  - Count characters with a hash map, or with a fixed-size array when the alphabet is known and bounded (e.g., ASCII): O(n) time, O(k) space where k is the alphabet size, which is O(1) for a fixed alphabet.

- Optimizations: early length check, early exit on negative counts, use fixed-length arrays for known alphabets, streaming comparison to avoid full copies.

- Discuss complexity and edge cases (unicode, normalization, whitespace/punctuation rules).
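A minimal sketch of the counting approach, with the early length check and early exit on negative counts mentioned above. The normalization rules (case-folding, NFC normalization, optionally ignoring non-alphanumeric characters) are assumptions about what the interviewer might want; clarify them before coding.

```python
import unicodedata
from collections import Counter


def normalize(text, ignore_case=True, ignore_non_alnum=False):
    """Apply the agreed comparison rules before counting characters."""
    if ignore_case:
        text = text.casefold()
    # NFC makes composed and decomposed forms of the same character compare equal.
    text = unicodedata.normalize("NFC", text)
    if ignore_non_alnum:
        text = "".join(ch for ch in text if ch.isalnum())
    return text


def is_anagram(a, b, ignore_case=True, ignore_non_alnum=False):
    """Return True if a and b have identical character frequencies."""
    a = normalize(a, ignore_case, ignore_non_alnum)
    b = normalize(b, ignore_case, ignore_non_alnum)
    if len(a) != len(b):           # early length check
        return False
    counts = Counter(a)
    for ch in b:
        counts[ch] -= 1
        if counts[ch] < 0:         # b uses ch more often than a does
            return False
    return True                    # equal lengths and no negatives: all counts are zero


if __name__ == "__main__":
    print(is_anagram("listen", "silent"))
    print(is_anagram("Dormitory", "dirty room", ignore_non_alnum=True))
```

This runs in O(n) time and O(k) extra space for k distinct characters. The sorting version, `sorted(a) == sorted(b)`, is shorter to write and a reasonable first answer before optimizing.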

Round 2 — depth on projects & behavioral fit

- Expect deeper follow-ups: modeling choices, validation, productionization, monitoring, and the exact business impact.

- Behavioral questions: use STAR (Situation, Task, Action, Result). Be ready to discuss cross-functional communication, ambiguity handling, and a time you changed course based on data.

Communication tips (what made this interview "high-score")

- Balance technical detail with business intuition. Show you can translate model results into expected business outcomes.

- Be explicit about assumptions and how you would validate them.

- When designing experiments, pre-specify metrics and analyses — interviewers look for operational thinking.

- For product cases, include trade-offs and rollout strategies (e.g., canary releases, phased rollouts, and classifying changes by risk).

- For coding, talk through complexity and best/worst-case behavior; optimize incrementally.

Quick checklist to prepare

- Refresh causal inference basics: randomized experiments, IVs, difference-in-differences.

- Practice experiment design end-to-end: metrics, power calculations, implementation, and analysis plan.

- Review cluster/graph randomization techniques and examples.

- Brush up on coding basics and common algorithmic patterns (hashing, sorting, two-pointer).

- Prepare 2–3 project stories with clear metrics and impact statements.

Final takeaway

Interviewers look for clarity: rigorous technical reasoning plus clear focus on measurable business impact. Practice communicating assumptions, trade-offs, and how your results would move the business.
