Negative R² in Interviews: It’s Not a Bug—It’s Your Model Losing to the Mean

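Many candidates assume R² must lie between 0 and 1. That bound holds only for ordinary least squares with an intercept, scored on the same data it was fit to; in general R² can be negative. R² is defined as: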

R² = 1 − SSE / SST

where SSE is the model's sum of squared errors, Σ(yᵢ − ŷᵢ)², and SST is the total sum of squares, Σ(yᵢ − ȳ)², i.e. the squared deviation of y around its mean (n times the variance, not the variance itself). If SSE > SST, the fraction SSE/SST exceeds 1 and R² becomes negative. In plain language: your model predicts worse than a trivial predictor that always outputs the mean ȳ.

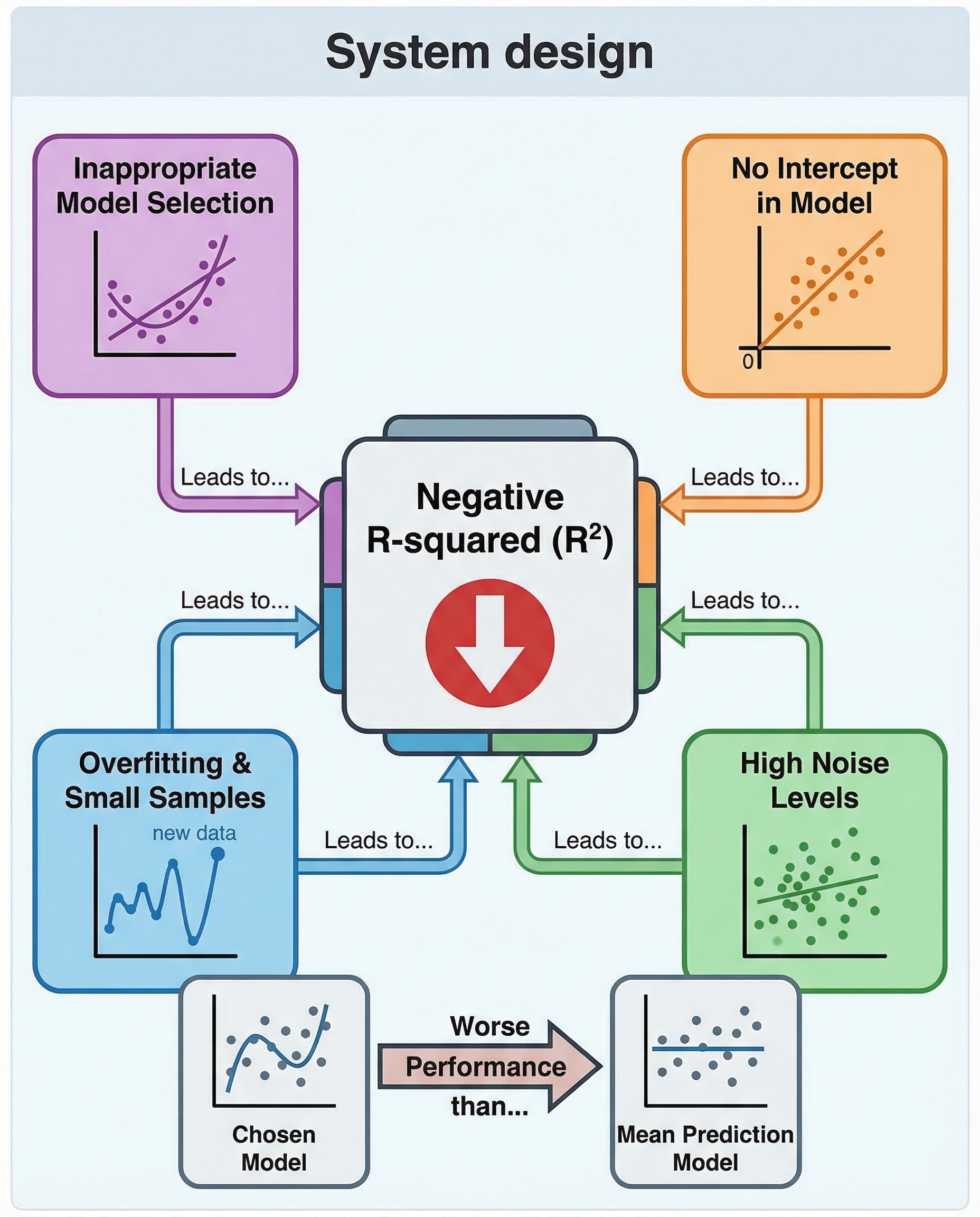
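To make the definition concrete, here is a minimal sketch in plain Python. The helper name `r_squared` and the toy values are assumptions chosen for illustration; they are not from any particular library.

```python
def r_squared(y_true, y_pred):
    """R^2 = 1 - SSE / SST, with SST measured around the mean of y_true.

    Assumes y_true is not constant (otherwise SST is 0 and R^2 is undefined).
    """
    y_bar = sum(y_true) / len(y_true)
    sse = sum((y - p) ** 2 for y, p in zip(y_true, y_pred))
    sst = sum((y - y_bar) ** 2 for y in y_true)
    return 1 - sse / sst


# Hypothetical toy targets.
y = [2.0, 4.0, 6.0, 8.0]
y_bar = sum(y) / len(y)  # 5.0

r2_perfect = r_squared(y, y)                      # exact predictions
r2_mean = r_squared(y, [y_bar] * len(y))          # always predict the mean
r2_reversed = r_squared(y, [8.0, 6.0, 4.0, 2.0])  # predictions in the wrong order

print(r2_perfect, r2_mean, r2_reversed)  # 1.0 0.0 -3.0
```

The reversed predictions have SSE = 80 against SST = 20, so they score −3: four times the squared error of the mean predictor.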

Why R² goes negative

- Baseline reminder: the mean predictor ȳ has SSE = SST, so it has R² = 0 by definition. Negative R² simply means your model's SSE is larger than that baseline.

- Common causes:

  - Model misspecification: e.g., fitting a straight line to strongly nonlinear data.

  - Overfitting the training set, then evaluating on a test set: the model generalizes poorly, so SSE on the test set exceeds SST on the test set.

  - Fitting without an intercept: least squares with an intercept can never do worse in-sample than the mean, but that guarantee disappears once the intercept is removed.

  - Preprocessing bugs or target leakage: validation scores look fine, but the model breaks on genuinely unseen data.
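As an illustration of the first two causes, the sketch below fits a straight line to data generated from y = x² and then scores it on held-out points from a different range. The data, the helper names `fit_line` and `r_squared`, and the train/test split are all assumptions made up for this example.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y = a + b*x (one feature, with intercept)."""
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    sxx = sum((x - x_bar) ** 2 for x in xs)
    b = sxy / sxx
    a = y_bar - b * x_bar
    return a, b


def r_squared(y_true, y_pred):
    """R^2 = 1 - SSE / SST, with SST around the mean of y_true."""
    y_bar = sum(y_true) / len(y_true)
    sse = sum((y - p) ** 2 for y, p in zip(y_true, y_pred))
    sst = sum((y - y_bar) ** 2 for y in y_true)
    return 1 - sse / sst


# Hypothetical nonlinear data: y = x^2.
x_train = [0.0, 1.0, 2.0, 3.0]
y_train = [x ** 2 for x in x_train]
x_test = [-3.0, -2.0, -1.0]  # held-out points outside the training range
y_test = [x ** 2 for x in x_test]

a, b = fit_line(x_train, y_train)  # a misspecified straight line

r2_train = r_squared(y_train, [a + b * x for x in x_train])
r2_test = r_squared(y_test, [a + b * x for x in x_test])

print(f"train R^2 = {r2_train:.3f}, test R^2 = {r2_test:.3f}")
```

On the training points the line looks respectable (R² above 0.9), but on the held-out points it scores well below zero, because there the mean of the test targets is a far better guess than the extrapolated line.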

Quick numeric example

Suppose SST = 100 and SSE = 150. Then

R² = 1 − 150 / 100 = 1 − 1.5 = −0.5.

Interpretation: the model's squared error is 50% larger than that of always predicting the mean of y, so the mean would have been the better forecast.
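The same arithmetic can be reproduced with actual data points. The values below are hypothetical, picked only so that SST comes out to 100 and SSE to 150.

```python
# Hypothetical targets and predictions chosen to give SST = 100 and SSE = 150.
y = [-5, 5, -5, 5]
y_hat = [-15, 10, -10, 5]

y_bar = sum(y) / len(y)                             # mean of y is 0.0
sst = sum((v - y_bar) ** 2 for v in y)              # 25 + 25 + 25 + 25 = 100
sse = sum((v - p) ** 2 for v, p in zip(y, y_hat))   # 100 + 25 + 25 + 0 = 150

r2 = 1 - sse / sst
print(sst, sse, r2)  # 100.0 150 -0.5
```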

What to say (and why) in an interview

Be concise and precise. For example:

"Negative R² means the model's SSE exceeds the total sum of squares SST, so it performs worse than the baseline predictor ȳ. Typical causes are misspecification (e.g., linear model for nonlinear data), evaluating on a test set after severe overfitting, or removing the intercept. Remedies include adding an intercept, transforming features, regularization, or choosing a more appropriate model."

That answer shows you know the formula, the intuition (comparison to the mean), and practical causes and fixes.

How to fix or avoid it

- Restore/allow an intercept unless there's a good reason not to.

- Try feature transformations (polynomials, logs) or non-linear models.

- Use regularization (Ridge, Lasso) to reduce overfitting.

- Validate on a properly held-out test set or with cross-validation, so a negative R² shows up before the model is used.

- Check the preprocessing pipeline and look for target leakage.
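The first fix in the list can be sketched as follows: the same data is fit once through the origin and once with an intercept. The data and the helper names (`fit_through_origin`, `fit_with_intercept`, `r_squared`) are assumptions for illustration only.

```python
def r_squared(y_true, y_pred):
    """R^2 = 1 - SSE / SST, with SST around the mean of y_true (centered)."""
    y_bar = sum(y_true) / len(y_true)
    sse = sum((y - p) ** 2 for y, p in zip(y_true, y_pred))
    sst = sum((y - y_bar) ** 2 for y in y_true)
    return 1 - sse / sst


def fit_through_origin(xs, ys):
    """Least squares for y = b*x (no intercept)."""
    return sum(x * y for x, y in zip(xs, ys)) / sum(x * x for x in xs)


def fit_with_intercept(xs, ys):
    """Least squares for y = a + b*x."""
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    b = (sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
         / sum((x - x_bar) ** 2 for x in xs))
    return y_bar - b * x_bar, b


# Hypothetical data lying exactly on y = 10 - 0.5*x: large offset, gentle slope.
x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [10.0 - 0.5 * v for v in x]

b0 = fit_through_origin(x, y)
a1, b1 = fit_with_intercept(x, y)

r2_origin = r_squared(y, [b0 * v for v in x])
r2_intercept = r_squared(y, [a1 + b1 * v for v in x])

print(f"no intercept: {r2_origin:.2f}, with intercept: {r2_intercept:.2f}")
```

Forced through the origin, the line cannot capture the offset of 10 and scores far below zero; with the intercept restored, the fit is exact. Note that some statistics packages report an uncentered R² (SST taken around zero) for no-intercept fits, so it is worth checking which definition a given tool uses before comparing numbers.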

Bottom line

Negative R² isn't a bug in the metric — it's a signal. It tells you the model is doing worse than the dumb baseline that always predicts the mean. Say that clearly in interviews and follow up with likely causes and fixes.