High-Score Interview: Intuit Staff SWE (TPI) — AI Basics, Kafka at Scale & PQ Coding

bugfree.ai is an advanced AI-powered platform designed to help software engineers master system design and behavioral interviews. Whether you’re preparing for your first interview or aiming to elevate your skills, bugfree.ai provides a robust toolkit tailored to your needs. Key Features:

150+ system design questions: Master challenges across all difficulty levels and problem types, including 30+ object-oriented design and 20+ machine learning design problems. Targeted practice: Sharpen your skills with focused exercises tailored to real-world interview scenarios. In-depth feedback: Get instant, detailed evaluations to refine your approach and level up your solutions. Expert guidance: Dive deep into walkthroughs of all system design solutions like design Twitter, TinyURL, and task schedulers. Learning materials: Access comprehensive guides, cheat sheets, and tutorials to deepen your understanding of system design concepts, from beginner to advanced. AI-powered mock interview: Practice in a realistic interview setting with AI-driven feedback to identify your strengths and areas for improvement.

bugfree.ai goes beyond traditional interview prep tools by combining a vast question library, detailed feedback, and interactive AI simulations. It’s the perfect platform to build confidence, hone your skills, and stand out in today’s competitive job market. Suitable for:

New graduates looking to crack their first system design interview. Experienced engineers seeking advanced practice and fine-tuning of skills. Career changers transitioning into technical roles with a need for structured learning and preparation.

High-Score (Bugfree Users) Interview Experience: Intuit Staff SWE (TPI)

A concise, practical write-up of a high-scoring interview for Intuit (Staff Software Engineer, TPI). Key themes: AI fundamentals (applied, not heavy math), system design and architecture trade-offs, Kafka and event-driven systems at scale, plus monitoring/observability and a coding + LLD component. Includes prep tips focused on narration of real systems and ops at scale.

TL;DR

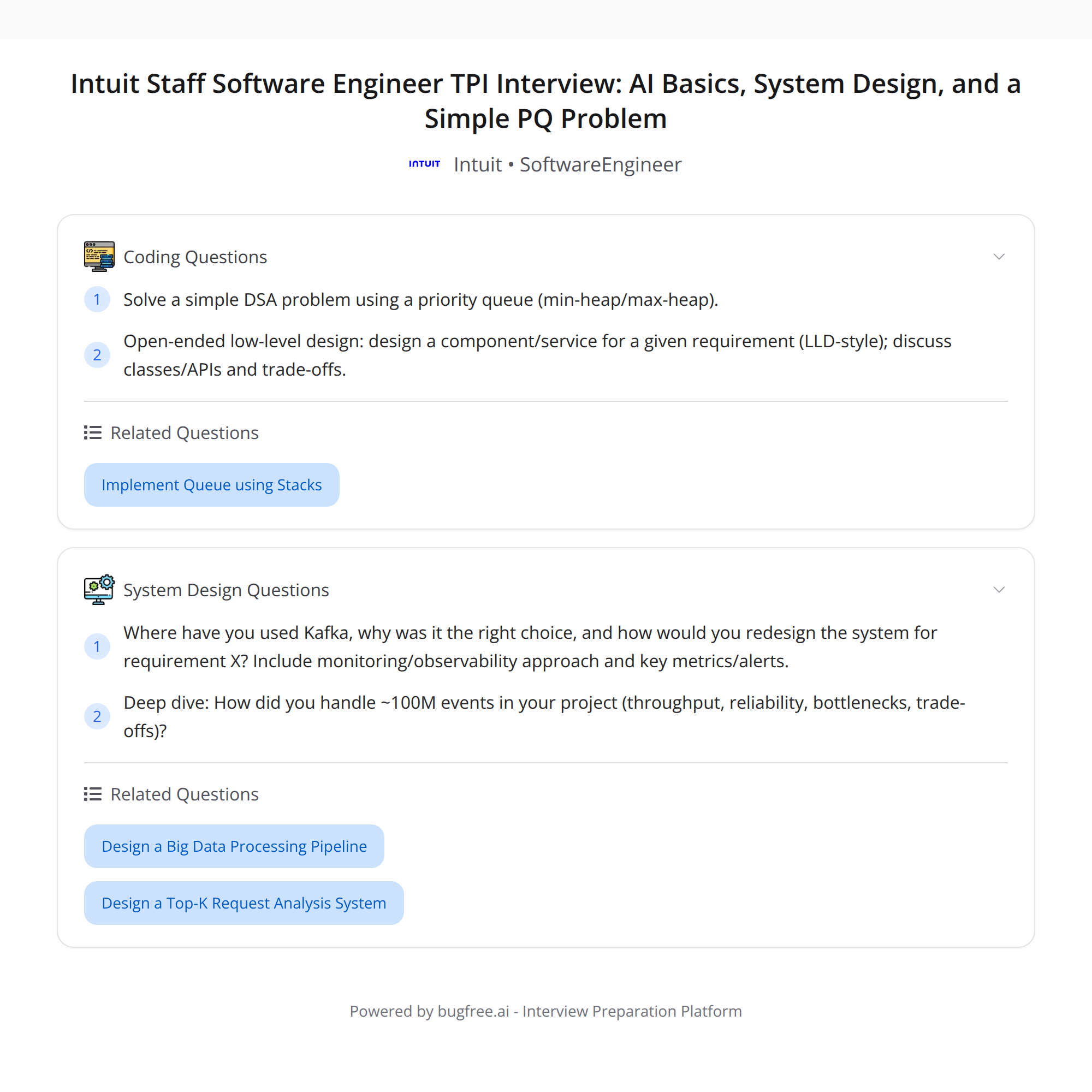

- Interview focus: practical AI basics, system design decisions, Kafka at scale, monitoring, resume deep-dive on handling ~100M events, a priority-queue coding problem, and a time-boxed low-level design (LLD) sketch.

- Coding: straightforward priority-queue (heap) + an open-ended LLD portion.

- Prep tip: narrate concrete numbers and operational details from your real systems (QPS, partitions, failures, runbooks).

Interview breakdown

AI fundamentals

- Emphasis on practical usage rather than heavy math proofs. Expect questions on how to integrate ML/LLM into production: inference latency, batching, model versioning, feature drift, and evaluation metrics.

- Talk trade-offs: on-device vs. server inference, caching responses, cost vs. latency, and safe-fallback strategies.

System design / architecture trade-offs

- Architecture choices, scaling approaches, consistency vs. availability trade-offs, and operational concerns (deployments, rollbacks, backward compatibility).

- Be explicit about constraints and why you chose a particular trade-off.

Kafka and event-driven systems at scale

- Deep dive into event volume, throughput, reliability, and bottlenecks.

- Expect questions on partitioning strategy, consumer group scaling, ordering guarantees, retention policies, compaction, and cross-datacenter replication.

Monitoring & observability

- Metrics, alerts, runbooks, and production troubleshooting. They focus on what you actually measured and how you reacted to incidents.

Resume deep-dive (~100M events)

- You’ll need to explain throughput, reliability strategies, the real bottlenecks, and what you did to detect/mitigate them.

Coding + LLD

- Coding: a priority-queue data structure problem — straightforward heap-based solution expected.

- LLD: open-ended design for a component or service; time-boxed, so provide a crisp sketch with components, APIs, data model, and scaling notes.

Kafka & event-driven systems — what to highlight

When discussing a system that handled ~100M events, narrate these concrete details:

- Traffic numbers: average and peak events/sec, message sizes, total write/read throughput.

- Topic & partition strategy: topic per resource or per tenant, number of partitions, and rationale (hot-key mitigation, parallelism).

- Durability & consistency: replication factor, in-sync replicas (ISR), leader placement, and retention/compaction decisions.

- Producer semantics: idempotence, retries, batching, compression.

- Consumer design: consumer lag patterns, parallelism with consumer groups, offset management, and ordering guarantees.

- Bottlenecks encountered: network bandwidth, disk throughput, GC pauses, broker CPU, or partition skew. Explain root cause analysis and remediation (repartitioning, batching, tuning JVM, adding brokers).

- Cross-cutting: schema evolution (Avro/Protobuf + schema registry), monitoring for under-replicated partitions, active controller count, and leader election rates.

Practical mitigations to mention:

- Increase partitions for throughput; watch partition count trade-offs (controller load, rebalances).

- Use idempotent producers and transactions for stronger delivery semantics if needed.

- Batch and compress messages to reduce network and broker load.

- Implement dead-letter queues and back-pressure strategies in consumers.

- Use MirrorMaker or Confluent Replicator for cross-dc replication with careful bandwidth planning.

Key Kafka metrics to call out:

- Bytes In/Out per broker, Requests/sec, Produce/Fetch latency, Under-replicated partitions, ISR size, Controller leader changes, Consumer lag.

Monitoring, alerts & on-call ops

- Define SLOs and SLAs: error budget, acceptable latencies, and throughput targets.

- Instrument everything: metrics, logs, traces. Correlate traces to find tail-latency causes.

- Alerts: actionable alerts (not just noise). Example alerts: consumer lag > X for Y minutes, under-replicated partitions, leader election spikes.

- Runbooks: step-by-step for common incidents (high lag, broker down, noisy GC). Document rollback paths and quick mitigation steps.

- Post-incident: root cause analysis, long-term fixes, and changes to monitoring.

The coding problem (priority queue)

Typical expectations:

- Implement a min/max priority queue using a binary heap (array-based heap). Core operations: insert (push), peek, pop (extract), and update-key if needed.

- Time complexity: O(log n) for insert/pop, O(1) for peek.

- Edge cases: empty queue behavior, duplicate priorities, stable ordering if required.

- Concurrency: if asked, discuss locking/sharding or lock-free approaches, and trade-offs of concurrent heaps vs. multiple partitions.

A short narrative for interview:

- State chosen data structure (binary heap), complexity, and memory usage.

- Walk through insertion and removal with an example.

- Mention alternatives (balanced BST, indexed heap for decrease-key) and why heap is simplest.

Low-level design (LLD) — how to time-box effectively

When given a time-boxed LLD prompt:

- Start with a one-sentence system goal and primary constraints (SLA, consistency, latency).

- Sketch the main components: API gateway, load balancer, workers/consumers, storage, cache, and monitoring.

- Define key APIs and a minimal data model (sample fields only).

- Explain how you scale each piece (sharding, horizontal scaling, partitioning) and handle failures (retries, idempotence, DLQ).

- Call out trade-offs briefly (e.g., strong ordering vs. throughput) and where you’d invest engineering time.

Example 5-minute structure:

- Quick requirements and constraints (1 min)

- High-level architecture diagram + components (2 min)

- Data model + key API signatures (1 min)

- Scaling & failure handling bullets (1 min)

How to narrate your real systems (prep tip)

Interviewers want clarity and ops experience. Use this pattern when describing any system:

- Context: What was the system? Business purpose.

- Scale: Concrete numbers (avg/peak QPS, message size, total events/day, storage size).

- Architecture: high-level components, data flow, and technologies used.

- Challenges: specific bottlenecks or incidents.

- Actions: what you changed (tuning, design, or process).

- Outcome: metrics after the change (reduced latency, fewer incidents, cost savings).

Sample lines to practice:

- "Our pipeline processed ~100M events per day (peak 10k E/sec). We used Kafka topics with 200 partitions to parallelize consumers."

- "We observed consumer lag spikes due to large GC pauses on a subset of brokers; mitigation involved JVM tuning and increasing partition count to spread load."

- "To guarantee at-least-once delivery with exactly-once processing semantics we used idempotent producers and consumer-side deduplication with compacted topics."

Practice telling two or three such stories from your resume with numbers and clear outcomes.

Final prep checklist

- Prepare 3 real-system stories with metrics and ops details.

- Review Kafka internals: partitioning, replication, rebalances, and key metrics.

- Practice a heap-based priority queue implementation and talk-through of complexity.

- Practice a 5-minute LLD sketch with components, APIs, and scaling notes.

- Be ready to discuss monitoring, runbooks, and incident responses with concrete examples.

If you want, I can:

- Turn one of your resume bullets into a practiced 2-minute narrative with numbers and ops details.

- Generate mock questions and ideal answer outlines for Kafka or the LLD portion.

#SystemDesign #Kafka #SoftwareEngineering