High-Score SAP Labs Java Interview (5–7 YOE) — Bugfree Users' Project-First Playbook

bugfree.ai is an advanced AI-powered platform designed to help software engineers master system design and behavioral interviews. Whether you’re preparing for your first interview or aiming to elevate your skills, bugfree.ai provides a robust toolkit tailored to your needs. Key Features:

150+ system design questions: Master challenges across all difficulty levels and problem types, including 30+ object-oriented design and 20+ machine learning design problems. Targeted practice: Sharpen your skills with focused exercises tailored to real-world interview scenarios. In-depth feedback: Get instant, detailed evaluations to refine your approach and level up your solutions. Expert guidance: Dive deep into walkthroughs of all system design solutions like design Twitter, TinyURL, and task schedulers. Learning materials: Access comprehensive guides, cheat sheets, and tutorials to deepen your understanding of system design concepts, from beginner to advanced. AI-powered mock interview: Practice in a realistic interview setting with AI-driven feedback to identify your strengths and areas for improvement.

bugfree.ai goes beyond traditional interview prep tools by combining a vast question library, detailed feedback, and interactive AI simulations. It’s the perfect platform to build confidence, hone your skills, and stand out in today’s competitive job market. Suitable for:

New graduates looking to crack their first system design interview. Experienced engineers seeking advanced practice and fine-tuning of skills. Career changers transitioning into technical roles with a need for structured learning and preparation.

High-Score SAP Labs Java Interview (5–7 YOE) — Bugfree Users' Project-First Playbook

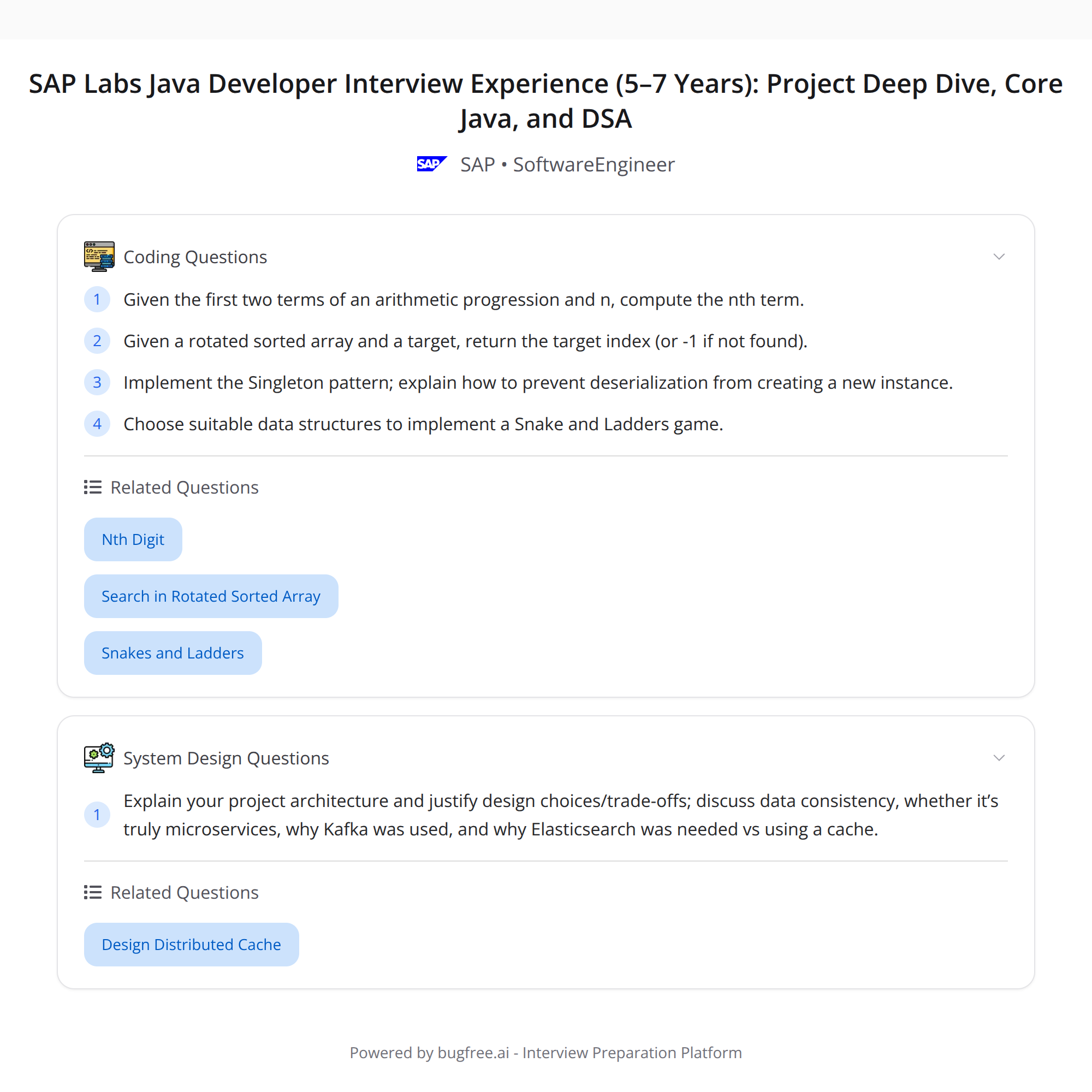

Posted by "bugfree users": a succinct, high-yield account of a successful SAP Labs Java Developer interview (5–7 years experience). The process had two focused rounds:

- Round 1: deep project dive + architecture trade-offs.

- Round 2: rapid-fire core Java, patterns and DSA.

Below is a cleaned, enriched playbook that highlights questions asked, how to prepare, and short sample responses you can adapt.

Quick summary of the rounds

Round 1 (project-first)

- Heavy focus on the candidate's project: design decisions, data consistency, whether the system is "really" microservices, choice of Kafka, choice of Elasticsearch vs a simpler cache.

- Two DSA problems: nth term of an arithmetic progression (AP); search in a rotated sorted array.

Round 2 (rapid-fire)

- Ownership in stack, Strategy pattern, garbage collection, HashMap vs Hashtable internals, Singleton + deserialization safety, data structure approach for Snake & Ladders, REST responses and HTTP codes.

Key takeaway: know your project cold, and be able to switch fast between design reasoning, Java internals/patterns, and algorithmic thinking.

Round 1 — Project deep-dive: what to expect and how to answer

Interviewers will probe concrete trade-offs. Be specific: mention alternatives you considered, why you rejected them, the metrics you used, and real incidents (e.g., outages or performance tuning) if any.

Common topics and how to address them:

"Is it really microservices?"

- Explain your service boundaries, ownership, independent deployability, team ownership, data ownership and communication patterns. If you used a monolithic or a modularized monolith, say so and justify (operational complexity vs team size, latency, data consistency needs).

"Design trade-offs & data consistency"

- Describe transactional boundaries, consistency model (strong vs eventual), how you handled distributed transactions (sagas, compensating actions) or chose to avoid them. Give concrete examples: cross-service update that required eventual consistency and how you reconciled it.

"Why Kafka?"

- Mention use cases: decoupling producers/consumers, high throughput, durability, replayability, ordering guarantees (per-partition) and use of consumer groups for scaling. Also explain alternatives you considered (e.g., RabbitMQ, Kinesis) and why Kafka fit better.

"Why Elasticsearch vs simpler caching?"

- Explain that Elasticsearch is for full-text search, flexible querying, scoring, analytics and near real-time indexing. A simple cache (Redis) only gives key-value lookups and limited query capability. If you used both (ES for search + Redis for hot data), say so and why.

Tips for project questions:

- Keep a concise elevator pitch of your project architecture.

- Prepare 3–4 concrete design decisions you made and the metrics/outcomes (latency, throughput, cost, simplicity).

- Be ready to diagram synchronous vs asynchronous flows and explain failure scenarios and retries.

Round 1 — The two DSA tasks

1) nth term of an arithmetic progression (AP)

- Problem: Given first term a and difference d, find the nth term.

- Approach: Use the formula: nth = a + (n - 1) * d.

- Edge cases: large n leading to overflow (use long/BigInteger as needed), negative d, n <= 0 (validate input).

2) Search in a rotated sorted array

- Problem: Given a sorted array rotated at some pivot, search a target in O(log n).

- Approach: Modified binary search. At each step, determine which half is sorted; then decide whether the target lies in the sorted half or search the other half.

- Complexity: O(log n) time, O(1) space.

- Edge cases: duplicates (makes some steps ambiguous), small arrays, full rotation.

If asked to code, articulate the invariants and termination conditions before writing code.

Round 2 — Rapid-fire topics and how to answer them succinctly

Ownership in stack

- Clarify: stack holds frames (local variables, callers). Heap holds objects; GC collects heap objects when no live references remain. Stack frames are popped when functions return.

Strategy pattern

- Intent: define a family of algorithms, encapsulate each one, and make them interchangeable. Provide a quick example: payment processing strategies (CreditCardStrategy, UpiStrategy) injected at runtime.

Garbage collection (GC)

- Mention generations (Young, Old), major/minor GC, pause reduction strategies (G1, CMS), and basics of reachability and finalization pitfalls. If asked specifics, name GC collectors and their trade-offs.

HashMap vs Hashtable + internals

- HashMap: unsynchronized, allows null keys/values, uses array of Node + linked lists or tree bins (Java 8+), default load factor 0.75, fail-fast iterators.

- Hashtable: synchronized, legacy, does not allow null keys/values.

- Resizing and rehash costs: discuss thresholds and complexity implications.

Singleton + deserialization safety

- Problem: deserialization can create a new instance. Fix: implement readResolve() to return the singleton instance, or use enum singletons which are inherently safe.

Data structure for Snake & Ladders

- Model board as graph nodes 1..N. From each node, edges correspond to dice outcomes (1..6) unless there's a snake/ladder redirect. Use BFS to find minimum dice throws to reach the final cell.

REST responses + HTTP codes

- Common mappings: 200 OK (success), 201 Created (resource created), 204 No Content (success w/o body), 400 Bad Request (client error), 401 Unauthorized, 403 Forbidden, 404 Not Found, 409 Conflict (versioning/dup), 500 Internal Server Error.

- Also mention use of proper response bodies and consistent error schema (code, message, timestamp, requestId).

Quick sample answers / phrasing

"Why Kafka instead of direct HTTP?"

- "We needed durable, replayable streams with high throughput, and to decouple producers from consumers so downstream services could process at their own pace. Kafka's partitioning also gave us ordering where required."

"Why Elasticsearch instead of Redis?"

- "We needed complex text search, scoring and aggregations; Redis is great for hot lookups but doesn't support full-text queries or analytics. We used Redis for hot key-value caching alongside ES for search."

"How do you ensure data consistency across services?"

- "We scoped transactions to single services, used eventual consistency across services, and implemented sagas with compensating steps for cross-service flows. For critical operations, we used idempotency keys and verification steps."

Prep checklist (what to study before an interview)

- Know your project end-to-end: diagrams, data flows, failure modes.

- Core Java internals: memory model, GC, HashMap internals, synchronization primitives.

- Design patterns: Strategy, Singleton (and pitfalls), Factory, Observer.

- Distributed systems basics: messaging (Kafka), consistency models, transactional patterns (saga), how to reason about microservices boundaries.

- Algorithms & DS: binary search variants (rotated array), BFS on implicit graphs (boards), typical array/string/linked-list problems.

- HTTP/REST semantics and common status codes.

Final takeaway

Be surgical: defend your project choices with metrics and trade-offs, and switch smoothly to code-level details when asked. Interviewers expect you to know both the big picture and the Java internals that make your design work.

Good luck — and rehearse succinct stories for 4–6 design decisions from your project so you can answer confidently under pressure.

#Java #Microservices #InterviewPrep