High-Score (Bugfree Users) Interview Experience: Intuit Staff SWE TPI — AI Basics, Kafka at Scale & a PQ Check

bugfree.ai is an advanced AI-powered platform designed to help software engineers master system design and behavioral interviews. Whether you’re preparing for your first interview or aiming to elevate your skills, bugfree.ai provides a robust toolkit tailored to your needs. Key Features:

150+ system design questions: Master challenges across all difficulty levels and problem types, including 30+ object-oriented design and 20+ machine learning design problems. Targeted practice: Sharpen your skills with focused exercises tailored to real-world interview scenarios. In-depth feedback: Get instant, detailed evaluations to refine your approach and level up your solutions. Expert guidance: Dive deep into walkthroughs of all system design solutions like design Twitter, TinyURL, and task schedulers. Learning materials: Access comprehensive guides, cheat sheets, and tutorials to deepen your understanding of system design concepts, from beginner to advanced. AI-powered mock interview: Practice in a realistic interview setting with AI-driven feedback to identify your strengths and areas for improvement.

bugfree.ai goes beyond traditional interview prep tools by combining a vast question library, detailed feedback, and interactive AI simulations. It’s the perfect platform to build confidence, hone your skills, and stand out in today’s competitive job market. Suitable for:

New graduates looking to crack their first system design interview. Experienced engineers seeking advanced practice and fine-tuning of skills. Career changers transitioning into technical roles with a need for structured learning and preparation.

High-Score Interview Experience — Intuit Staff SWE (TPI)

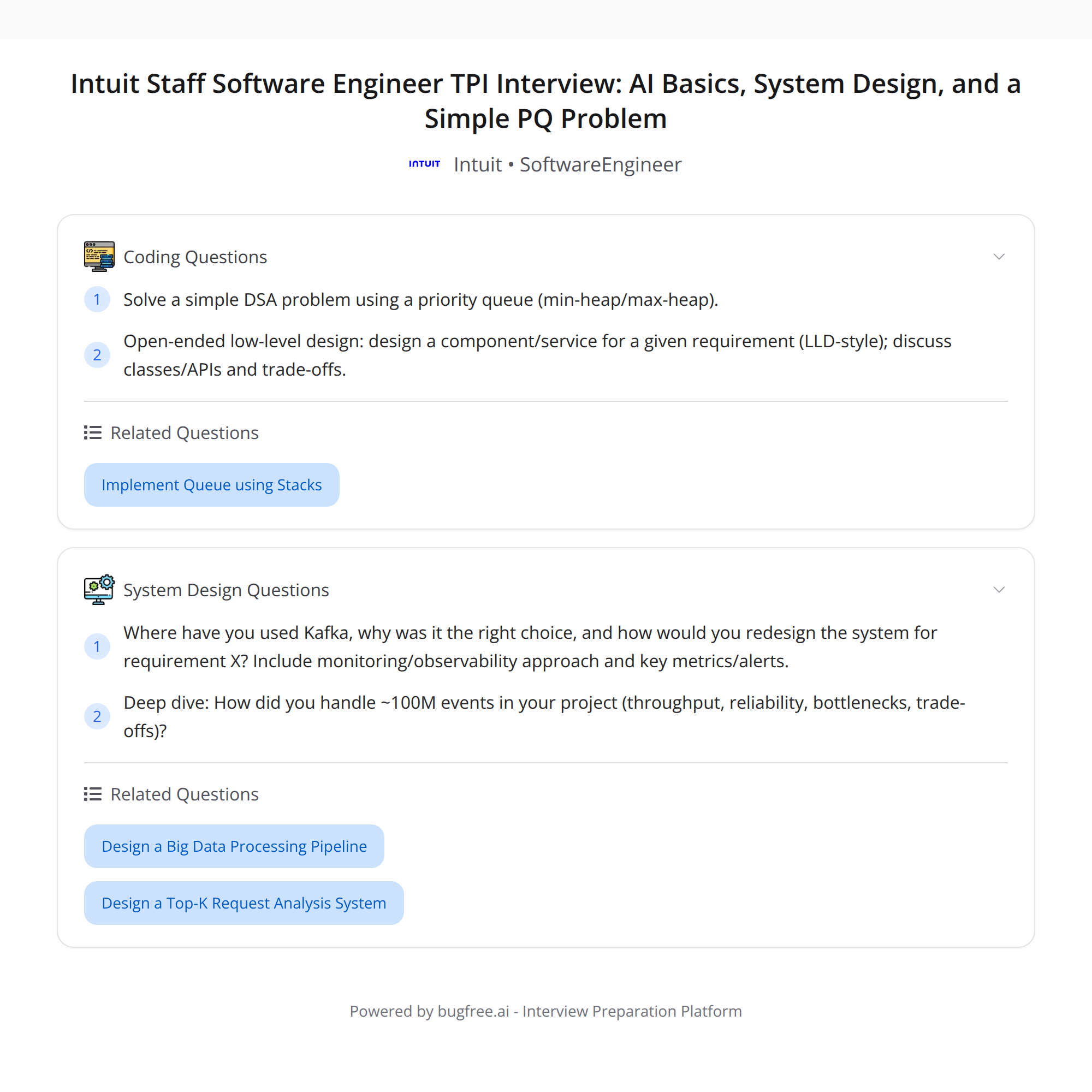

This post condenses a high-scoring interview report from Bugfree users for an Intuit Staff Software Engineer (TPI) role. The interview emphasized practical AI fundamentals, system design trade-offs, event-driven systems with Kafka at scale, monitoring/observability, and a coding round centered on a priority-queue problem plus a timeboxed low-level design (LLD) exercise.

What the interview focused on

- AI fundamentals — practical usage over heavy math. Expect questions about how to apply models, trade-offs (latency, cost, data quality), and integrations in production systems.

- System design & architecture trade-offs — clarifying requirements, choosing consistency models, fault tolerance, and scaling strategies.

- Kafka + event-driven systems at scale — throughput, partitions, ordering guarantees, backpressure, retention, and operational concerns.

- Monitoring & observability — meaningful metrics, alert thresholds, how you triage production incidents.

- Deep resume dive — be ready to explain anything you claim, especially systems that handled ~100M events: throughput numbers, bottlenecks, and reliability strategies.

- Coding — a straightforward priority-queue DSA problem and an open-ended LLD component design, time-boxed.

Detailed breakdown

1) AI basics (practical angle)

Interviewers favored practical, systems-focused AI questions rather than math-heavy theory. Topics to prepare:

- When to use pre-trained models vs fine-tuning vs prompt engineering.

- Trade-offs: inference latency, model size, cost per prediction, data privacy, and explainability.

- How to serve models in production: batching, caching, autoscaling, and A/B testing.

- Failure modes: hallucinations, dataset drift, monitoring model quality (data and prediction metrics).

Tip: Frame your answers around a specific use case — what you’d measure, how you’d mitigate risks, and an implementation sketch.

2) System design & architecture trade-offs

Expect trade-off conversations rather than one “correct” design. Common areas covered:

- Requirement clarification: load (QPS), SLAs, consistency vs availability, expected growth.

- Data model and storage choices: RDBMS vs NoSQL vs streaming.

- Caching and cache invalidation strategies.

- Failure modes and recovery: retries, idempotency, circuit breakers.

- Cost vs complexity: when multi-region, synchronous replication, or cross-region async replication makes sense.

Practice narrating why you chose an approach, what you gave up, and how you’d iterate.

3) Kafka and event-driven systems at scale

A deep resume dive often touched Kafka systems. Be ready to discuss:

- Throughput metrics (events/sec, MB/sec), consumer lag, and partition sizing.

- Ordering guarantees — when you need single-partition order vs when multiple partitions are fine.

- Producer strategies: batching, compression, idempotent producers.

- Consumer strategies: consumer groups, rebalancing, commit semantics (auto vs manual, at-least-once vs exactly-once).

- Retention policies, compaction, and schema evolution (Avro/Protobuf + schema registry).

- Common bottlenecks: network, broker CPU/disk, controller churn.

- Operational playbook: alerts to watch (broker offline, ISR shrink, consumer lag), and runbook steps for common incidents.

When describing your past Kafka system, include numbers: partitions, average throughput, peak throughput, and how you scaled to meet demand.

4) Monitoring, observability & prod troubleshooting

Interviewers care about practical observability choices. Prepare to discuss:

- Key metrics: error rates, latency P50/P95/P99, success vs failure counts, consumer lag, GC pause times.

- Instrumentation: tracing, structured logs, and business-level metrics.

- Alerting strategy: when to alert vs when to create paged incidents, thresholds, and escalation.

- A recent incident story: how you detected it, triaged, mitigated, and permanently fixed the root cause.

5) Resume deep dive — handling ~100M events

If you list large-scale systems on your resume, drill into specifics:

- Numbers: events/day, throughput, average event size, retention window.

- Bottlenecks you observed and how you mitigated them (partitioning strategy, batching, backpressure).

- Reliability techniques used: retries, deduplication, idempotency, exactly-once patterns (if any).

- Trade-offs: cost vs durability, latency vs throughput.

Interviewers will probe gaps: be honest about trade-offs and the parts you owned.

6) Coding — priority queue DSA + timeboxed LLD

The coding portion was a straightforward priority-queue problem (think heap-based solutions), followed by a time-boxed LLD design component. Recommended approach:

- For the DSA problem: state the complexity targets, outline using a binary heap or language-native priority queue, and handle edge cases (ties, updates, removals).

- For the LLD: sketch components, interfaces, data flows, and call out invariants and concurrency considerations. Keep scope tight to fit the timebox.

Example prompt types to expect:

- Implement a custom priority queue with operations: push, pop, peek, update-priority.

- Design a real-time task scheduler that uses a priority queue — describe how you’d persist state, recover from crashes, and scale workers.

Preparation tips (concrete)

- Narrate your real systems clearly: include scale (QPS/events/day), operational responsibilities, and SLA/SLI expectations.

- For AI topics, emphasize integration, monitoring, and guardrails rather than deep math derivations.

- Practice Kafka fundamentals plus operational scenarios (what alarms you’d set, what runbook steps you’d execute).

- For system design, practice short, trade-off-focused designs (10–20 minute sketches) and be prepared to dive into one subsystem.

- For coding: refresh heaps/priority queues, and practice a quick LLD sketch under a timer.

- Have at least one clear incident postmortem you can narrate (detection → mitigation → fix).

Sample questions you might get

- How would you serve an LLM for inference at low latency and high throughput? What trade-offs do you consider?

- Describe the Kafka topology you’d choose for a 100M events/day pipeline. How many partitions, retention policy, and consumer strategy?

- Given a service that suddenly sees consumer lag, how do you triage and mitigate?

- Implement a priority queue that supports decrease-key/update operations.

- Design a scheduler service that dispatches tasks by priority — how do you ensure reliability and scale?

Dos and don'ts

Dos:

- Quantify: give numbers for scale and performance.

- Focus on trade-offs and why you chose an approach.

- Tell concrete incident stories with clear outcomes and lessons.

Don'ts:

- Don’t speak in vague platitudes — be concrete about how systems behaved at scale.

- Don’t over-claim: if you didn’t implement exactly-once semantics, say so and describe what you did instead.

Final words

This interview rewards practical system thinking: clear narratives about scale and operations, pragmatic AI integration choices, strong Kafka/streaming intuition, and clean, time-boxed coding and LLD. Prepare by rehearsing concise stories from your resume, drilling Kafka and observability scenarios, and practicing a priority-queue implementation plus a quick, scoped LLD.

Good luck — focus on clarity, numbers, and trade-offs.

#SystemDesign #Kafka #SoftwareEngineering