High-Score Interview Experience (Bugfree Users): Google SWE PhD AI/ML New Grad Journey—What Actually Mattered

Posted by Bugfree Users — a high-score interview experience review.

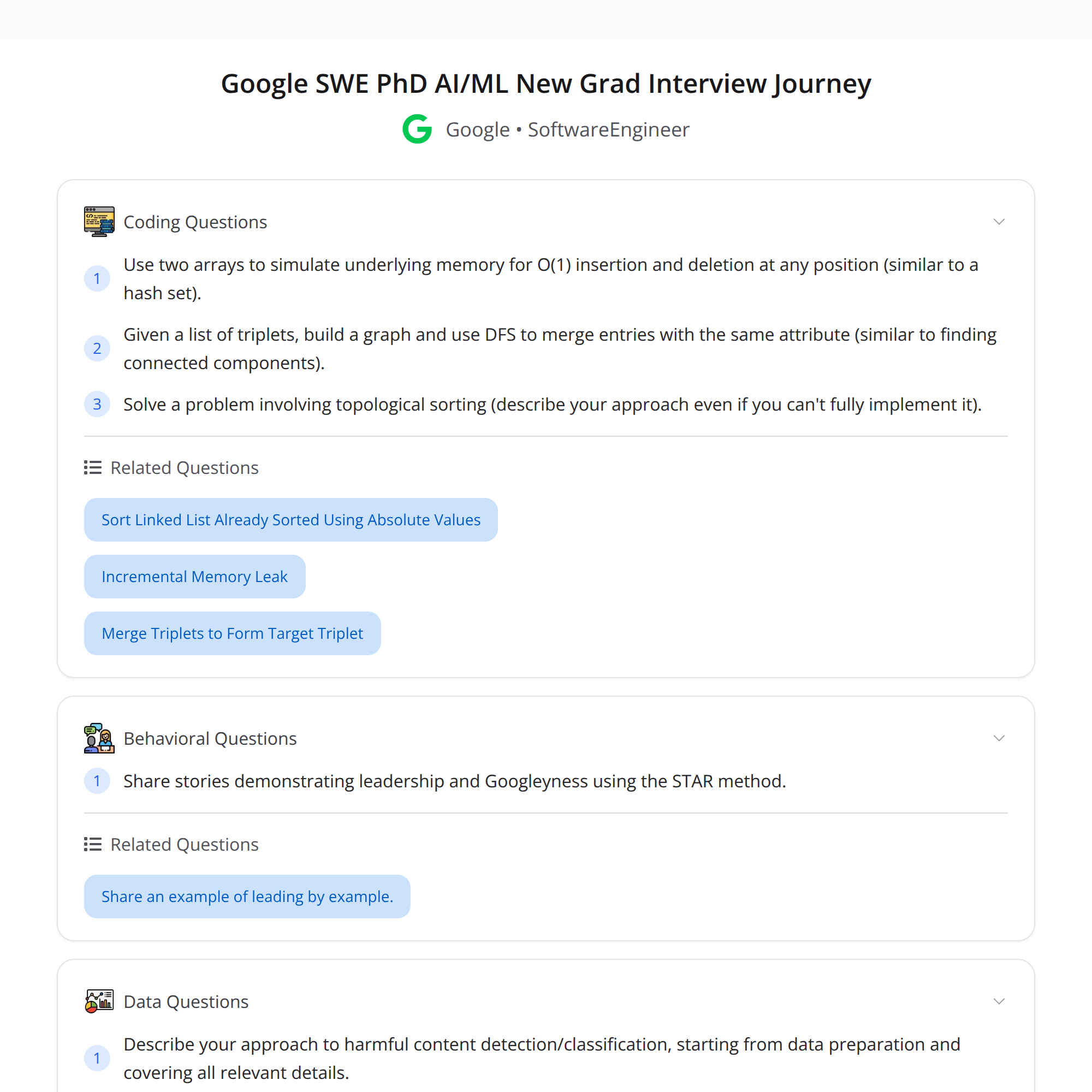

A PhD candidate from outside CS/ECE, with some GenAI research experience, summarized the rigorous Google SWE (AI/ML) New Grad interview loop they completed. Below is a cleaned-up, expanded breakdown of the timeline, what mattered most, and concrete prep advice.

The interview timeline (what actually happened)

- Recruiter outreach

- HR sync + a mock interview session

- Onsite loop: 2 coding rounds, 1 ML system question, 1 behavioral

- After onsite: 2 additional coding rounds

This loop shows that, even for a candidate with ML research experience, Google weighted coding heavily (four of the six interview rounds) while still testing ML fundamentals and leadership/behavioral fit.

Top-level takeaways

- ML fundamentals + clear leadership stories can make you stand out, especially as a PhD.

- Coding performance still matters—pacing, writing tests, and minimizing dependence on hints are critical.

- Google rarely asks exact LeetCode problems; expect “disguised” patterns. Practice pattern recognition, not memorization.

- Start early — a semester ahead if possible — and protect dedicated prep time.

What helped this candidate succeed

- ML fundamentals: clear understanding of model training, evaluation metrics, bias-variance tradeoffs, overfitting/regularization techniques, and system-level considerations (data pipelines, latency/throughput tradeoffs).

- Leadership/behavioral stories: concise STAR-format stories showing impact, tradeoffs, cross-team collaboration, and mentoring.

- Solid coding basics: strong data structures and algorithms skills, but more importantly, good pacing, clear thinking out loud, and iterative testing.

Common pitfalls to avoid

- Relying on hints during interviews. Practice solving problems with fewer prompts.

- Memorizing exact LeetCode problems. Google disguises patterns—focus on underlying techniques (two pointers, sliding window, DFS/BFS, dynamic programming, graph reductions, hashing).

- Not practicing time management. Interview time is limited; practice finishing clean solutions within the allotted time.
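
To make the "disguised pattern" point concrete, here is a minimal sliding-window sketch. The function name `longest_unique_run` and both sample inputs are hypothetical, chosen only for illustration; they are not questions reported by the candidate. The idea is that one technique answers two differently worded prompts.

```python
from typing import Dict, Hashable, Sequence


def longest_unique_run(items: Sequence[Hashable]) -> int:
    """Return the length of the longest contiguous run with no repeated element."""
    last_seen: Dict[Hashable, int] = {}  # element -> index of its latest occurrence
    start = 0  # left edge of the current window
    best = 0
    for i, item in enumerate(items):
        # If the item already sits inside the window, move the left edge
        # just past its previous occurrence so the window stays duplicate-free.
        if item in last_seen and last_seen[item] >= start:
            start = last_seen[item] + 1
        last_seen[item] = i
        best = max(best, i - start + 1)
    return best


# Framing 1: "longest substring without repeating characters".
print(longest_unique_run("abcabcbb"))  # 3

# Framing 2 (hypothetical): "longest stretch of log entries with no repeated user".
print(longest_unique_run(["u1", "u2", "u1", "u3", "u4"]))  # 4
```

Recognizing that both prompts reduce to the same window logic is the skill to practice; memorizing either wording would not help with the other.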

Actionable prep plan (a semester ahead)

Weeks 1–4: Foundation

- Brush up on data structures: arrays, linked lists, stacks, queues, heaps, hash maps, trees.

- Revisit algorithm basics: sorting, search, recursion, BFS/DFS.

Weeks 5–10: Pattern practice

- Solve focused sets of problems per pattern (sliding window, two pointers, graph traversal, DP). Aim for 3–5 problems per pattern.

- Time yourself and practice writing clean code under constraints.

Weeks 11–14: Mock interviews + ML fundamentals

- Do timed mock interviews (partner or platform) and practice explaining solutions aloud.

- Review ML fundamentals: model evaluation, loss functions, optimization algorithms, regularization, basic probability/statistics, and system design for ML services.

Weeks 15–16: Final polish

- Create 6–8 STAR stories for behavioral rounds.

- Run a few full simulated loops: coding + ML question + behavioral.

Coding interview tips (practical)

- Start with clarifying questions. Confirm input sizes, edge cases, and expected return types.

- Sketch approach before coding. Mention complexity trade-offs.

- Write a clean brute force first if stuck, then optimize.

- Add simple tests (including edge cases) and walk through them.

- If you need help, ask directed questions instead of waiting for hints (e.g., “Would optimizing the time complexity from O(n^2) to O(n) be worth exploring?”).
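
The "brute force first, then optimize, then test" flow from the tips above can be sketched as follows. The problem (find two indices whose values sum to a target) and the names `pair_with_sum_brute`, `pair_with_sum_fast`, and `check` are assumptions picked for illustration, not something from the candidate's loop.

```python
from typing import Callable, Dict, List, Optional, Tuple

Pair = Optional[Tuple[int, int]]


def pair_with_sum_brute(nums: List[int], target: int) -> Pair:
    """O(n^2) baseline: try every pair of distinct indices."""
    for i in range(len(nums)):
        for j in range(i + 1, len(nums)):
            if nums[i] + nums[j] == target:
                return (i, j)
    return None


def pair_with_sum_fast(nums: List[int], target: int) -> Pair:
    """O(n) expected time: remember the index of each value seen so far."""
    seen: Dict[int, int] = {}
    for j, value in enumerate(nums):
        need = target - value
        if need in seen:
            return (seen[need], j)
        seen.setdefault(value, j)  # keep the earliest index for each value
    return None


def check(solver: Callable[[List[int], int], Pair]) -> None:
    """Walk a solver through normal and edge cases, as you would aloud."""
    cases = [([2, 7, 11, 15], 9), ([3, 3], 6), ([1, 2, 3], 100), ([], 0), ([5], 5)]
    for nums, target in cases:
        result = solver(nums, target)
        if result is None:
            # No pair reported: confirm against the baseline that none exists.
            assert pair_with_sum_brute(nums, target) is None
        else:
            i, j = result
            assert i != j and nums[i] + nums[j] == target


check(pair_with_sum_brute)
check(pair_with_sum_fast)
print("all cases pass")
```

The two solvers may return different valid pairs for the same input, so the checks verify that a returned pair is valid instead of comparing outputs directly. Stating that kind of detail aloud is part of the communication interviewers look for.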

ML interview tips

- Know how to compare models using metrics appropriate to the task (precision/recall, ROC-AUC for classification; RMSE, MAE for regression).

- Be ready to discuss feature engineering, data imbalance handling, cross-validation, and deployment tradeoffs (latency, monitoring, data drift).

- For system-level ML questions, present a clear pipeline: data ingestion → preprocessing → model training → validation → serving → monitoring.
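
For the metrics mentioned above, it helps to be able to write the definitions from scratch. The sketch below uses plain Python and made-up labels and predictions (all values are illustrative assumptions); in practice a library such as scikit-learn would normally be used instead.

```python
import math
from typing import Sequence, Tuple


def precision_recall(y_true: Sequence[int], y_pred: Sequence[int]) -> Tuple[float, float]:
    """Precision and recall for binary labels, where 1 is the positive class."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    # Return 0.0 when a denominator is zero; this convention is a choice, not a rule.
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


def mae(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Mean absolute error."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def rmse(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Root mean squared error; penalizes large errors more than MAE does."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


# Classification: 2 true positives, 1 false positive, 1 false negative.
p, r = precision_recall([1, 0, 1, 1, 0, 0], [1, 1, 0, 1, 0, 0])
print(round(p, 3), round(r, 3))  # 0.667 0.667

# Regression: errors of 1, 0, 2, 0 give MAE 0.75 and RMSE sqrt(1.25).
truth = [3.0, 5.0, 2.0, 7.0]
preds = [2.0, 5.0, 4.0, 7.0]
print(mae(truth, preds))             # 0.75
print(round(rmse(truth, preds), 3))  # 1.118
```

The regression example shows why the choice of metric matters: the single error of 2 pulls RMSE well above MAE, which is the kind of tradeoff worth explaining when asked to compare models.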

Behavioral / leadership tips

- Use STAR (Situation, Task, Action, Result) and keep stories concise (2–3 minutes each).

- Emphasize impact with measurable outcomes when possible.

- Include examples of technical leadership (designing systems, mentoring students, leading experiments) and cross-functional collaboration.

Mock interviews & mental prep

- Do regular mocks under timed conditions. Record them if possible and review for clarity and pacing.

- Practice explaining your thought process clearly; interviewers value reasoning over perfect solutions.

Final thoughts

A PhD candidate with GenAI research experience can draw on deep ML knowledge and leadership stories, but must still demonstrate reliable coding ability and interview discipline. Start early, focus on pattern recognition and fundamentals, and practice communicating clearly under time pressure.

Good luck — carve out focused prep time and iterate on weak spots.
