High-Score Interview Experience: Google ML SWE (PhD) Loop — What the Tough Follow-ups Really Test

bugfree.ai is an advanced AI-powered platform designed to help software engineers master system design and behavioral interviews. Whether you’re preparing for your first interview or aiming to elevate your skills, bugfree.ai provides a robust toolkit tailored to your needs. Key Features:

150+ system design questions: Master challenges across all difficulty levels and problem types, including 30+ object-oriented design and 20+ machine learning design problems. Targeted practice: Sharpen your skills with focused exercises tailored to real-world interview scenarios. In-depth feedback: Get instant, detailed evaluations to refine your approach and level up your solutions. Expert guidance: Dive deep into walkthroughs of all system design solutions like design Twitter, TinyURL, and task schedulers. Learning materials: Access comprehensive guides, cheat sheets, and tutorials to deepen your understanding of system design concepts, from beginner to advanced. AI-powered mock interview: Practice in a realistic interview setting with AI-driven feedback to identify your strengths and areas for improvement.

bugfree.ai goes beyond traditional interview prep tools by combining a vast question library, detailed feedback, and interactive AI simulations. It’s the perfect platform to build confidence, hone your skills, and stand out in today’s competitive job market. Suitable for:

New graduates looking to crack their first system design interview. Experienced engineers seeking advanced practice and fine-tuning of skills. Career changers transitioning into technical roles with a need for structured learning and preparation.

High-Score Interview Experience: Google ML SWE (PhD) Loop — What the Tough Follow-ups Really Test

A concise write-up from a high-scoring candidate (non-CS background) who completed Google’s ML SWE PhD loop (4 rounds). This summary highlights what each round focused on, the key follow-ups asked, and practical takeaways for preparing effectively.

Quick overview

- Interview type: Google ML SWE (PhD) loop

- Rounds: 4 (ML fundamentals, Behavioral, Coding #1, Coding #2)

- Candidate background: non-CS

- Common theme: solve the core quickly, then expect optimizations and harder variants

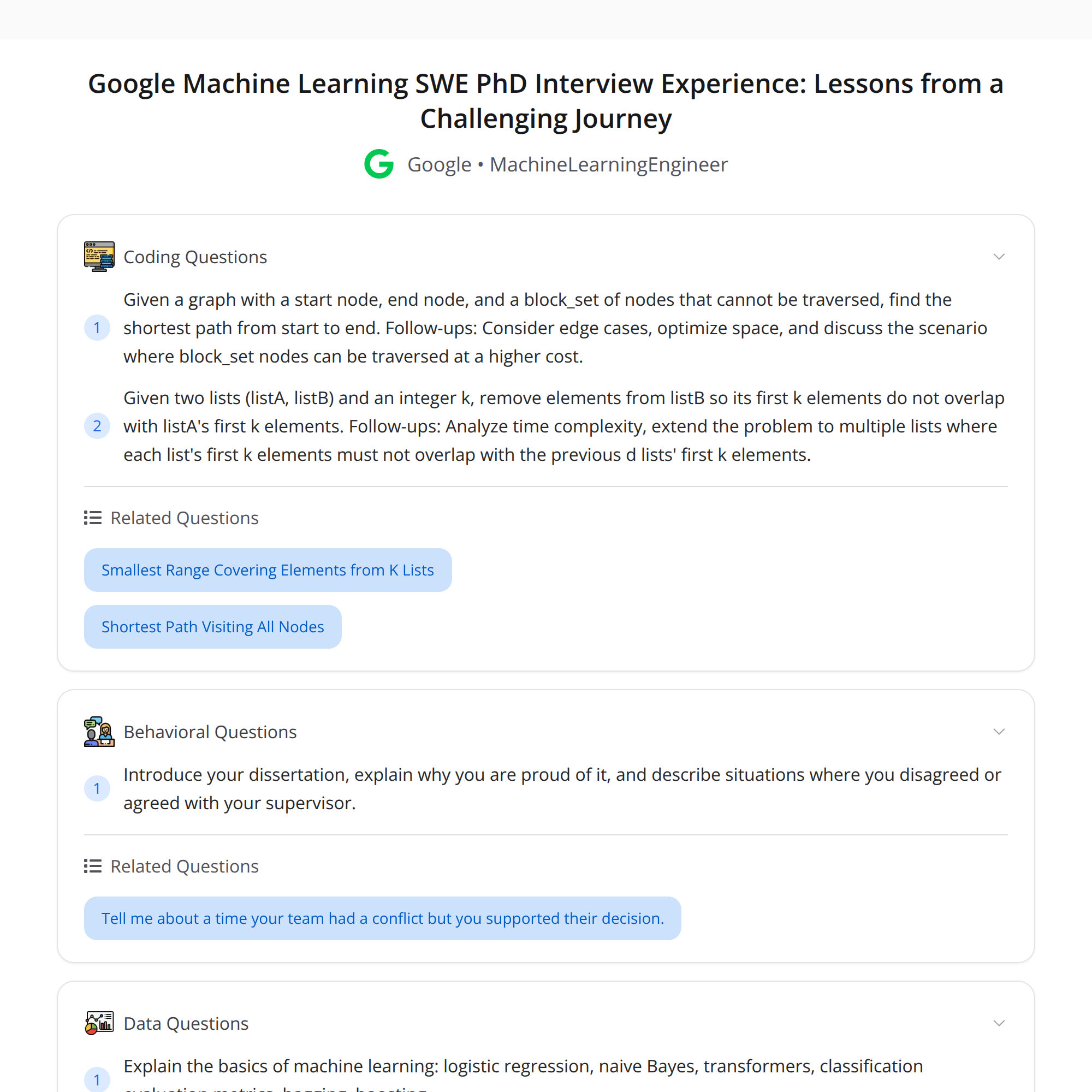

ML fundamentals (round content)

Topics covered:

- Logistic regression

- Naive Bayes

- Transformers (architecture/intuition)

- Evaluation metrics (precision, recall, F1, AUC, etc.)

- Ensemble methods (bagging vs boosting)

What they tested:

- Depth of conceptual understanding (not just definitions)

- Knowing when to use each model and their trade-offs

- Interpreting metrics in context (class imbalance, business trade-offs)

Prep tips:

- Be ready to explain assumptions, limitations, and complexity trade-offs.

- Review example scenarios where one metric is preferred over another.

Behavioral (round content)

Focus areas:

- Impact of your dissertation (or research) — articulating novelty, impact, and metrics of success

- Handling disagreement with a supervisor — communication, data-driven persuasion, escalation strategy

Prep tips:

- Use STAR format: Situation, Task, Action, Result. Quantify impact where possible.

- Prepare at least one concrete example of a disagreement and how you reached a constructive outcome.

Coding round 1 — Shortest path with blocked nodes

Problem sketch:

- Find shortest path in a grid/graph when some nodes are blocked.

- Core solution: BFS for unweighted shortest path.

Follow-ups / harder variants asked:

- Space optimization — reduce memory usage (e.g., in-place marking, using bitsets, compressing visited structure).

- Variant with higher traversal cost — edges/nodes with weights. This pushes toward Dijkstra or A* and reasoning about heuristics if applicable.

Key expectations:

- First, deliver a correct BFS implementation quickly.

- Then explain and implement optimizations while keeping correctness.

- Finally, adapt to weighted traversal by discussing algorithmic changes and complexity.

Prep tips:

- Practice BFS/DFS and common space optimizations.

- Be ready to justify switching to Dijkstra and to discuss admissible heuristics if A* comes up.

Coding round 2 — Top-k / list-avoidance constraint

Problem sketch:

- Given listA (top-k items) and listB, remove items from listB so the top-k selection doesn’t overlap with listA.

- Extension: multiple lists with constraint “avoid items that appear in the last d lists.”

Follow-ups / harder variants asked:

- Generalize to multiple lists, enforcing an "avoid last d lists" constraint.

- Consider performance when lists are large or when k is large relative to list sizes.

Key expectations:

- Provide a clear core solution (hash sets, priority queues) quickly.

- Then discuss scalability, edge cases, and trade-offs for streaming or memory-limited scenarios.

Prep tips:

- Be comfortable with sets, heaps, frequency maps, and sliding-window style constraints.

- Think about online/streaming versions if inputs are too large to store.

Key takeaways

- Solve the core problem quickly and correctly — interviewers expect a working baseline fast.

- Expect iterative follow-ups: time/space optimizations and problem generalizations.

- Explain trade-offs and clearly state complexity (time & space) after each improvement.

- For ML rounds, focus on intuition, assumptions, and when a model is appropriate.

- For behavioral, be concrete: quantify impact and show collaborative problem-solving.

Practical checklist to prepare

- Brush up: BFS/DFS, Dijkstra, heaps, hash sets, priority queues.

- Practice optimizing memory and time — in-place, bitsets, streaming.

- Review ML fundamentals: logistic regression, Naive Bayes, transformers, evaluation metrics, bagging vs boosting.

- Prepare 3–4 behavioral stories with clear metrics and outcomes.

- During interviews: communicate assumptions, test edge cases, and iterate from core solution to optimized variants.

Good luck — focus on getting a correct baseline quickly, then use the extra time to demonstrate depth by optimizing and generalizing your solution.