High-Score (Bugfree Users) DoorDash SWE Interview Experience: Coding + VO Deep Dive

bugfree.ai is an advanced AI-powered platform designed to help software engineers master system design and behavioral interviews. Whether you’re preparing for your first interview or aiming to elevate your skills, bugfree.ai provides a robust toolkit tailored to your needs. Key Features:

150+ system design questions: Master challenges across all difficulty levels and problem types, including 30+ object-oriented design and 20+ machine learning design problems. Targeted practice: Sharpen your skills with focused exercises tailored to real-world interview scenarios. In-depth feedback: Get instant, detailed evaluations to refine your approach and level up your solutions. Expert guidance: Dive deep into walkthroughs of all system design solutions like design Twitter, TinyURL, and task schedulers. Learning materials: Access comprehensive guides, cheat sheets, and tutorials to deepen your understanding of system design concepts, from beginner to advanced. AI-powered mock interview: Practice in a realistic interview setting with AI-driven feedback to identify your strengths and areas for improvement.

bugfree.ai goes beyond traditional interview prep tools by combining a vast question library, detailed feedback, and interactive AI simulations. It’s the perfect platform to build confidence, hone your skills, and stand out in today’s competitive job market. Suitable for:

New graduates looking to crack their first system design interview. Experienced engineers seeking advanced practice and fine-tuning of skills. Career changers transitioning into technical roles with a need for structured learning and preparation.

High-Score (Bugfree Users) DoorDash SWE Interview Experience: Coding + VO Deep Dive

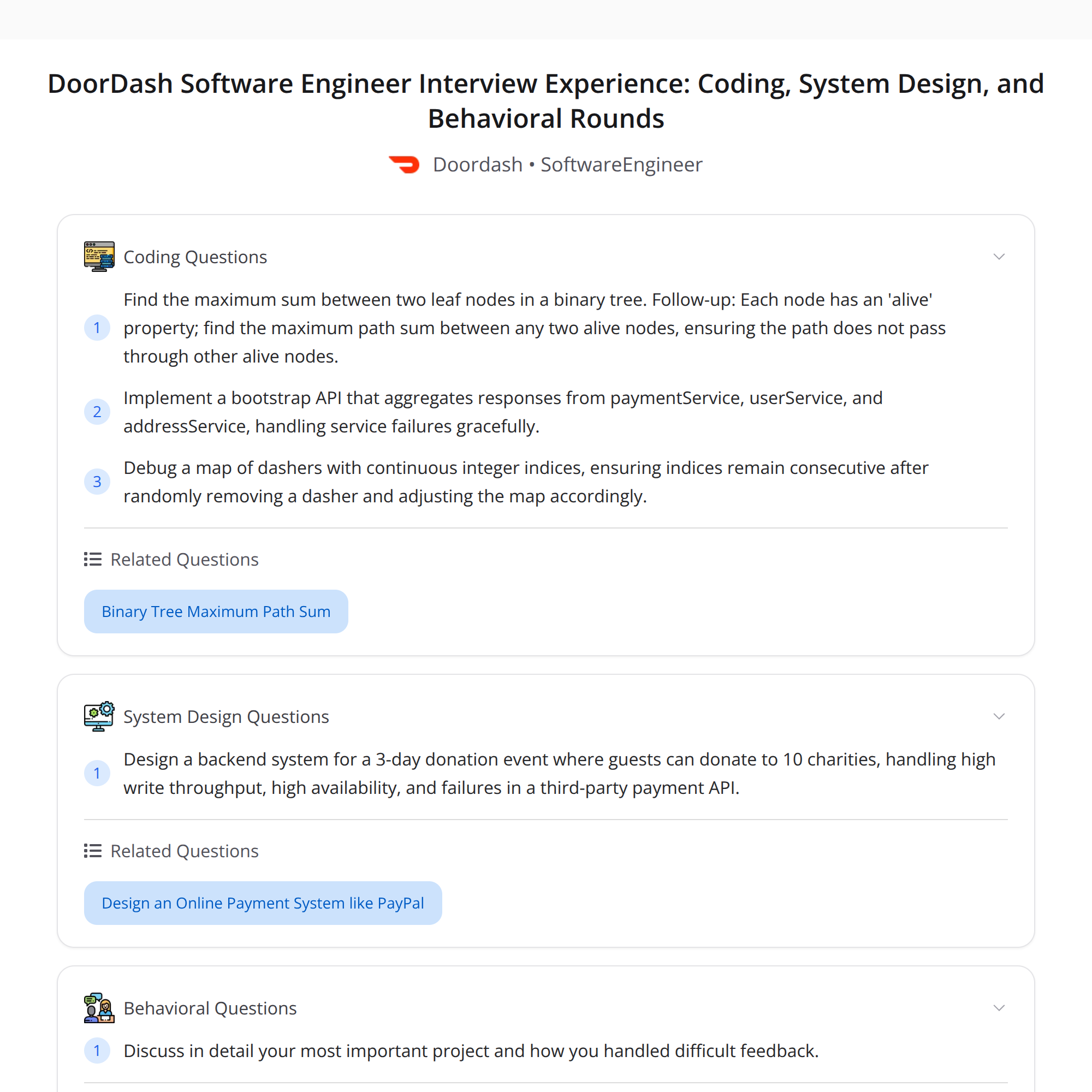

A compact, enriched recap of a high-score DoorDash SWE loop shared by Bugfree users. This breakdown covers the coding question, VO (code craft) design, debugging discussion, system design, and behavioral focus areas — plus practical tips and expected trade-offs.

Quick summary

- The loop emphasized strong fundamentals and real-world engineering trade-offs.

- Interview stages: Online coding -> VO Code Craft -> Debugging -> System Design -> Behavioral.

- Questions tested correctness, complexity reasoning, resiliency, and ownership.

1) Online Coding — Max path sum between two leaf nodes

Problem: find the maximum path sum between two leaf nodes in a binary tree.

Solution outline:

- Perform a DFS that computes the max root-to-leaf path sum for each subtree and updates a global maximum when a node connects two child paths (left + node + right).

- For each node, return the maximum path sum from that node down to any leaf in its subtree. While returning, update a global max using left + node.val + right when both children exist.

Complexity: O(n) time, O(h) stack space (h = tree height).

Follow-up (alive nodes): compute the maximum sum between two "alive" nodes such that the path does not pass through any other alive node.

- Conceptual approach:

- Annotate nodes as alive/dead.

- For each node, compute two values:

- bestDownNoAlive: best downwards path sum from this node to any node in the subtree that contains no alive nodes (useful when the current node is not alive and you want to pass through it),

- bestDownToAlive: best downwards path sum ending at an alive node in the subtree without passing through other alive nodes (only valid if the path's endpoint is an alive node).

- Combine children carefully: if the current node is alive, you can combine child bestDownNoAlive values to form paths that start or end at this alive node, but you must not allow additional alive nodes in between.

- Update global max only when forming a path whose endpoints are both alive and there are no other alive nodes on it.

This follow-up requires careful state-passing in DFS and handling edge cases where subpaths include alive nodes. Tests should include chains and trees with multiple alive nodes to validate constraints.

Tips for coding interviews:

- Talk through base cases (null node, leaf nodes).

- Explain the global-max technique and why you can't just return a single value without auxiliary state for the follow-up.

- Mention complexity and test edge cases.

2) VO Code Craft — Bootstrap API aggregating payment/user/address services

Scenario: design a bootstrap endpoint that aggregates responses from multiple microservices (payments, users, addresses). Services may intermittently return 500s.

Key requirements and trade-offs:

- Availability vs. consistency: prefer returning a best-effort response rather than blocking the client when some downstream services are failing.

- Resilience: deal with partial failures and provide reasonable defaults.

Practical design patterns to demonstrate:

- Timeouts and per-call retry with exponential backoff (bounded retries).

- Circuit breaker around flaky downstream services to avoid cascading failures.

- Fallback strategies:

- Use cached/stale data when available (short TTL), or

- Provide safe defaults (e.g., "unknown address" or empty payment metadata) and annotate the response with flags indicating degraded data.

- Idempotent requests when retries are performed.

- Standardized error codes and telemetry (metrics, tracing) for quick diagnosis.

Implementation notes:

- Compose responses asynchronously (parallel calls) with a deadline; assemble partial results when a deadline is reached.

- Retry policy: limit retries, add jitter to avoid thundering herd.

- Integration tests and contract tests to ensure the aggregator handles various downstream behaviors.

What interviewers listen for:

- Clear failure modes and mitigation strategies (retries vs circuit-breakers vs fallback).

- Observability and how you'd monitor service health and user impact.

3) Debugging — Maintain continuous indices in a dasher map after random removals

Problem statement: keep a compact/continuous index range for dashers while removals happen at random times.

Approaches:

- Indirection layer (recommended): map stable dasher IDs to compact indices via a logical indirection table. Benefits:

- Avoids shifting huge arrays on each removal.

- Index compaction can be done asynchronously.

- Free-list + background compaction:

- Maintain a free index list; allocate from it for new dashers.

- Periodically run a background compaction that rewrites contiguous indices and swaps entries atomically.

Concurrency and thread-safety:

- Use fine-grained locks, or optimistic concurrency (CAS) for index table updates.

- Minimize locks on hot paths: reads should be lock-free if possible; writes may use short critical sections.

- Ensure atomic pointer swaps when re-mapping indices to prevent inconsistent views.

Handling async remote failures:

- Use a retry + dead-letter queue pattern for remote operations that may fail.

- For operations that must succeed before publishing an index change, use two-phase commit patterns sparingly; prefer eventual consistency with compensating actions if possible.

Debugging tips to discuss in an interview:

- Describe the invariant you want to preserve (e.g., indices 0..N-1 assigned or a stable indirection map).

- Explain how you'd instrument and test your design under concurrent churn.

4) System Design — High-write, highly available donation event

Requirements recap:

- Extremely high write volume during an event.

- High availability and low user-facing latency.

- Third-party payment gateways may fail; donations must be recorded and reconciled.

- End-of-event aggregation for reporting.

An architecture sketch:

- API Gateway / LB -> Ingress service -> Write path → message queue (Kafka/Kinesis)

- Producers write minimal donation records to the queue with an idempotency key.

- Stream processors / workers:

- Persist canonical events to a durable storage (hot DB: Cassandra, DynamoDB, or time-series store optimized for writes).

- Invoke payment gateway asynchronously; place failed attempts into a retry queue or dead-letter store (S3) for offline reconciliation.

- Emit aggregated metrics to in-memory aggregators (per-shard) for near-real-time counters.

- Aggregation and end-of-event reporting:

- Use stream processing (Flink, ksqlDB, or Spark Streaming) to create rolling aggregates and final reductions.

- For final, authoritative results, run a reconcile job that consumes the canonical audit log and the payment outcomes, applying deduplication and correction.

Handling 3rd-party payment failures:

- Persist donation intent immediately (append-only log) even if charge is pending.

- Retry payments with exponential backoff + bounded attempts.

- If retries fail, archive to durable storage and surface for offline processing & manual review; mark state as "pending" and include in reconciliation.

- Ensure idempotency keys so retries or late acknowledgements don't double-charge.

Scaling and consistency considerations:

- Partition by event or donation id to scale writes across partitions.

- Use eventual consistency for speed; provide strong reconciliation at the end.

- Protect against data loss with replication and write-ahead logs.

5) Behavioral — deep project ownership & tough feedback

What interviewers want to hear:

- Concrete ownership examples: how you drove ambiguous projects end-to-end, prioritized scope, and measured impact.

- Receiving tough feedback: show humility, a learning plan, and concrete follow-up actions (e.g., changed process, added tests, improved docs).

Suggested structure for answers (STAR variation):

- Situation: briefly set context.

- Task: your responsibility.

- Action: what you did (decisions, trade-offs, collaboration).

- Result: measurable outcomes and what you learned.

Example line to use: "I owned the entire flow from ingestion to reconciliation, instrumented the pipeline, and iterated on failure handling after observing a 2% failure rate in the first hour." Keep specifics and metrics where possible.

Key takeaways & interview tips

- Emphasize correctness first, then explain complexity and optimizations.

- For design/VO questions, present clear failure modes and well-known patterns (timeouts, retries, circuit breakers, fallbacks, idempotency).

- For debugging, state invariants, concurrency controls, and how you'd test under load.

- Show ownership in behavioral answers: state the problem, your decisions, measurable outcomes, and what you changed.

Good luck — focus on clear communication, justify trade-offs, and back explanations with small examples or sketches when possible.