High-Score (Bugfree Users) Interview Experience: Atlassian Principal MLE — ML Craft + Jira Ranking Design + Tricky Rate Limiter

bugfree.ai is an advanced AI-powered platform designed to help software engineers master system design and behavioral interviews. Whether you’re preparing for your first interview or aiming to elevate your skills, bugfree.ai provides a robust toolkit tailored to your needs. Key Features:

150+ system design questions: Master challenges across all difficulty levels and problem types, including 30+ object-oriented design and 20+ machine learning design problems. Targeted practice: Sharpen your skills with focused exercises tailored to real-world interview scenarios. In-depth feedback: Get instant, detailed evaluations to refine your approach and level up your solutions. Expert guidance: Dive deep into walkthroughs of all system design solutions like design Twitter, TinyURL, and task schedulers. Learning materials: Access comprehensive guides, cheat sheets, and tutorials to deepen your understanding of system design concepts, from beginner to advanced. AI-powered mock interview: Practice in a realistic interview setting with AI-driven feedback to identify your strengths and areas for improvement.

bugfree.ai goes beyond traditional interview prep tools by combining a vast question library, detailed feedback, and interactive AI simulations. It’s the perfect platform to build confidence, hone your skills, and stand out in today’s competitive job market. Suitable for:

New graduates looking to crack their first system design interview. Experienced engineers seeking advanced practice and fine-tuning of skills. Career changers transitioning into technical roles with a need for structured learning and preparation.

High-Score Atlassian Principal MLE Interview — Notes & Tips

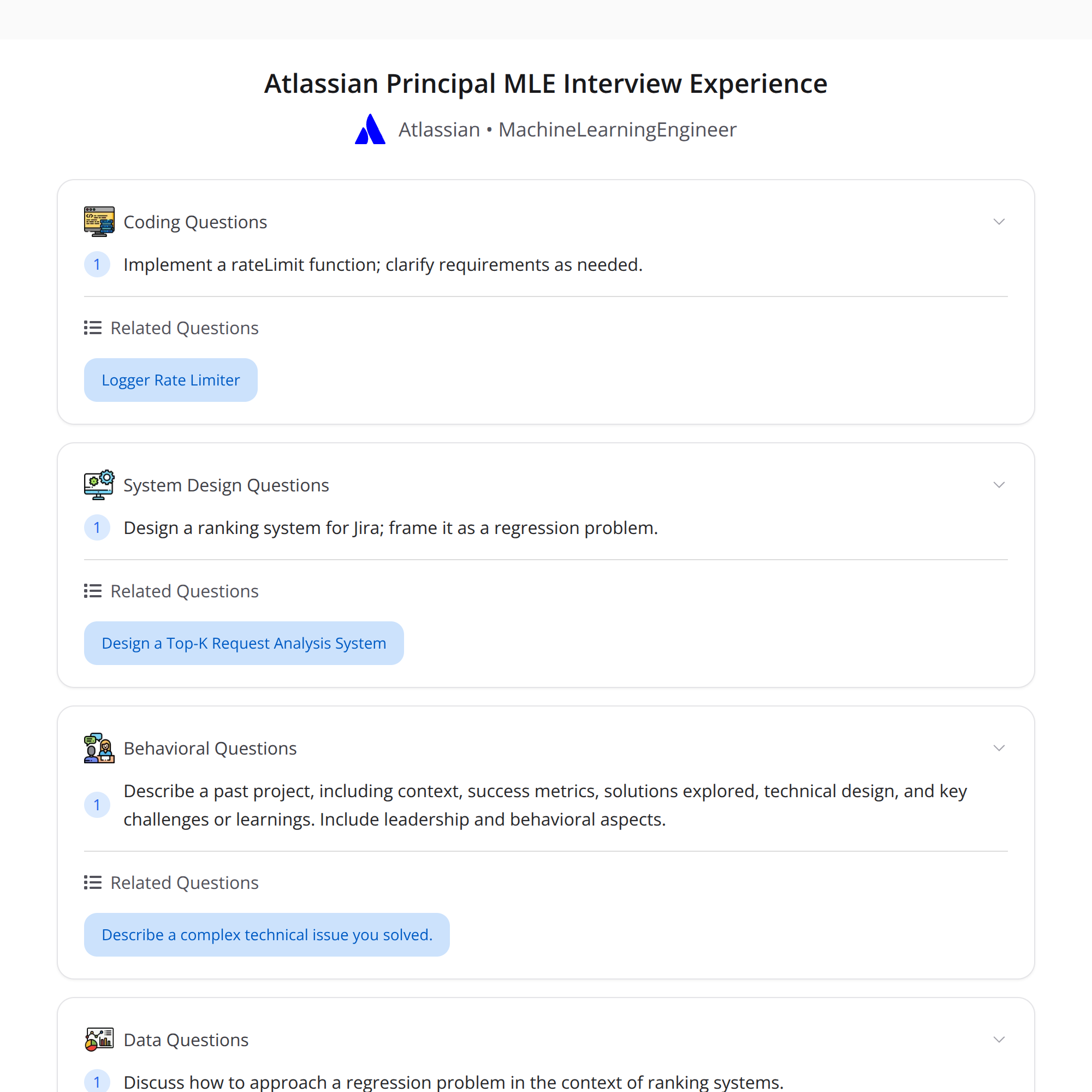

This post summarizes a high-scoring interview report from Bugfree users for the Atlassian Principal Machine Learning Engineer role. It highlights the three main rounds, what the interviewers were looking for, and practical advice you can apply when preparing: an ML Craft project deep-dive, an ML system design exercise (Jira ranking), and a coding challenge (a tricky rate limiter).

Quick overview

- ML Craft (≈1 hour): a deep-dive on a past project — context, success metrics, solution tradeoffs, technical design, and leadership/behavioral signals.

- ML System Design: design a Jira ranking system; framing it as a regression problem helped structure features, labels, evaluation, and iteration strategy.

- Coding: implement a

rateLimit()function with tricky requirements; clarifying questions, edge cases and clear communication mattered as much as the final code.

Takeaway: bring a crisp project narrative, show end-to-end thinking, and communicate tradeoffs clearly.

1) ML Craft — how to present a winning project

This round tests deep ownership, technical depth, and communication. Treat it like storytelling with engineering rigor.

What to cover (recommended structure):

- Context & goal: what product/team, who the users are, and the key question you were solving. Keep it concise.

- Success metrics: define business and ML metrics you optimized (e.g., NDCG, CTR uplift, reduced incident rate, latency targets). Be specific about targets and baselines.

- Solution overview: high-level architecture, model family, major components (feature pipeline, training, serving, monitoring).

- Design tradeoffs: why you picked approach A vs B; show awareness of alternatives and cost/complexity/performance tradeoffs.

- Technical details & challenges: describe one or two hard engineering or modeling problems and how you solved them (e.g., label noise, feature drift, scale bottlenecks).

- Results & iteration: concrete results, how you validated improvements (offline vs online), what you learned, next steps you would take.

- Leadership signals: cross-team coordination, stakeholder management, mentorship, and how you set priorities.

Tips that interviewers notice:

- Bring numbers and timelines — “we improved NDCG@10 from 0.62 to 0.71 over 3 sprints.”

- Use diagrams if needed (briefly sketch components verbally).

- Call out monitoring and guardrails (alerts, data quality checks, A/B test setup).

- Be honest about tradeoffs and unknowns — show a plan for mitigating risks.

2) ML System Design — Jira ranking framed as regression

The interview asked to design a Jira issue ranking system. One effective approach is to frame ranking as a regression/score prediction problem and then optimize it as a ranking task.

Suggested framing and steps:

Problem definition & constraints

- What's being ranked (issues for which user?) and what objective (relevance for triage, predicted time-to-resolution, or user engagement)?

- Latency and scale constraints (interactive UI, per-user real-time ranking vs batch pre-ranking).

Define labels

- Regression-style continuous labels: e.g., probability the user will interact, time-to-completion (normalized), or a relevance score derived from historical actions.

- Alternatively, transform into ordinal or pairwise labels if you have click/accept signals.

Feature design

- Query/user features: user role, team, past interactions, watch list.

- Issue features: priority, status, labels, creator, components, text embeddings (summary/description), time since last update.

- Context/temporal features: time-of-day, sprint deadlines, component-specific backlog size.

Modeling choices

- Start simple: gradient boosted trees (XGBoost/LightGBM) or linear models for interpretability and fast iteration.

- Move to neural ranking or pairwise approaches if you need deep text understanding (BERT embeddings + shallow combiner).

Evaluation

- Offline: NDCG@k, MAP, AUC for classification-style signals, RMSE/MAE for regression targets.

- Online: CTR on suggested issues, reduced mean time to resolve, save rate, user satisfaction surveys.

Iteration & production concerns

- Offline-to-online calibration: map predicted scores to calibrated probabilities or ranks.

- Serving: precompute scores in batch for large backlogs; use online feature store for personalized signals.

- Monitoring: drift detection, shadow testing, and A/B metrics keyed to business outcomes.

Tradeoffs and practical notes:

- If latency is tight, do a two-stage pipeline: cheap candidate retrieval (BM25/embedding nearest neighbors) then re-rank with a richer model.

- Label choice is critical: pick a label that aligns with business value (e.g., prioritize issues likely to be resolved quickly vs those the user will act on).

- Explainability matters in enterprise products — logging feature contributions and offering “why this issue” explanations help adoption.

3) Coding — the tricky rateLimit() problem

The coding round asked for a rateLimit() implementation with non-obvious constraints. The key evaluation criteria were: clarifying questions, correct logic, edge-case handling, and clear communication.

First: ask clarifying questions (examples):

- Is the limiter per-user, per-IP, or global?

- Is it a fixed window, sliding window, token bucket or leaky bucket semantics?

- Are we allowed to use persistent storage (Redis) or is this in-memory only?

- Is concurrency expected (multi-threaded/process safe)?

- What should happen when the limit is hit (reject, queue, delay)?

Common tricky constraints and how to approach them:

- Sliding window requirement: implement using timestamped hits + pruning or an efficient counter (e.g., maintain a dequeue of timestamps per key).

- Memory limits: if you must support many keys, use approximate counters (e.g., counting bloom or fixed buckets) or TTL the keys aggressively.

- Concurrency: use atomic ops in distributed stores (Redis INCR with EXPIRE), or locks if in-memory.

A simple robust approach (conceptual):

- Token bucket semantics backed by Redis:

- Store for each key: last_timestamp and tokens_remaining.

- On request: compute tokens_to_add = (now - last_timestamp) * rate; tokens = min(capacity, tokens_remaining + tokens_to_add); if tokens >= 1: decrement and allow; else reject.

- Use Redis EVAL (Lua) to keep ops atomic.

Edge cases to mention in the interview:

- Clock skew between servers — use monotonic clocks or a single source (Redis server time).

- Cold start: initialize tokens to capacity on first request.

- Burst behavior: document allowed burst size and how that affects system safety.

When coding on a whiteboard or shared editor, narrate each assumption and tradeoff, and incrementally add handling for edge cases.

Final takeaways & prep checklist

- Tell a crisp, numbers-backed story for your ML Craft project. Focus on impact, tradeoffs and leadership.

- In system design, pick a clear framing (regression for ranking can simplify label/feature design) and show how offline metrics map to product outcomes.

- For coding, ask clarifying questions early, choose a clear algorithm (token bucket/sliding window), and surface edge cases and concurrency considerations.

Prep checklist:

- Prepare 2–3 projects with: context, metric baselines, architecture diagram, challenges, and concrete outcomes.

- Practice ranking problems: feature sets, label engineering, candidate retrieval + re-rank pipelines.

- Brush up on rate-limiter patterns (fixed window, sliding window, token bucket) and how to implement them with/without Redis.

Good luck — the combination of clear storytelling, technical depth, and explicit tradeoff reasoning is what makes a high-scoring interview at this level.

#MachineLearning #SystemDesign #InterviewPrep